1 Welcome and Overview

This course is one of three that we are currently offering, covering fundamentals of open data sharing, reproducible research, ethical data use and reuse, and scalable computing for reusing large data sets.

1.0.1 Objectives

- Welcome and Introductions

- Mission and structure of the Arctic Data Center

- Plan for the Scalable Computing Course

1.1 Arctic Data Center Overview

The Arctic Data Center is the primary data and software repository for the Arctic section of the National Science Foundation’s Office of Polar Programs (NSF OPP).

We’re best known in the research community as a data archive: researchers upload their data to preserve it for the future and make it available for reuse. This isn’t the end of that data’s life, though. These data can then be downloaded for different analyses or synthesis projects. In addition to being a data discovery portal, we also offer top-notch tools, support services, training opportunities, and data rescue services.

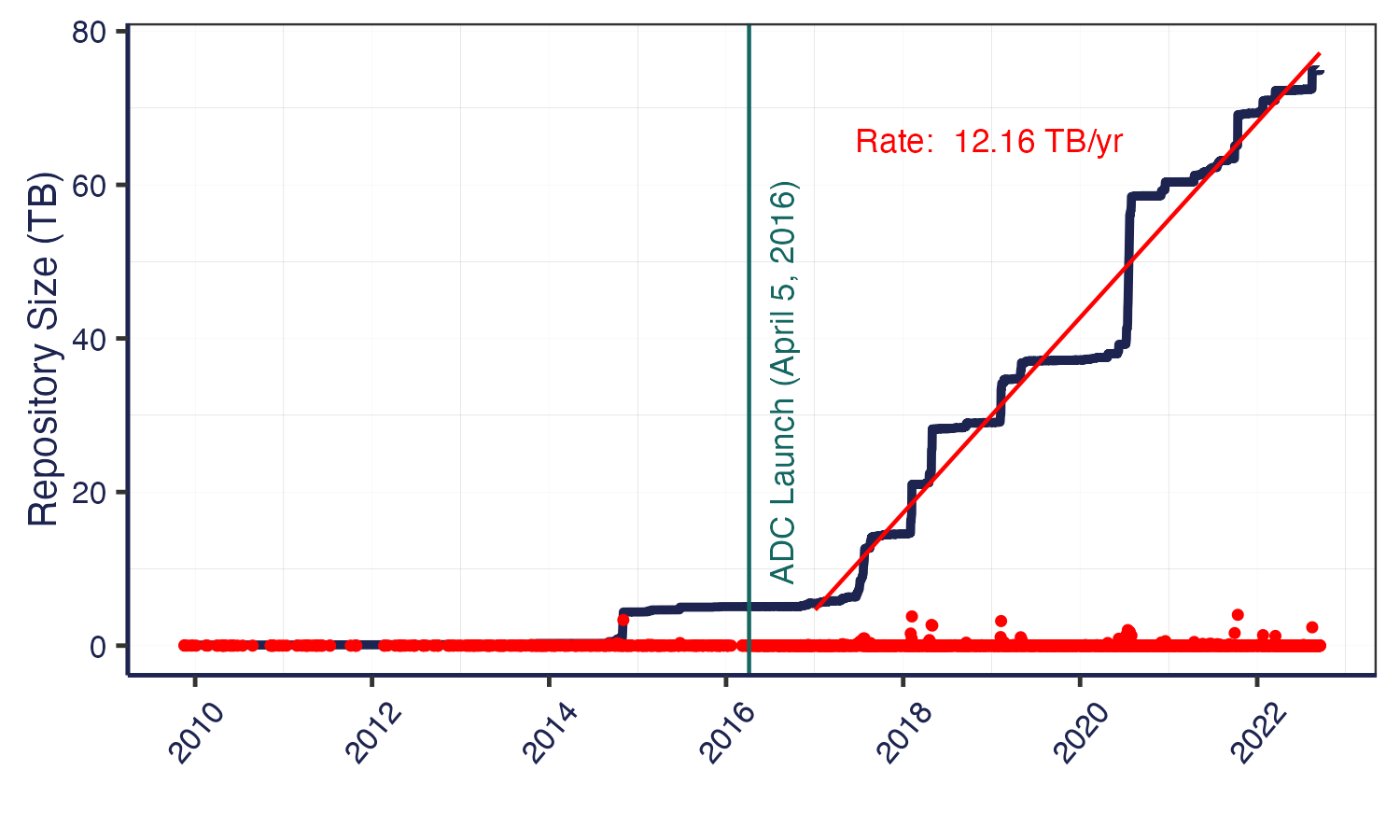

NSF has long had a commitment to data reuse and sharing. Since our start in 2016, we’ve grown substantially – from that original 4 TB of data from ACADIS to now over 71 TB. In 2021 alone, we saw 16% growth in dataset count and about 30% growth in data volume. This increase has come from advances in tools, both ours and those of the scientific community, plus active community outreach and a strong culture of data preservation from NSF and from researchers. We plan to add more storage capacity in the coming months, as researchers are coming to us with datasets in the terabytes, and we’re excited to preserve these research products in our archive. We’re projecting growth of several hundred TB this year, which has a big impact on processing time. Give us a heads-up if you’re planning a larger submission so that we can work with you and be prepared for a large influx of data.

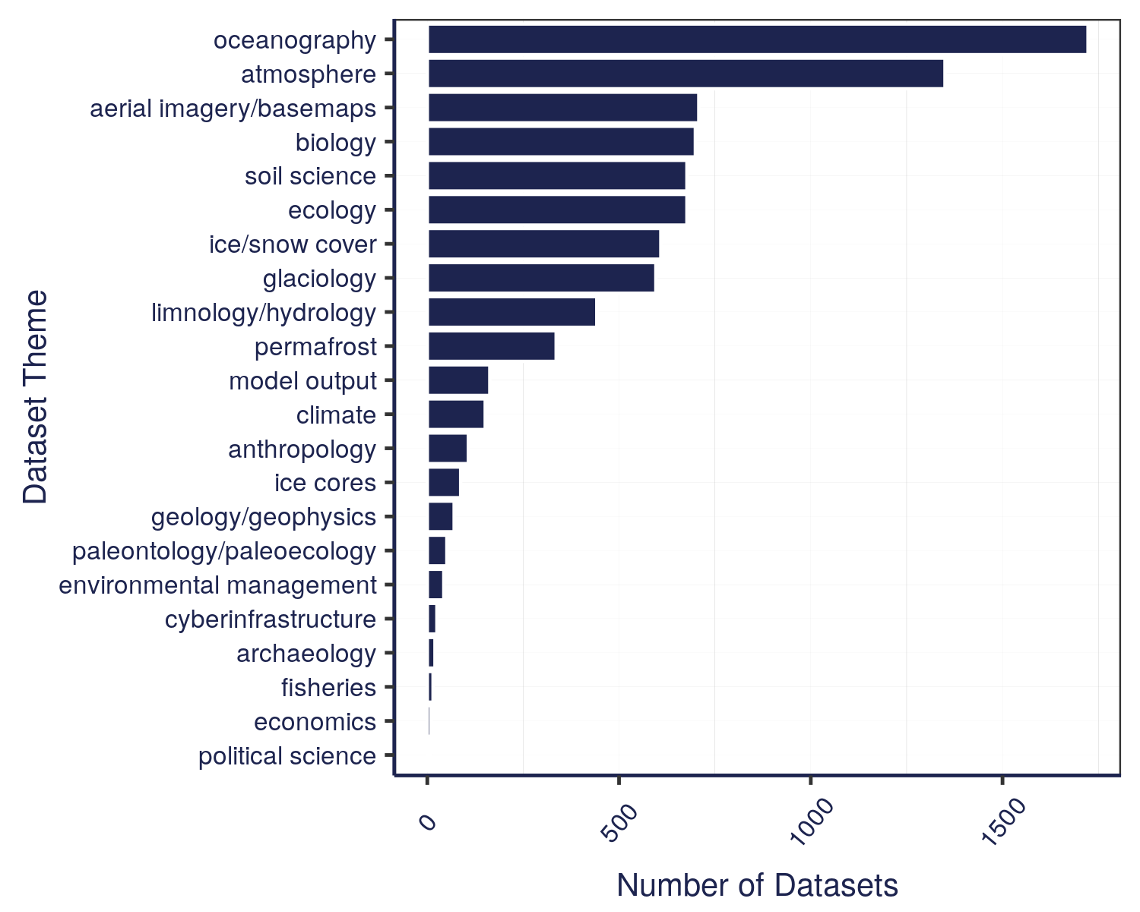

The data that we have in the Arctic Data Center comes from a wide variety of disciplines. The different programs within NSF each have a different focus. The Arctic Observing Network alone supports scientific and community-based observations of biodiversity, ecosystems, human societies, land, ice, marine and freshwater systems, and the atmosphere, as well as their social, natural, and/or physical environments, so that one program already encompasses a lot. We’re also working on a way to classify the datasets by discipline, so keep an eye out for that coming soon.

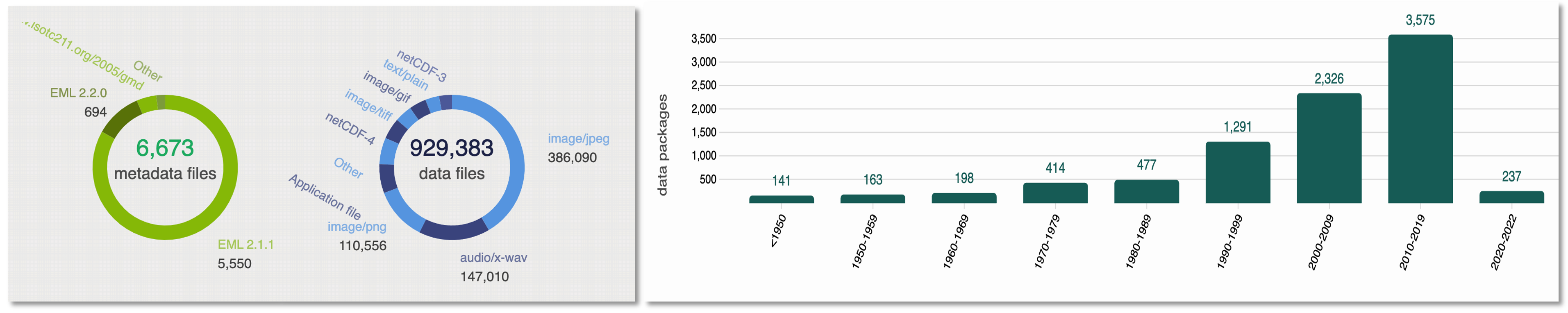

Along with that diversity of disciplines comes a diversity of file types. The most common files we hold are images, in four different file formats. Probably fewer than 200-300 of the datasets contain the majority of those images, since we have some large datasets that hold image and/or audio files from drones. Most of the 6600+ datasets are tabular. There’s a large diversity of data files, though, whether you want to look at remote sensing images, listen to passive acoustic audio files, run applications, or do something else entirely. We also cover a long period of time, at least by human standards: the data represented in our repository spans centuries.

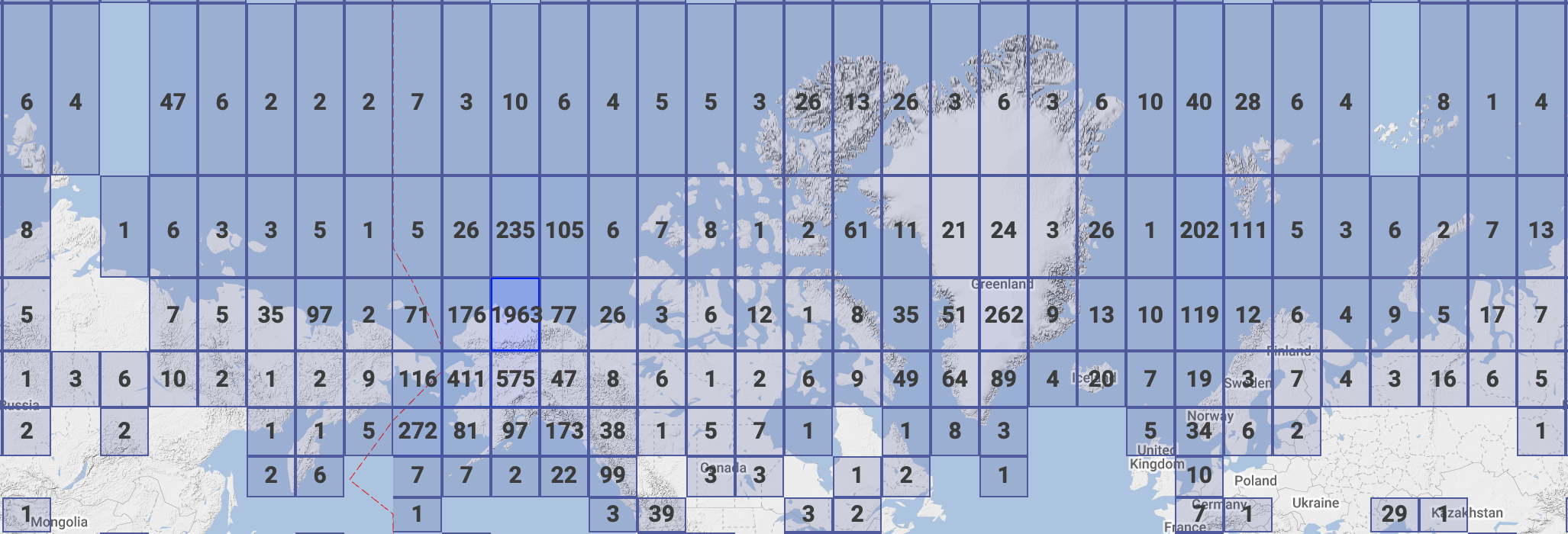

Our data also spans the entire Arctic, as well as the sub-Arctic regions.

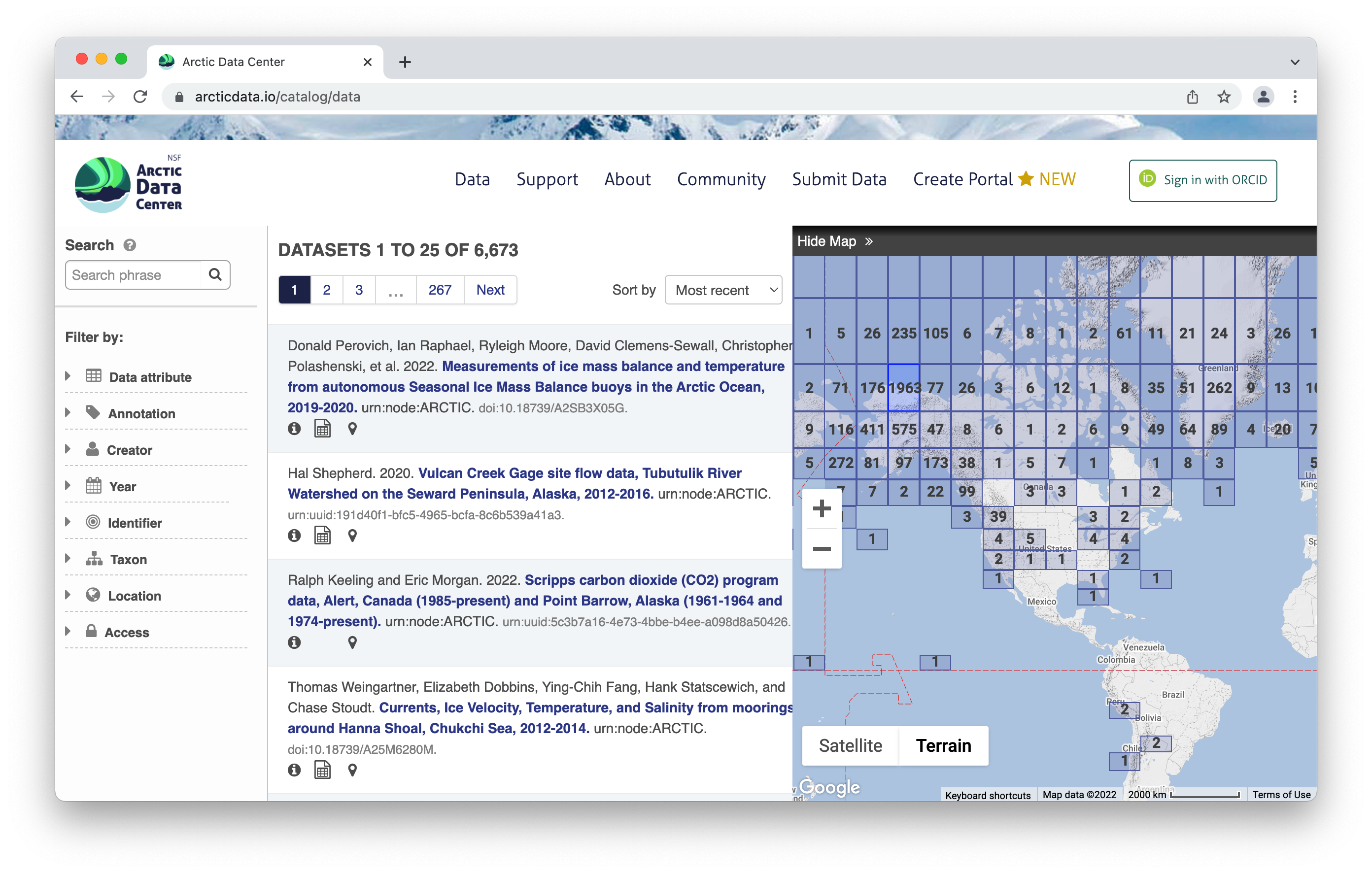

1.1.1 Data Discovery Portal

To browse the data catalog, navigate to arcticdata.io. Go to the top of the page and under data, go to search. Right now, you’re looking at the whole catalog. You can narrow your search down by map area, by a general search term, or by an attribute.

Clicking on a dataset brings you to this page. You have the option to download all the files by clicking the green “Download All” button, which zips together all the files in the dataset and saves the archive to your Downloads folder. You can also pick and choose to download just specific files.

All the raw data is in open formats to make it easily accessible and compliant with FAIR principles. For example, tabular data is stored as .csv (comma-separated values) files rather than Excel workbooks.
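One practical benefit of open formats is that no special software is needed to read them. As a minimal sketch, the Python standard library alone can parse a CSV file. The column names and values below are invented for illustration and do not come from a real dataset; an in-memory string stands in for a downloaded file.

```python
import csv
import io

# Hypothetical contents of a small tabular file (not a real dataset).
sample_csv = """site,date,temp_c
A1,2021-07-01,4.2
A1,2021-07-02,5.1
B2,2021-07-01,3.8
"""

# DictReader uses the header row as keys, so each row is a dict of strings.
rows = list(csv.DictReader(io.StringIO(sample_csv)))

# Values arrive as text, so convert before doing arithmetic.
mean_temp = sum(float(row["temp_c"]) for row in rows) / len(rows)

print(f"{len(rows)} rows, mean temperature {mean_temp:.2f} C")
```

For a file on disk, `open(path, newline="")` would take the place of `io.StringIO(sample_csv)`.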

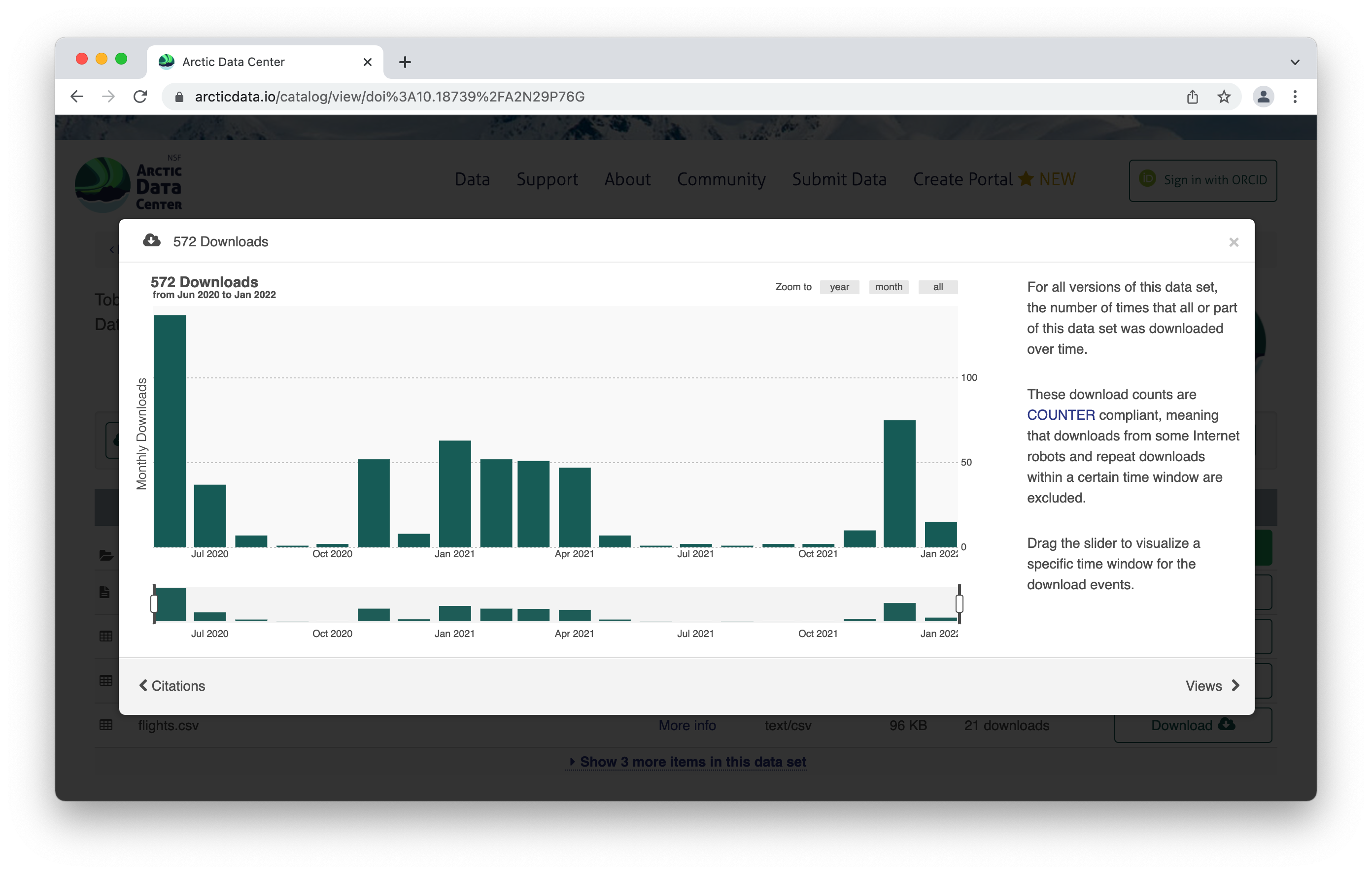

The metrics at the top show how many times this data has been cited, downloaded, and viewed. This is what it looks like when you click on the Downloads tab for more information.

Scroll down for more info about the dataset, such as the abstract and keywords. Then you’ll see more info about the data itself. This shows the data with a description, as well as info about the attributes (or variables or parameters) that were measured. The green check mark indicates that those attributes have been annotated, which means the measurements have a precise definition. Scrolling further, we also see who collected the data, where they collected it, and when they collected it, as well as any funding information like a grant number. For biological data, there is the option to add taxa.

1.1.2 Tools and Infrastructure

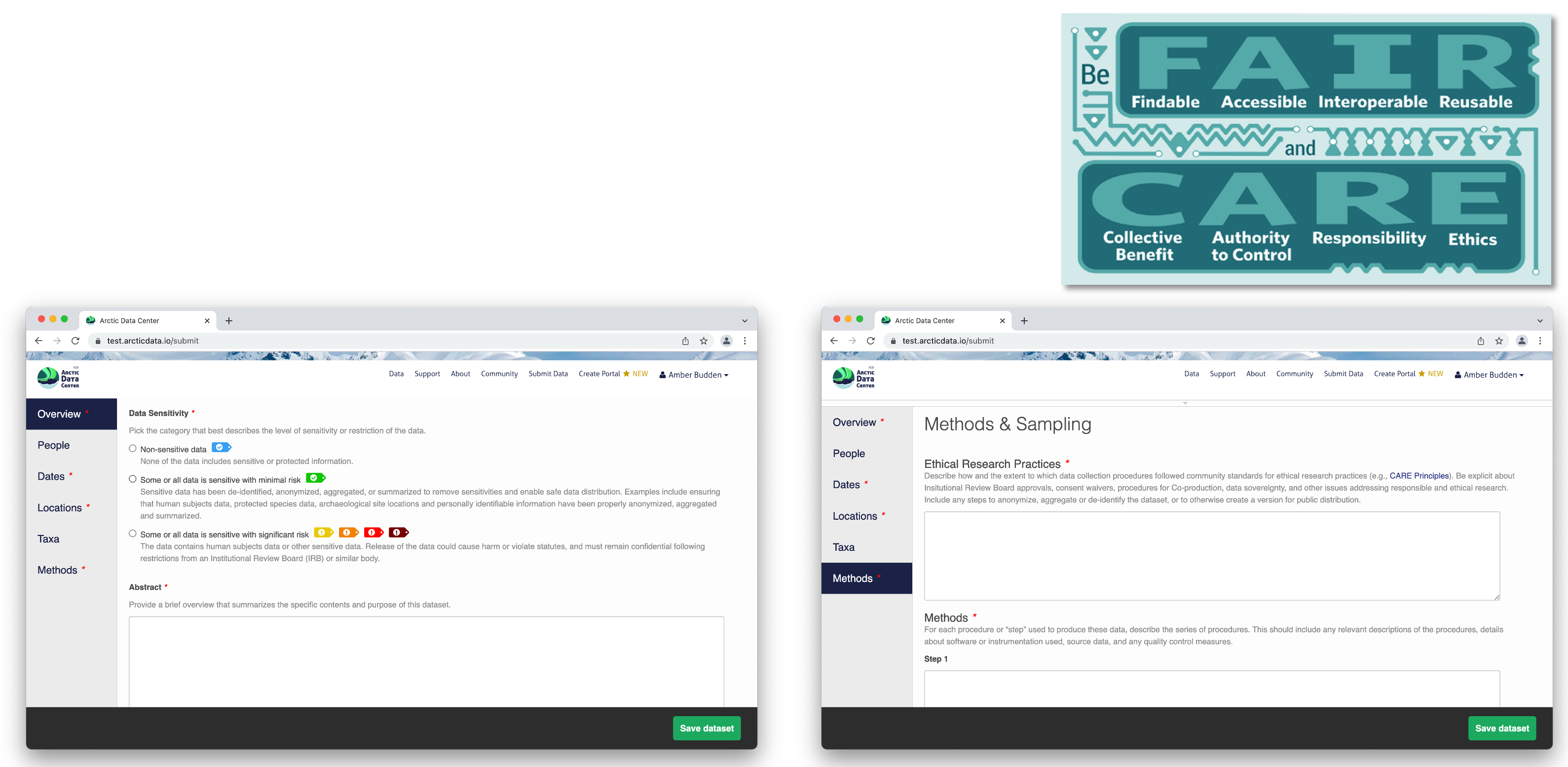

Across all our services and partnerships, we are strongly aligned with the community principles of making data FAIR (Findable, Accessible, Interoperable, and Reusable).

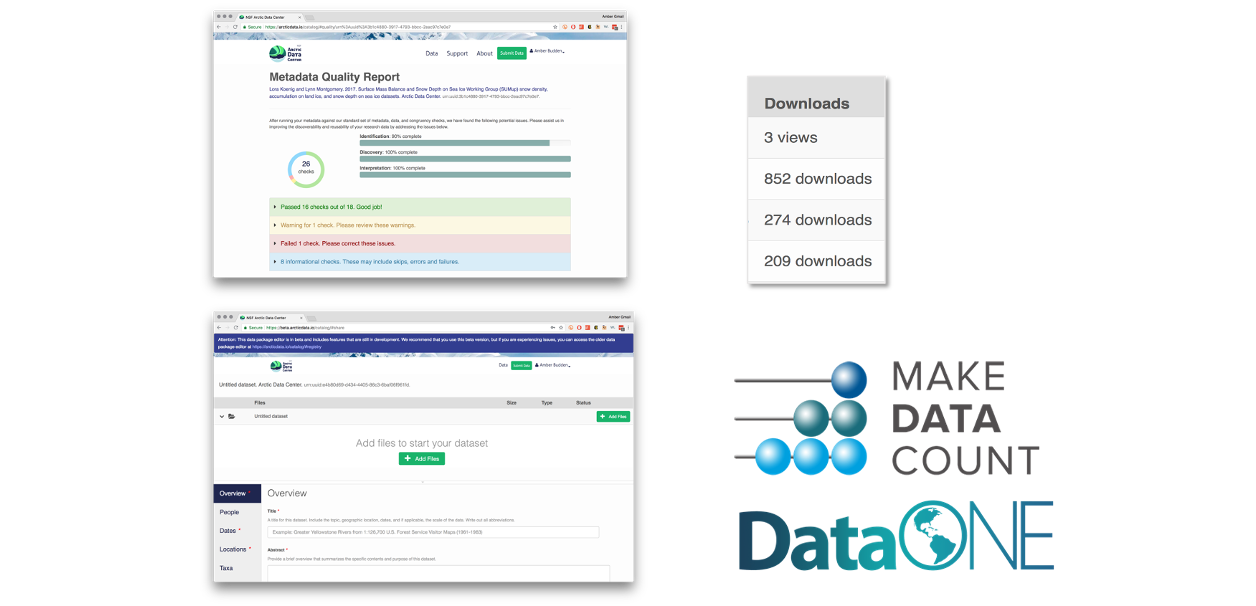

We have a number of tools available to submitters and to researchers who come to download data. We also partner with other organizations, like Make Data Count and DataONE, and leverage those partnerships to create a better data experience.

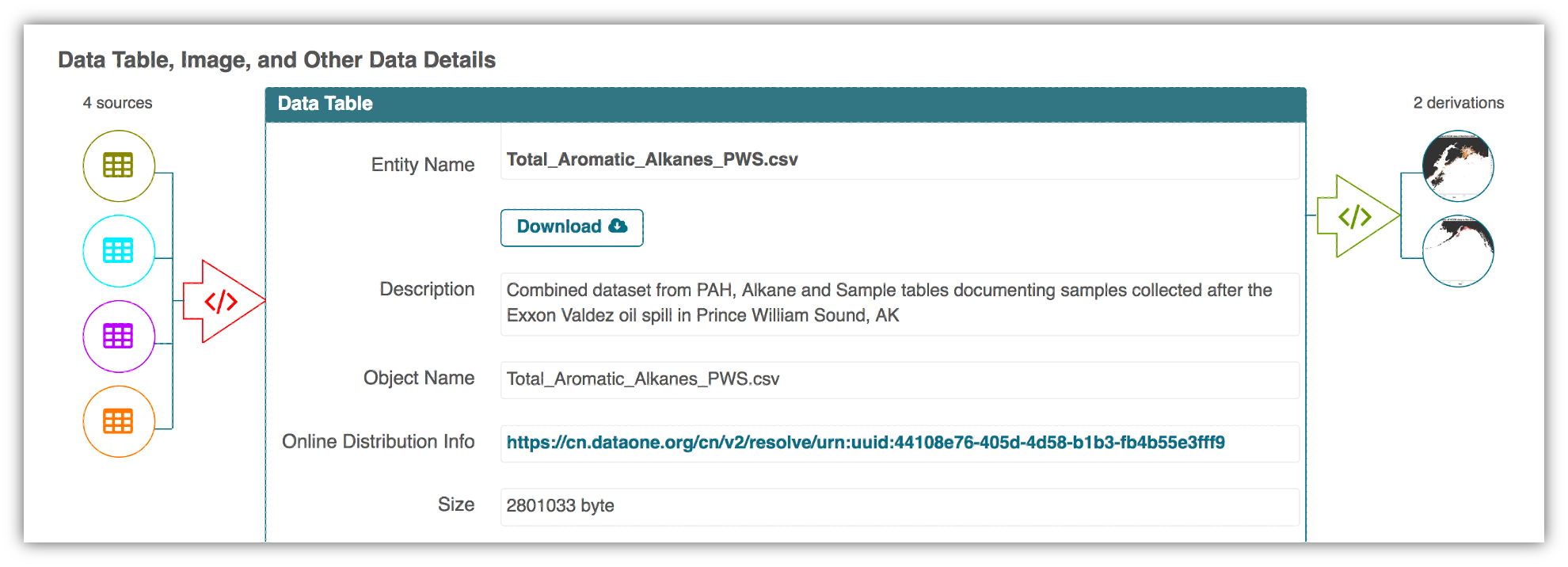

One of those tools is provenance tracking. With provenance tracking, users of the Arctic Data Center can see exactly what datasets led to what product, using the particular script that the researcher ran.

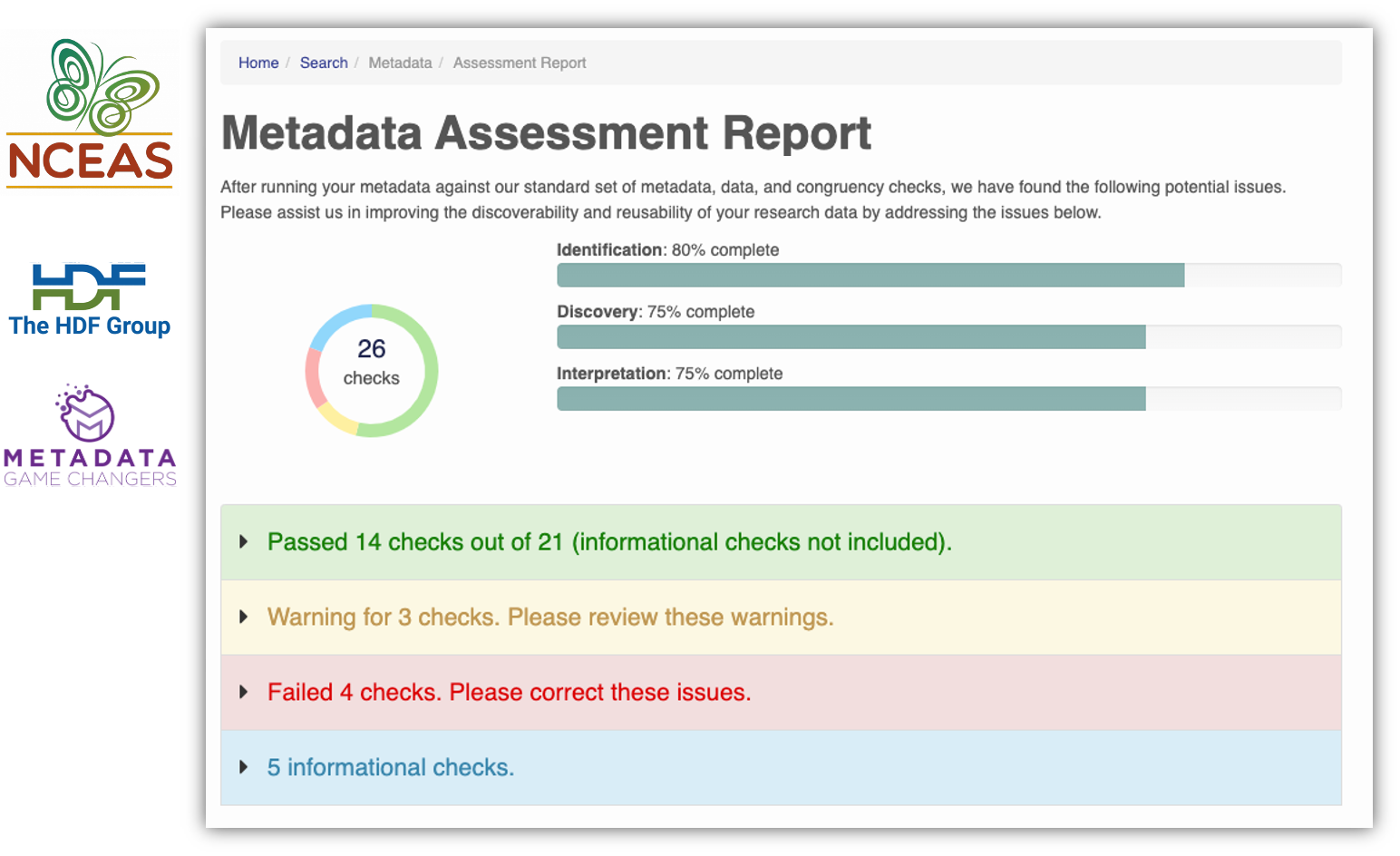

Another tool is our set of metadata quality checks. We know that data quality is important for researchers to find datasets and to trust them enough to use them in another analysis. For every submitted dataset, the metadata is run through a quality check to increase the completeness of submitted metadata records. The results of these checks are visible to the submitter and to anyone who views the data, which helps submitters see how complete their metadata is before submission. That way, the metadata that is uploaded to the Arctic Data Center is as complete as possible, and close to following the guideline of being understandable to any reasonable scientist.
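To make the idea of a completeness check concrete, here is a minimal Python sketch. It is not the Arctic Data Center’s actual quality suite: the field names in `REQUIRED_FIELDS`, the `completeness` function, and the sample record are all hypothetical and chosen only for illustration.

```python
# Hypothetical list of fields a record is expected to fill in.
REQUIRED_FIELDS = ("title", "abstract", "contact", "methods")


def completeness(record):
    """Return (score, missing) for a metadata record given as a dict of strings.

    A field counts as missing when it is absent, None, or only whitespace.
    The score is the fraction of required fields that are present.
    """
    missing = [
        field for field in REQUIRED_FIELDS
        if not (record.get(field) or "").strip()
    ]
    score = 1 - len(missing) / len(REQUIRED_FIELDS)
    return score, missing


# Invented example record with a blank abstract and no methods.
record = {
    "title": "Glacier photographs",
    "abstract": "   ",
    "contact": "someone@example.org",
}

score, missing = completeness(record)
print(f"completeness: {score:.0%}, missing: {missing}")
```

A report like this, shown before submission, is the kind of feedback that lets a submitter fill in gaps early.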

1.1.3 Support Services

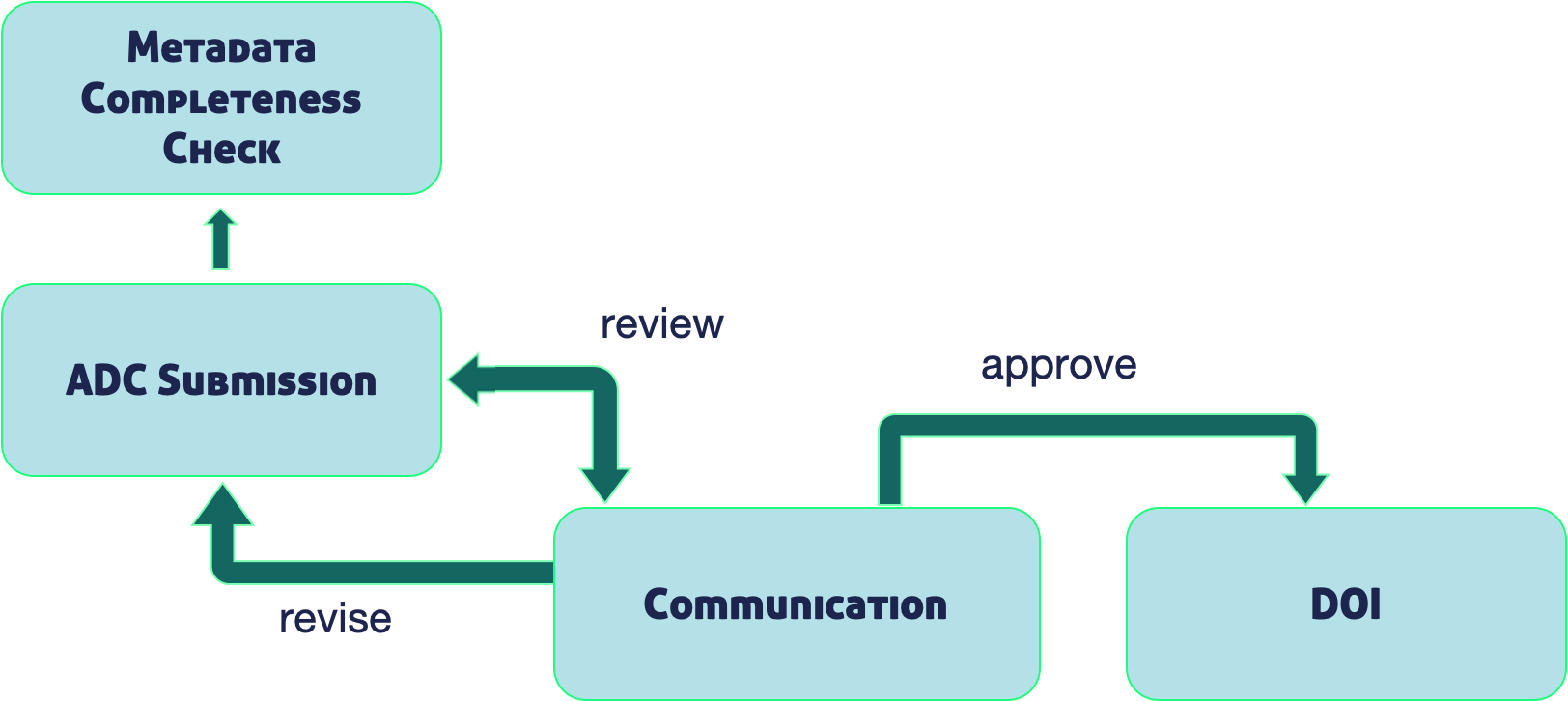

Metadata quality checks are the automatic way that we ensure quality of data in the repository, but the real quality and curation support is done by our curation team. The process by which data gets into the Arctic Data Center is iterative, meaning that our team works with the submitter to ensure good quality and completeness of data. When a submitter submits data, our team gets a notification and begins to evaluate the data for upload. They then go in and format it for input into the catalog, communicating back and forth with the researcher if anything is incomplete or not communicated well. This process can take anywhere from a few days to a few weeks, depending on the size of the dataset and how quickly the researcher gets back to us. Once that process has been completed, the dataset is published with a DOI (digital object identifier).

1.1.4 Training and Outreach

In addition to the tools and support services, we also interact with the community via trainings like this one and outreach events. We run workshops at conferences like the American Geophysical Union, Arctic Science Summit Week and others. We also run an intern and fellows program, and webinars with different organizations. We’re invested in helping the Arctic science community learn reproducible techniques, since it facilitates a more open culture of data sharing and reuse.

We strive to keep our fingers on the pulse of what researchers like yourselves are looking for in terms of support. We’re active on Twitter to share Arctic updates, data science updates, and specifically Arctic Data Center updates, but we’re also happy to feature new papers or successes that you have had working with the data. We can also take data science questions that come up in the course of your research, or questions about how to write a quality data management plan. Follow us on Twitter and interact with us – we love to be involved in your research as it’s happening as well as after it’s completed.

1.1.5 Data Rescue

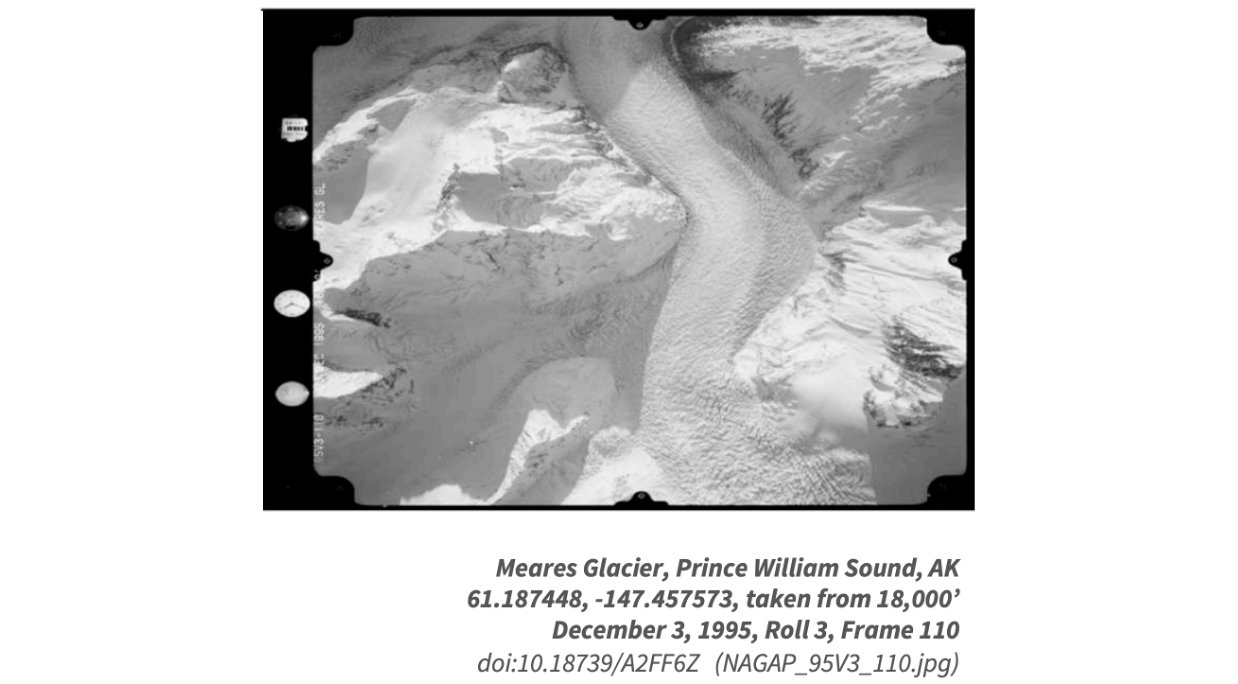

We also run data rescue operations. We digitized Austin Post’s collection of glacier photos that were taken from 1964 to 1997. There were 100,000+ files and almost 5 TB of data to ingest, and we reconstructed flight paths, digitized the images of his notes, and documented image metadata, including the camera specifications.

1.1.6 Who Must Submit

Projects that have to submit their data include all Arctic Research Opportunities through the NSF Office of Polar Programs. That data has to be uploaded within two years of collection. The Arctic Observing Network has a shorter timeline – their data products must be uploaded within 6 months of collection. Additionally, we have social science data, though that data often has special exceptions due to sensitive human subjects data. At the very least, the metadata has to be deposited with us.

Arctic Research Opportunities (ARC)

- Complete metadata and all appropriate data and derived products

- Within 2 years of collection or before the end of the award, whichever comes first

ARC Arctic Observing Network (AON)

- Complete metadata and all data

- Real-time data made public immediately

- Within 6 months of collection

Arctic Social Sciences Program (ASSP)

- NSF policies include special exceptions for ASSP and other awards that contain sensitive data

- Human subjects, governed by an Institutional Review Board, ethically or legally sensitive, at risk of decontextualization

- Metadata record that documents non-sensitive aspects of the project and data

- Title, Contact information, Abstract, Methods

For more complete information, see our “Who Must Submit” webpage.
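The ARC and AON timelines above amount to simple date arithmetic. The following is a minimal sketch, not an official policy calculator: the function names are hypothetical, and reading “2 years” and “6 months” as calendar months (with the day clamped to the end of shorter months) is an assumption made for illustration.

```python
import calendar
from datetime import date


def add_months(start, months):
    """Shift a date forward by whole calendar months, clamping to month end."""
    total = start.year * 12 + (start.month - 1) + months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def arc_deadline(collected, award_end):
    """ARC: 2 years after collection or the award end, whichever comes first."""
    return min(add_months(collected, 24), award_end)


def aon_deadline(collected):
    """AON: 6 months after collection."""
    return add_months(collected, 6)


# Invented dates: the award ends before the 2-year mark, so the award end wins.
print(arc_deadline(date(2021, 7, 15), date(2022, 12, 31)))
# Invented date: August 31 plus 6 months clamps to the last day of February.
print(aon_deadline(date(2020, 8, 31)))
```

The `min` call is the “whichever comes first” rule: the earlier of the two candidate dates is the one that applies.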

Recognizing the importance of sensitive data handling and of ethical treatment of all data, the Arctic Data Center submission system provides the opportunity for researchers to document the ethical treatment of data and how collection is aligned with community principles (such as the CARE principles). Submitters may also tag the metadata according to community-developed data sensitivity tags. We will go over these features in more detail shortly.

1.1.7 Supporting Data Reuse

As the Arctic Data Center has grown in size, we have envisioned new capabilities to support efficient reuse of these valuable data. We are developing new tools both within the Arctic Data Center, and through collaborative projects with other researchers in the community. In house, some of the new features we plan to support include:

- Efficient submission and access to multi-terabyte datasets

- Data quality assessment services

- Automated workflows for building derived data products

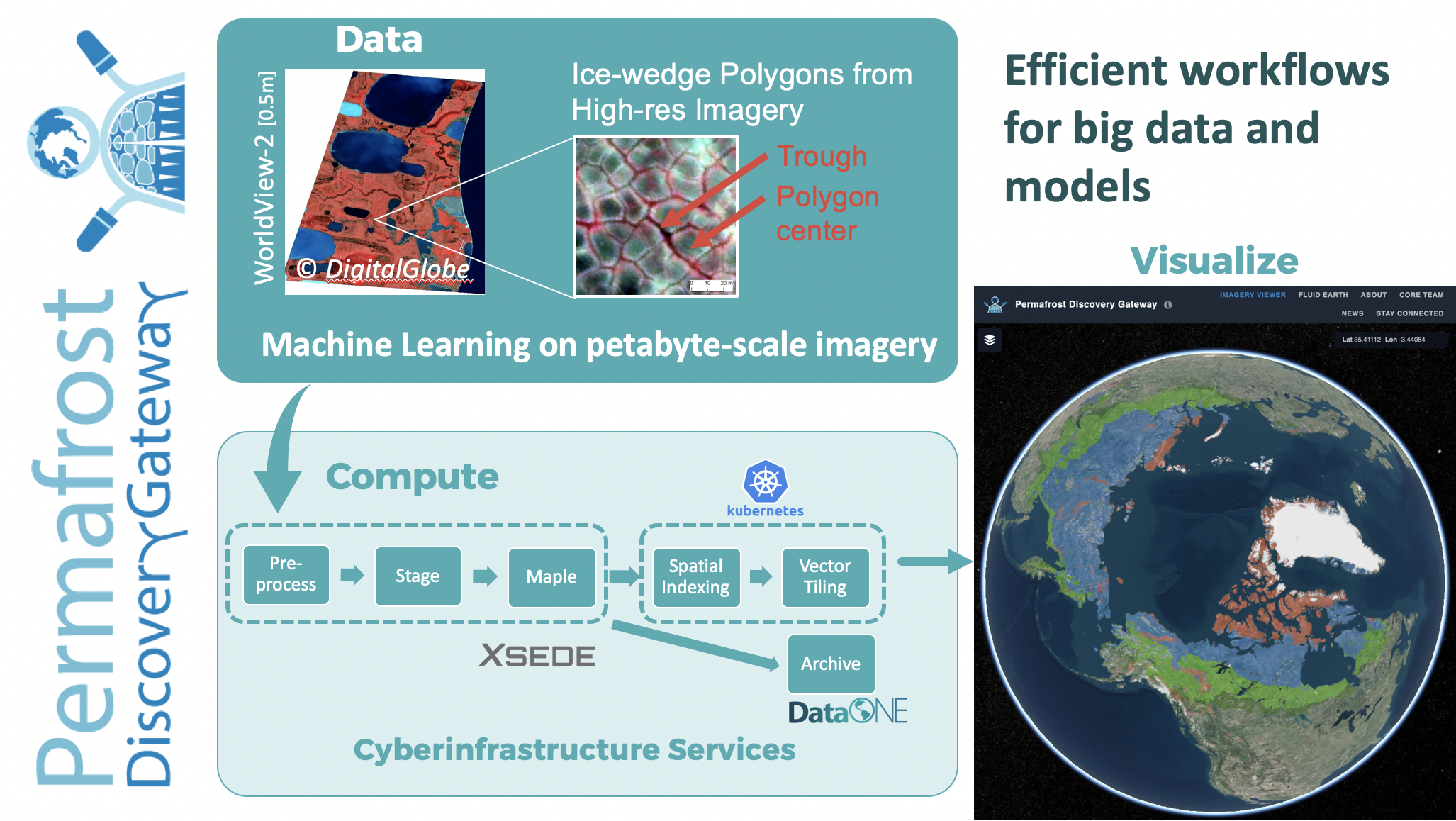

As a concrete example, we are collaborating with the Permafrost Discovery Gateway to build new capacity for computing and visualization at sub-meter spatial resolution across the global Arctic. The services we are building support workflows for pre-processing massive image data collections to prepare them for modeling, as well as automating machine learning and other computations across large data on distributed compute clusters, and finally post-processing the results to create viable pathways for results mapping and visualization at global scales.

1.1.8 Summary

All of the above information can be found on our website. If you need help, ask our support team at support@arcticdata.io or tweet us @arcticdatactr!

6,958 Datasets

2,584 Creators

71.4 TB

16,162 Users

1.2 Scalable Computing Topics

The overall objective of this course is to facilitate effective analysis and modeling of the massive data resources that we curate for the Arctic research community. We assume a baseline proficiency, and plan to build on that to better support time-consuming computations with multi-terabyte datasets.

Activities will include:

- Technical tutorials with a lot of hands-on time in Python

- Lectures and discussions on core concepts for scalable computing

- Semi-structured group projects to practice and cement key skills

Key topics will include:

- Arctic Data Center services and tools

- Overview and review of core computing concepts

- Fundamentals of concurrent programming

  - concurrent.futures

  - Parsl

  - Dask

- Fundamentals of working with big data and imagery

- Good practices in research software design for efficiency, reproducibility, and reuse

- Fundamentals of cloud computing

- Docker, containers, reproducibility, and more

As this is a lot to cover in a short survey course, we will break it down into fundamentals each day and provide ample time for hands-on practice.
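As a small preview of the concurrent programming topic, the sketch below uses `concurrent.futures` from the Python standard library to apply one function to several chunks of data at the same time. The `chunk_mean` function and the sample chunks are made up for illustration, and the course sessions may use different examples.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_mean(values):
    """Compute the mean of one chunk of numbers."""
    return sum(values) / len(values)


# Invented data, split into independent chunks that can be processed separately.
chunks = [
    [1, 2, 3],
    [10, 20, 30],
    [100, 200, 300],
]

# The executor hands each chunk to a worker thread; map() returns the
# results in the same order as the inputs, regardless of which finishes first.
with ThreadPoolExecutor(max_workers=3) as pool:
    means = list(pool.map(chunk_mean, chunks))

print(means)
```

Threads suit work that mostly waits on files or the network. For CPU-heavy work, `ProcessPoolExecutor` offers the same `map` interface with separate processes.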