3.1 Learning Objectives

In this lesson, you will learn:

- About open data archives, especially the Arctic Data Center

- What science metadata are and how they can be used

- How data and code can be documented and published in open data archives

- Web-based submission

3.2 Data sharing and preservation

3.2.1 Data repositories: built for data (and code)

- GitHub is not an archival location

- Examples of dedicated data repositories: KNB, Arctic Data Center, tDAR, EDI, Zenodo

- Rich metadata

- Archival in their mission

- Certification for repositories: https://www.coretrustseal.org/

- Data papers, e.g., Scientific Data

- List of data repositories: http://re3data.org

- Repository finder tool: https://repositoryfinder.datacite.org/

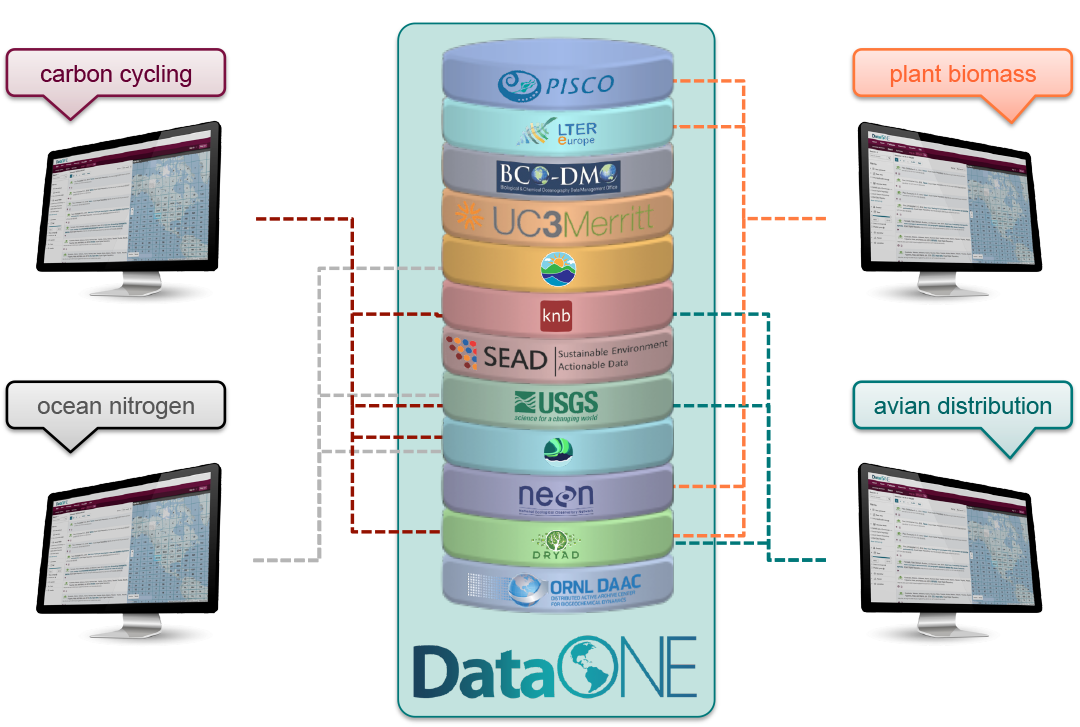

3.2.2 DataONE Federation

DataONE is a federation of dozens of data repositories that work together to make their systems interoperable and to provide a single unified search system that spans the repositories. DataONE aims to make it simpler for researchers to publish data to one of its member repositories, and then to discover and download that data for reuse in synthetic analyses.

DataONE can be searched on the web (https://search.dataone.org/), which effectively allows a single search to find data from the dozens of members of DataONE, rather than visiting each of the (currently 44!) repositories one at a time.
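The same federated search can also be scripted. The following is a minimal sketch that only builds a query URL and does not contact the network; the endpoint path and the Solr field names (`title`, `formatType`, `id`) are assumptions here and should be checked against the DataONE API documentation before use.

```python
from urllib.parse import urlencode

# Assumed base URL of the DataONE coordinating node's Solr query service.
DATAONE_SOLR = "https://cn.dataone.org/cn/v2/query/solr/"


def build_search_url(term, rows=10):
    """Return a query URL that searches metadata records for `term`."""
    params = {
        "q": f'title:"{term}" AND formatType:METADATA',  # assumed field names
        "fl": "id,title",  # fields to return for each hit
        "rows": rows,      # maximum number of results
        "wt": "json",      # ask for JSON output
    }
    return DATAONE_SOLR + "?" + urlencode(params)


url = build_search_url("sockeye salmon")
print(url)
```

Fetching the resulting URL with any HTTP client would then return matches from all member repositories in one response, assuming the endpoint behaves as described.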

3.3 Metadata

Metadata are documentation describing the content, context, and structure of data to enable future interpretation and reuse of the data. Generally, metadata describe who collected the data, what data were collected, when and where they were collected, and why they were collected.

For consistency, metadata are typically structured following metadata content standards such as the Ecological Metadata Language (EML). For example, here’s an excerpt of the metadata for a sockeye salmon dataset:

<?xml version="1.0" encoding="UTF-8"?>

<eml:eml packageId="df35d.442.6" system="knb"

xmlns:eml="eml://ecoinformatics.org/eml-2.1.1">

<dataset>

<title>Improving Preseason Forecasts of Sockeye Salmon Runs through

Salmon Smolt Monitoring in Kenai River, Alaska: 2005 - 2007</title>

<creator id="1385594069457">

<individualName>

<givenName>Mark</givenName>

<surName>Willette</surName>

</individualName>

<organizationName>Alaska Department of Fish and Game</organizationName>

<positionName>Fishery Biologist</positionName>

<address>

<city>Soldotna</city>

<administrativeArea>Alaska</administrativeArea>

<country>USA</country>

</address>

<phone phonetype="voice">(907)260-2911</phone>

<electronicMailAddress>mark.willette@alaska.gov</electronicMailAddress>

</creator>

...

</dataset>

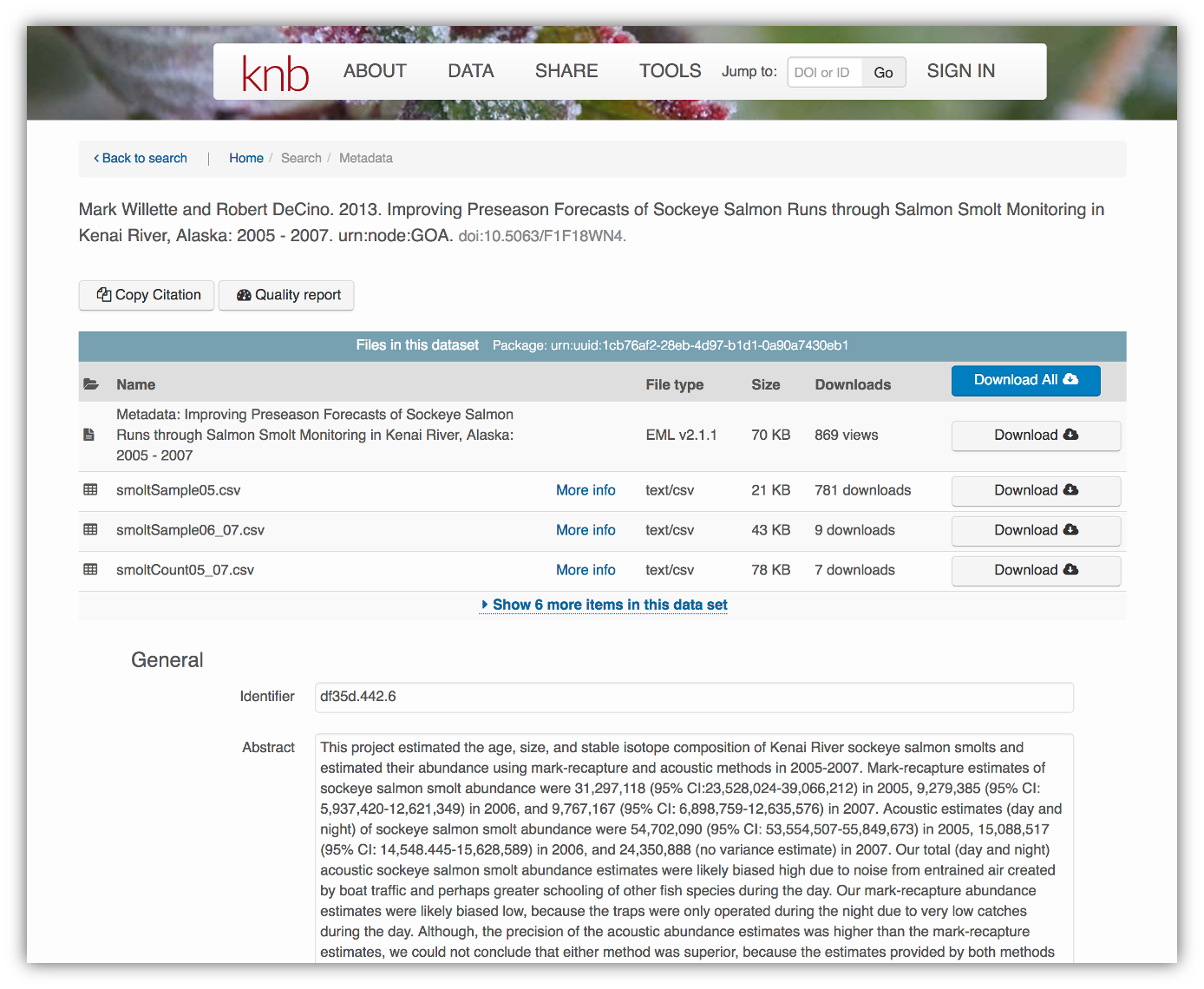

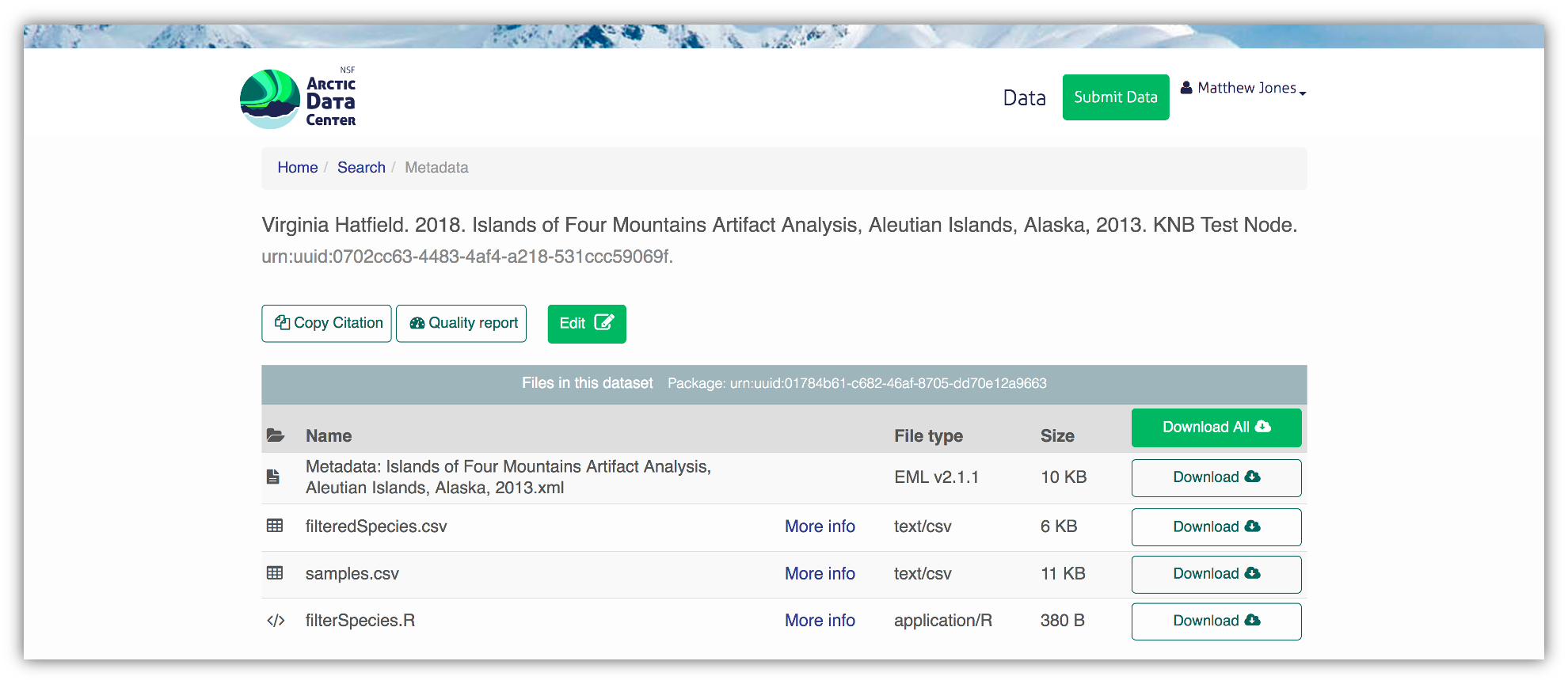

</eml:eml>That same metadata document can be converted to HTML format and displayed in a much more readable form on the web: https://knb.ecoinformatics.org/#view/doi:10.5063/F1F18WN4

And, as you can see, the whole dataset or its components can be downloaded and reused.

And, as you can see, the whole dataset or its components can be downloaded and reused.

Also note that the repository tracks how many times each file has been downloaded, which gives great feedback to researchers on the activity for their published data.
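Because EML is structured XML, the same fields can be read by a program as well as by a person. As a minimal sketch, the snippet below parses a trimmed copy of the excerpt above with Python's standard library; the elided parts of the record are simply left out, and the variable names are illustrative.

```python
import xml.etree.ElementTree as ET

# A trimmed copy of the EML excerpt shown above.
EML = """<?xml version="1.0" encoding="UTF-8"?>
<eml:eml packageId="df35d.442.6" system="knb"
    xmlns:eml="eml://ecoinformatics.org/eml-2.1.1">
  <dataset>
    <title>Improving Preseason Forecasts of Sockeye Salmon Runs through
      Salmon Smolt Monitoring in Kenai River, Alaska: 2005 - 2007</title>
    <creator id="1385594069457">
      <individualName>
        <givenName>Mark</givenName>
        <surName>Willette</surName>
      </individualName>
      <organizationName>Alaska Department of Fish and Game</organizationName>
    </creator>
  </dataset>
</eml:eml>"""

# Parse from bytes so the encoding declaration is honored.
root = ET.fromstring(EML.encode("utf-8"))
dataset = root.find("dataset")

package_id = root.get("packageId")
# Collapse the line break and indentation inside the title element.
title = " ".join(dataset.findtext("title").split())
creator = dataset.find("creator")
creator_name = " ".join([
    creator.findtext("individualName/givenName"),
    creator.findtext("individualName/surName"),
])

print(package_id)
print(title)
print(creator_name)
```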

3.3.1 Overall Guidelines

Another way to think about metadata is to answer the following questions with the documentation:

- What was measured?

- Who measured it?

- When was it measured?

- Where was it measured?

- How was it measured?

- How is the data structured?

- Why was the data collected?

- Who should get credit for this data (researcher AND funding agency)?

- How can this data be reused (licensing)?

3.3.2 Bibliographic Guidelines

The details that will help your data be cited correctly are:

- Global identifier like a digital object identifier (DOI)

- Descriptive title that includes information about the topic, the geographic location, the dates, and if applicable, the scale of the data

- Descriptive abstract that serves as a brief overview of the specific contents and purpose of the data package

- Funding information like the award number and the sponsor

- People and organizations like the creator of the dataset (i.e. who should be cited), the person to contact about the dataset (if different than the creator), and the contributors to the dataset

3.3.3 Discovery Guidelines

The details that will help your data be discovered correctly are:

- Geospatial coverage of the data, including the field and laboratory sampling locations, place names and precise coordinates

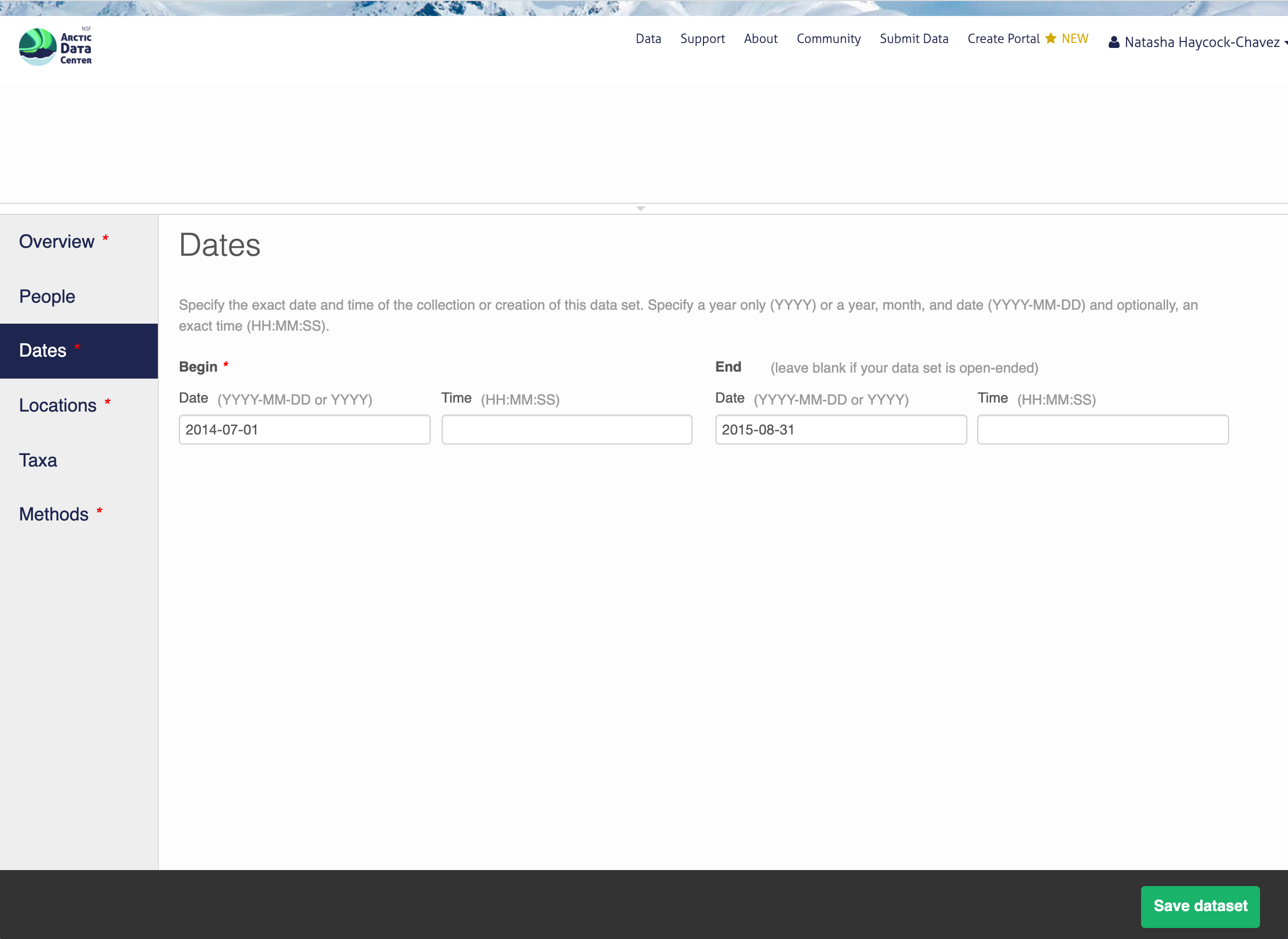

- Temporal coverage of the data, including when the measurements were made and what time period (i.e., the calendar time or the geologic time) the measurements apply to

- Taxonomic coverage of the data, including what species were measured and what taxonomy standards and procedures were followed

- Any other contextual information as needed

3.3.4 Interpretation Guidelines

The details that will help your data be interpreted correctly are:

- Collection methods for both field and laboratory data, the full experimental and project design, and how the data in the dataset fit into the overall project

- Processing methods for both field and laboratory samples

- All sample quality control procedures

- Provenance information to support your analysis and modelling methods

- Information about the hardware and software used to process your data, including the make, model, and version

- Computing quality control procedures like testing or code review

3.3.5 Data Structure and Contents

- Everything needs a description: the data model, the data objects (like tables, images, matrices, spatial layers, etc), and the variables all need to be described so that there is no room for misinterpretation.

- Variable information includes the definition of a variable, a standardized unit of measurement, definitions of any coded values (e.g., 0 = not collected), and any missing value codes (e.g., 999 = NA).
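To see why documented codes matter, here is a minimal sketch of how variable-level metadata lets a program translate raw values back into their meaning. The variable name, its definition, and the code `1 = collected` are hypothetical; only the `0 = not collected` and `999` conventions come from the examples above.

```python
# Hypothetical variable-level metadata for one column of a data table.
attribute = {
    "name": "sample_status",
    "definition": "Whether a water sample was collected at the site visit",
    "codes": {0: "not collected", 1: "collected"},
    "missing_value_codes": {999},
}


def decode(value, attr):
    """Translate a raw value into its documented meaning."""
    if value in attr["missing_value_codes"]:
        return None  # documented missing value
    # Values without a documented code are returned unchanged.
    return attr["codes"].get(value, value)


raw = [1, 0, 999, 1]
decoded = [decode(v, attribute) for v in raw]
print(decoded)
```

Without the metadata, a reader could easily treat 999 as a real measurement.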

Not only is this information helpful to you and any other researcher in the future using your data, but it is also helpful to search engines. The semantics of your dataset are crucial to ensure your data is both discoverable by others and interoperable (that is, reusable).

For example, if you search for the character string “carbon dioxide flux” in a data repository, not all relevant results will be returned, because vocabulary conventions vary across disciplines (e.g., “CO2 flux” instead of “carbon dioxide flux”); only datasets containing the exact words “carbon dioxide flux” are returned. With correct semantic annotation of the variables, a dataset that includes information about carbon dioxide flux but calls it “CO2 flux” would also be included in that search.
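The difference between a plain text search and a search over semantic annotations can be sketched as follows. This is a toy illustration, not how any particular repository implements search; the concept identifier, the label vocabulary, and the three datasets are all hypothetical.

```python
# Hypothetical concept identifier and label vocabulary; a real repository
# would draw these from a published ontology.
CONCEPT_LABELS = {
    "concept:carbon_dioxide_flux": {"carbon dioxide flux", "co2 flux"},
}

# Hypothetical datasets; only B carries a semantic annotation.
datasets = [
    {"id": "A", "variables": ["carbon dioxide flux"], "annotations": []},
    {"id": "B", "variables": ["CO2 flux"],
     "annotations": ["concept:carbon_dioxide_flux"]},
    {"id": "C", "variables": ["air temperature"], "annotations": []},
]


def text_search(query):
    """Return ids of datasets whose variable names contain the query string."""
    q = query.lower()
    return [d["id"] for d in datasets
            if any(q in v.lower() for v in d["variables"])]


def semantic_search(query):
    """Also return datasets annotated with a concept that the query names."""
    q = query.lower()
    concepts = {c for c, labels in CONCEPT_LABELS.items() if q in labels}
    hits = set(text_search(query))
    hits |= {d["id"] for d in datasets if concepts & set(d["annotations"])}
    return sorted(hits)


print(text_search("carbon dioxide flux"))
print(semantic_search("carbon dioxide flux"))
```

The text search misses dataset B, while the annotation-aware search finds it because both labels point to the same concept.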

3.3.6 Rights and Attribution

Correctly assigning a way for your datasets to be cited and reused is the last piece of a complete metadata document. This section sets the scientific rights and expectations for the future on your data, like:

- Citation format to be used when giving credit for the data

- Attribution expectations for the dataset

- Reuse rights, which describe who may use the data and for what purpose

- Redistribution rights, which describe who may copy and redistribute the metadata and the data

- Legal terms and conditions like how the data are licensed for reuse.

3.3.7 Metadata Standards

So, how does a computer organize all this information? There are a number of metadata standards that make your metadata machine readable and therefore easier for data curators to publish your data.

- Ecological Metadata Language (EML)

- Geospatial Metadata Standards (ISO 19115 and ISO 19139)

- Dublin Core

- Darwin Core

- PREservation Metadata: Implementation Strategies (PREMIS)

- Metadata Encoding and Transmission Standard (METS)

Note this is not an exhaustive list.

3.3.8 Data Identifiers

Many journals require a DOI (a digital object identifier) be assigned to the published data before the paper can be accepted for publication. The reason for that is so that the data can easily be found and easily linked to.

Some data repositories assign a DOI for each dataset you publish on their repository. But, if you need to update the datasets, check the policy of the data repository. Some repositories assign a new DOI after you update the dataset. If this is the case, researchers should cite the exact version of the dataset that they used in their analysis, even if there is a newer version of the dataset available.
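A DOI is easy to find and link to because it can be turned into a resolvable URL through https://doi.org/. As a small sketch, the hypothetical helper below normalizes the `doi:` form used for this lesson's example dataset into such a link.

```python
def doi_to_url(identifier):
    """Turn 'doi:10.5063/F1F18WN4' (or a bare DOI) into a resolver URL."""
    doi = identifier.strip()
    if doi.lower().startswith("doi:"):
        doi = doi[4:]  # drop the 'doi:' scheme prefix
    if not doi.startswith("10."):
        # Every DOI begins with the '10.' directory indicator.
        raise ValueError(f"not a DOI: {identifier!r}")
    return "https://doi.org/" + doi


print(doi_to_url("doi:10.5063/F1F18WN4"))
```

Because each published version may receive its own DOI, citing the exact string you used keeps the link pointing at the version you analyzed.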

3.3.9 Data Citation

Researchers should get in the habit of citing the data that they use (even if it’s their own data!) in each publication that uses that data.

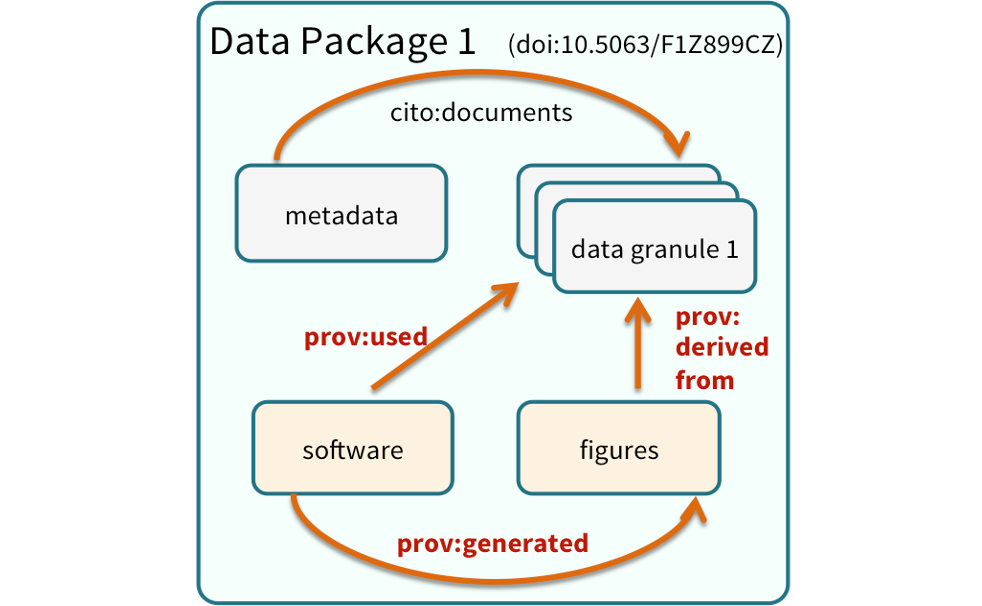

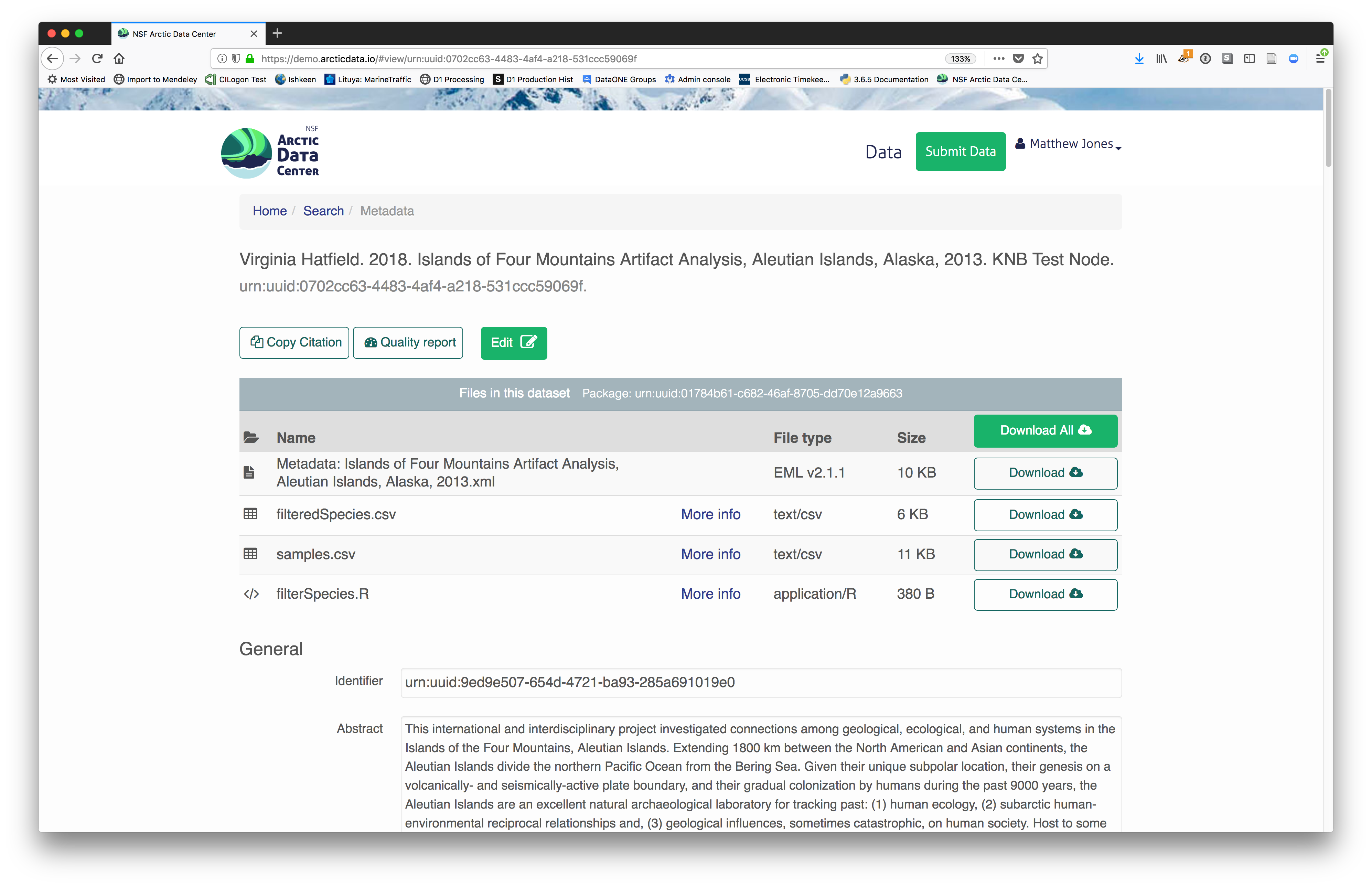

3.4 Structure of a data package

Note that the dataset above lists a collection of files that are contained within the dataset. We define a data package as a scientifically useful collection of data and metadata that a researcher wants to preserve. Sometimes a data package represents all of the data from a particular experiment, while at other times it might be all of the data from a grant, or on a topic, or associated with a paper. Whatever the extent, we define a data package as having one or more data files, software files, and other scientific products such as graphs and images, all tied together with a descriptive metadata document.

These data repositories all assign a unique identifier to every version of every data file, similarly to how it works with source code commits in GitHub. Those identifiers usually take one of two forms. A DOI identifier is often assigned to the metadata and becomes the publicly citable identifier for the package. Each of the other files gets a global identifier, often a UUID that is globally unique. In the example above, the package can be cited with the DOI doi:10.5063/F1F18WN4, and each of the individual files has its own identifier as well.
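One way to picture this structure is as a small data model: a metadata document carrying the citable DOI, plus any number of files that each have their own identifier. The sketch below is only an illustration of that idea, not a repository's actual implementation; the class names and file names are hypothetical.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class DataFile:
    name: str
    # Each file gets its own globally unique identifier (a UUID here).
    identifier: str = field(
        default_factory=lambda: f"urn:uuid:{uuid.uuid4()}")


@dataclass
class DataPackage:
    doi: str            # citable identifier attached to the metadata
    metadata_file: str  # the descriptive metadata document
    files: list = field(default_factory=list)

    def add_file(self, name):
        """Register a data, code, or image file in the package."""
        data_file = DataFile(name)
        self.files.append(data_file)
        return data_file


# File names below are hypothetical.
package = DataPackage(doi="doi:10.5063/F1F18WN4", metadata_file="metadata.xml")
package.add_file("observations.csv")
package.add_file("analysis.R")

for f in package.files:
    print(f.name, f.identifier)
```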

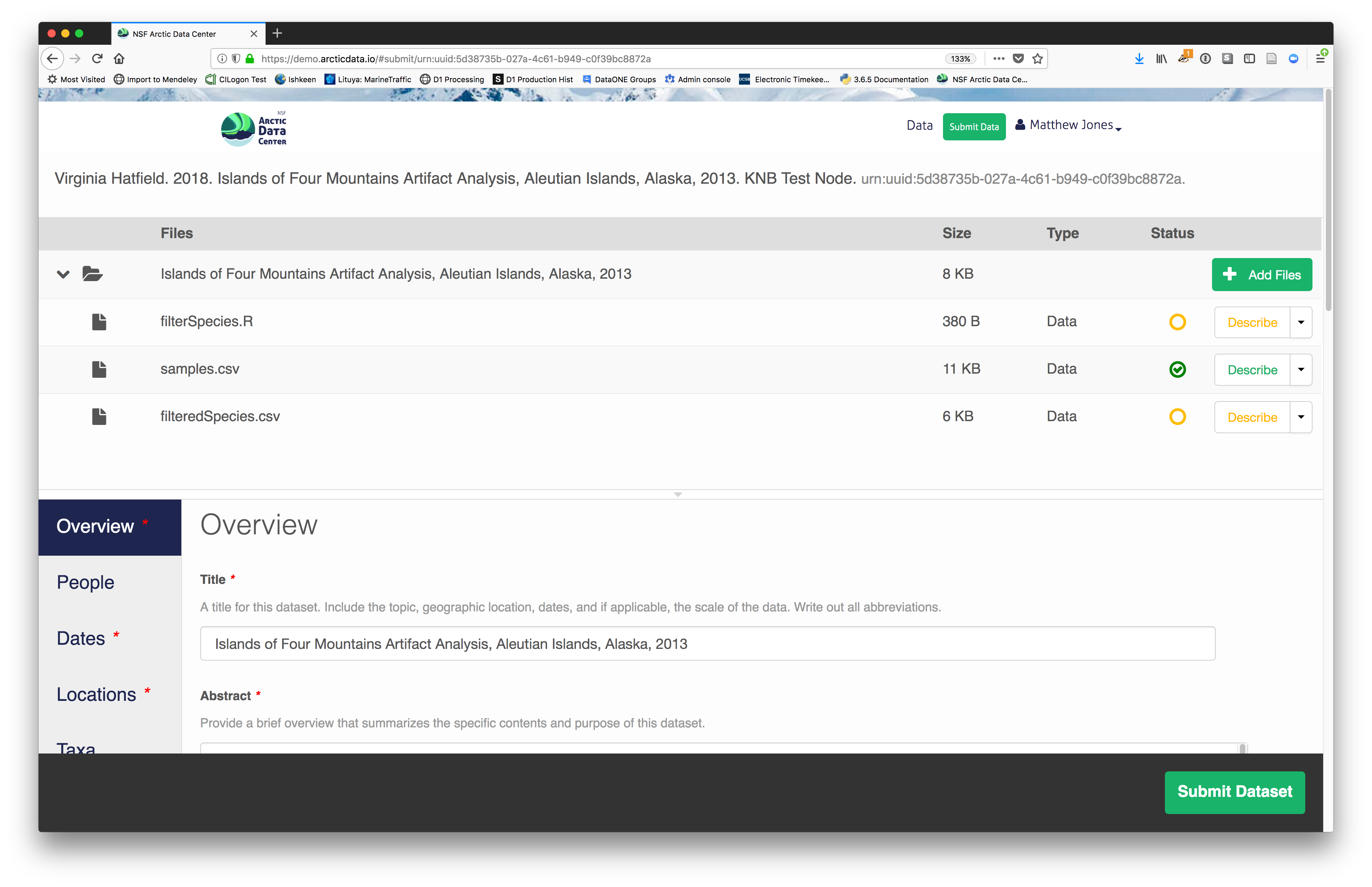

3.5 Publishing data from the web

Each data repository tends to have its own mechanism for submitting data and providing metadata. With repositories like the KNB Data Repository and the Arctic Data Center, we provide some easy to use web forms for editing and submitting a data package. This section provides a brief overview of some highlights within the data submission process, in advance of a more comprehensive hands-on activity.

ORCIDs

We will walk through web submission on https://demo.arcticdata.io, and start by logging in with an ORCID account. ORCID provides a common account for sharing scholarly data, so if you don’t have one, you can create one when you are redirected to ORCID from the Sign In button.

ORCID is a non-profit organization made up of research institutions, funders, publishers and other stakeholders in the research space. ORCID stands for Open Researcher and Contributor ID. The purpose of ORCID is to give researchers a unique identifier which then helps highlight and give credit to researchers for their work. If you click on someone’s ORCID, their work and research contributions will show up (as long as the researcher used ORCID to publish or post their work).

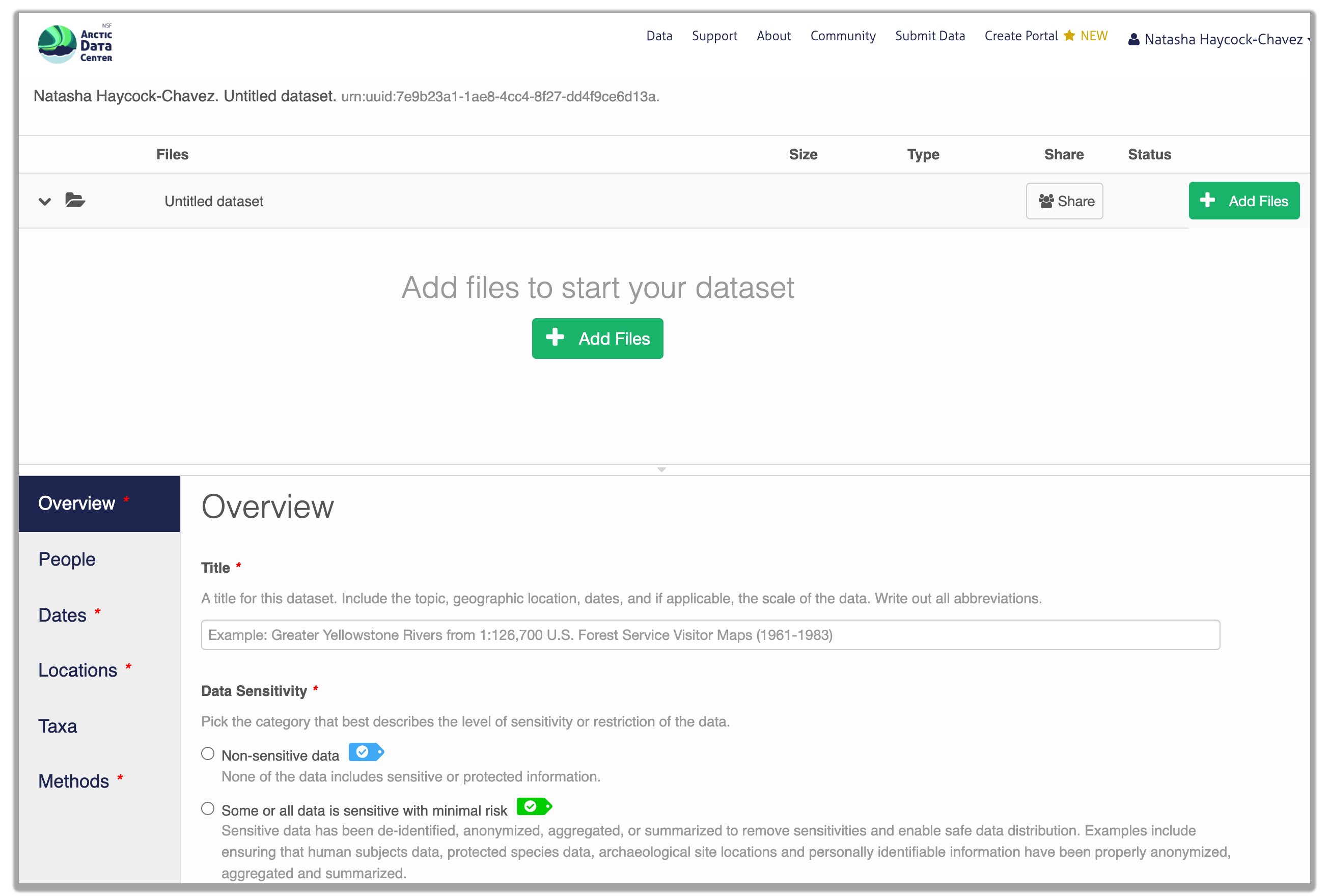

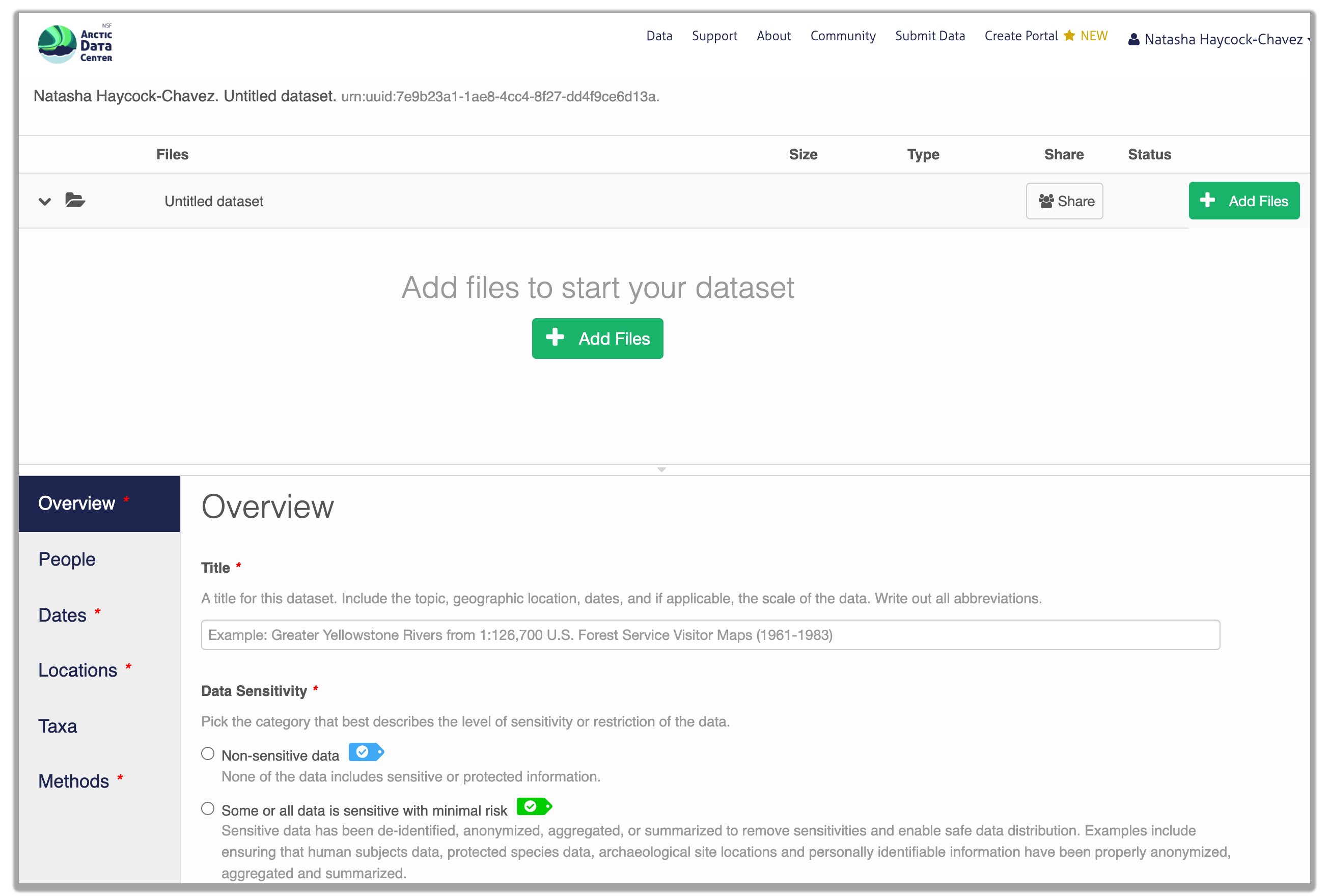

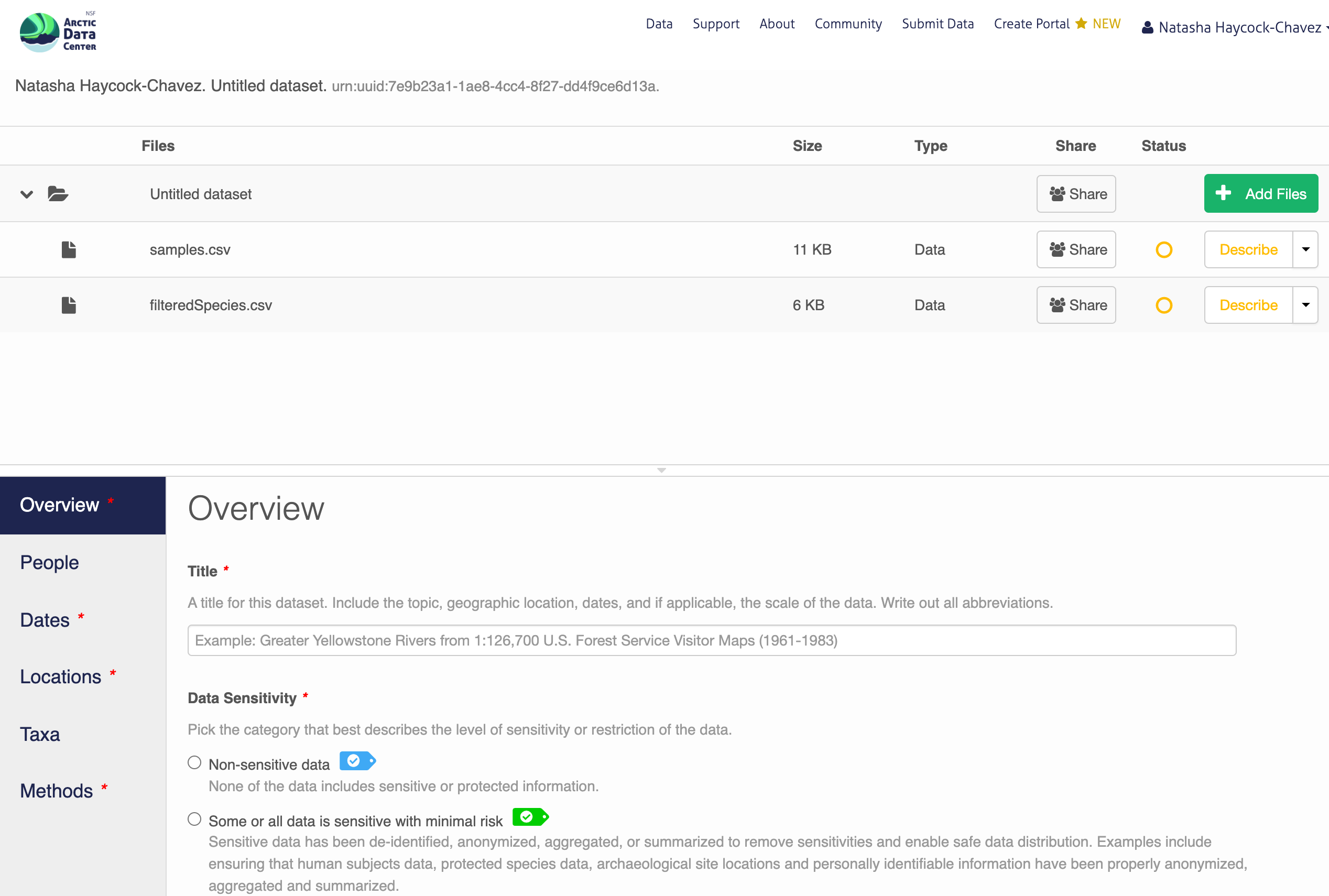

After signing in, you can access the data submission form using the Submit button. Once on the form, upload your data files and follow the prompts to provide the required metadata.

Sensitive Data Handling

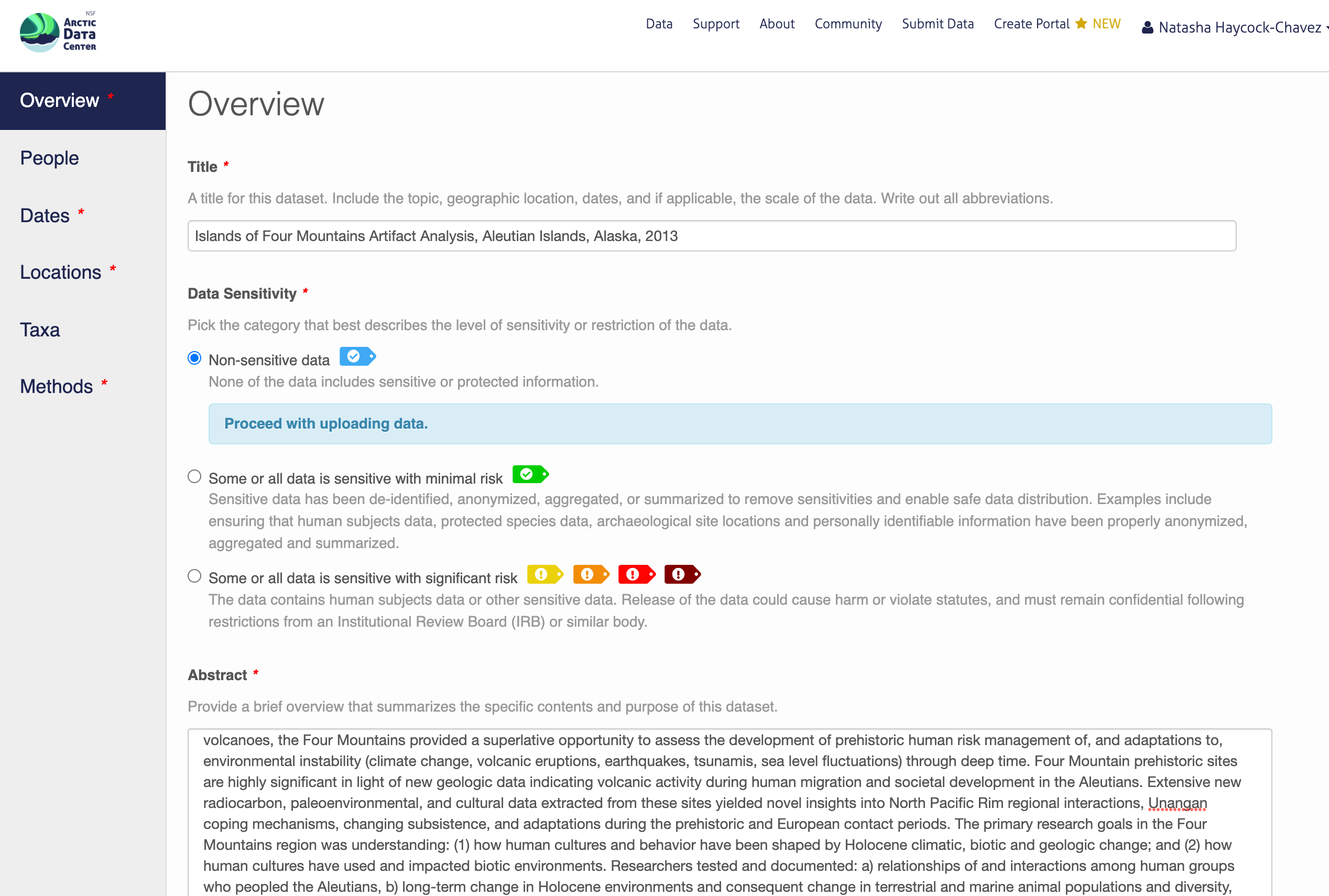

Underneath the Title field, you will see a section titled “Data Sensitivity”. As the primary repository for the NSF Office of Polar Programs Arctic Section, the Arctic Data Center accepts data from all disciplines. This includes data from social science research that may include sensitive data, meaning data that contains personal or identifiable information. Sharing sensitive data can pose challenges to researchers; however, sharing metadata or anonymized data contributes to discovery, supports open science principles, and helps reduce duplicate research efforts.

To help mitigate the challenges of sharing sensitive data, the Arctic Data Center has added new features to the data submission process influenced by the CARE Principles for Indigenous Data Governance (Collective benefit, Authority to control, Responsibility, Ethics). Researchers submitting data now have the option to choose between varying levels of sensitivity that best represent their dataset. Data submitters can select one of three sensitivity level data tags that best fit their data and/or metadata. Based on the level of sensitivity, guidelines for submission are provided. The data tags range from non-confidential information to maximally sensitive information.

The purpose of these tags is to ethically contribute to open science by making the richest set of data available for future research. The first tag, “non-sensitive data”, represents data that does not contain potentially harmful information, and can be submitted without further precaution. Data or metadata that is “sensitive with minimal risk” means that either the sensitive data has been anonymized and shared with consent, or that publishing it will not cause any harm. The third option, “some or all data is sensitive with significant risk”, represents data that contains potentially harmful or identifiable information, and the data submitter will be asked to hold off submitting the data until further notice. In the case where sharing anonymized sensitive data is not possible due to ethical considerations, sharing anonymized metadata still aligns with FAIR (Findable, Accessible, Interoperable, Reusable) principles because it increases the visibility of the research, which helps reduce duplicate research efforts. Hence, it is important to share metadata, and to publish or share sensitive data only when consent from participants is given, in alignment with the CARE principles and any IRB requirements.

You will continue to be prompted to enter information about your research, and in doing so, create your metadata record. We recommend taking your time because the richer your metadata is, the more easily reproducible and usable your data and research will be for both your future self and other researchers. Detailed instructions are provided below for the hands-on activity.

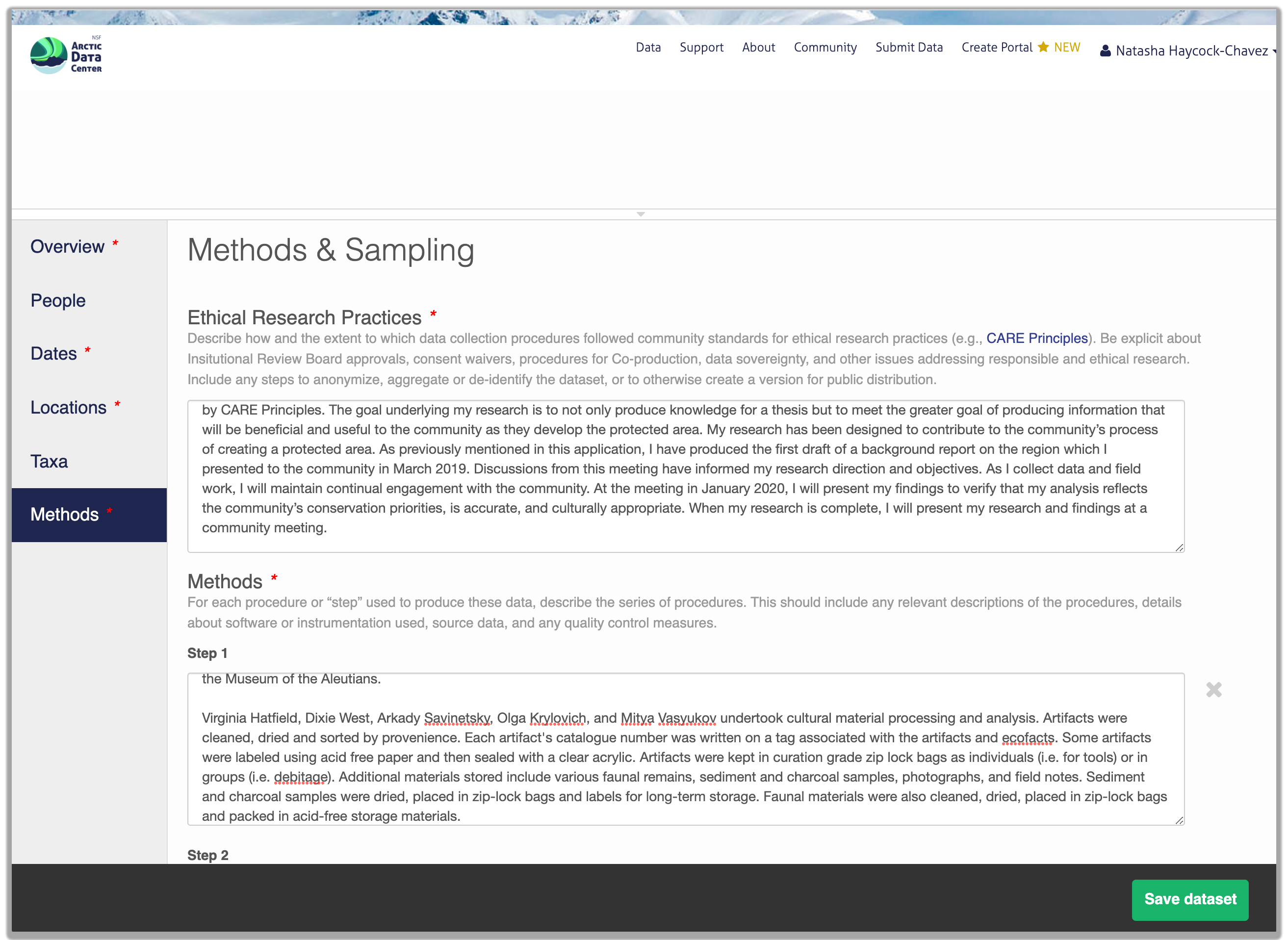

Research Methods

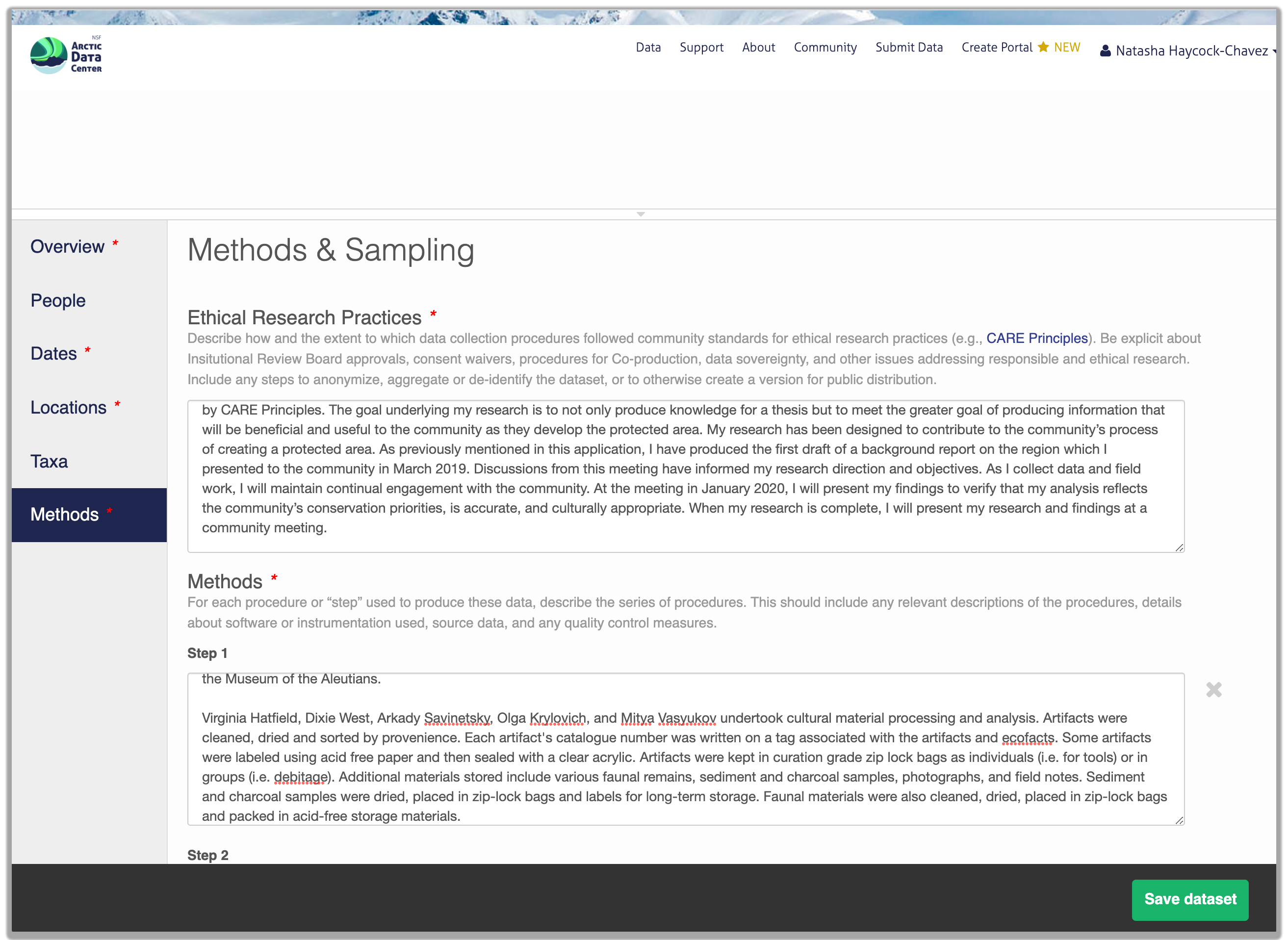

Methods are critical to accurate interpretation and reuse of your data. The editor allows you to add multiple different methods sections, so that you can include details of sampling methods, experimental design, quality assurance procedures, and/or computational techniques and software.

As part of a recent update, researchers are now asked to describe the ethical data practices used throughout their research. The information provided will be visible as part of the metadata record. This feature was added to the data submission process to encourage transparency in data ethics. Transparency in data ethics is a vital part of open science and sharing ethical practices encourages deeper discussion about data reuse and ethics.

We encourage you to think about the ethical data and research practices that were utilized during your research, even if they don’t seem obvious at first.

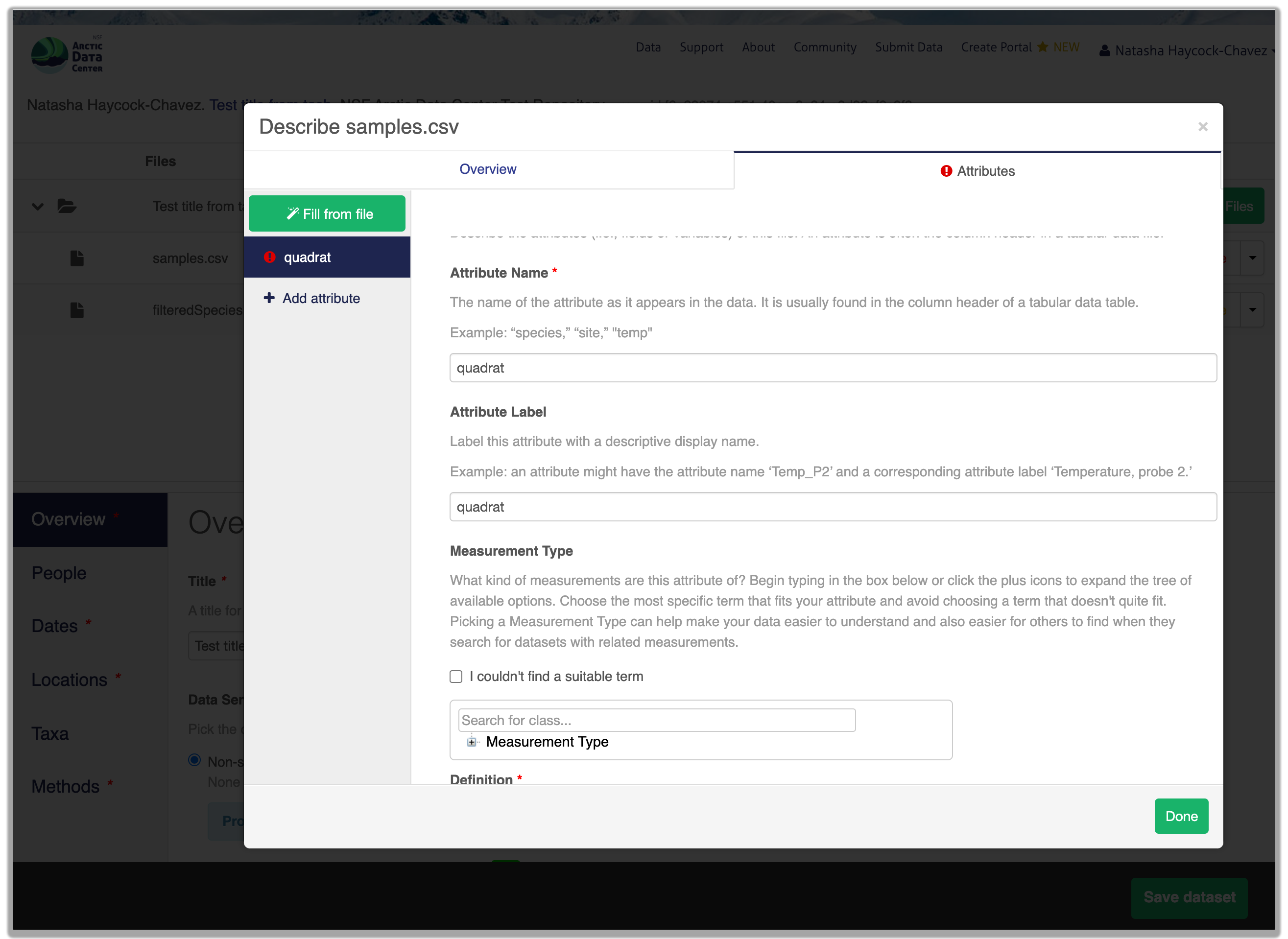

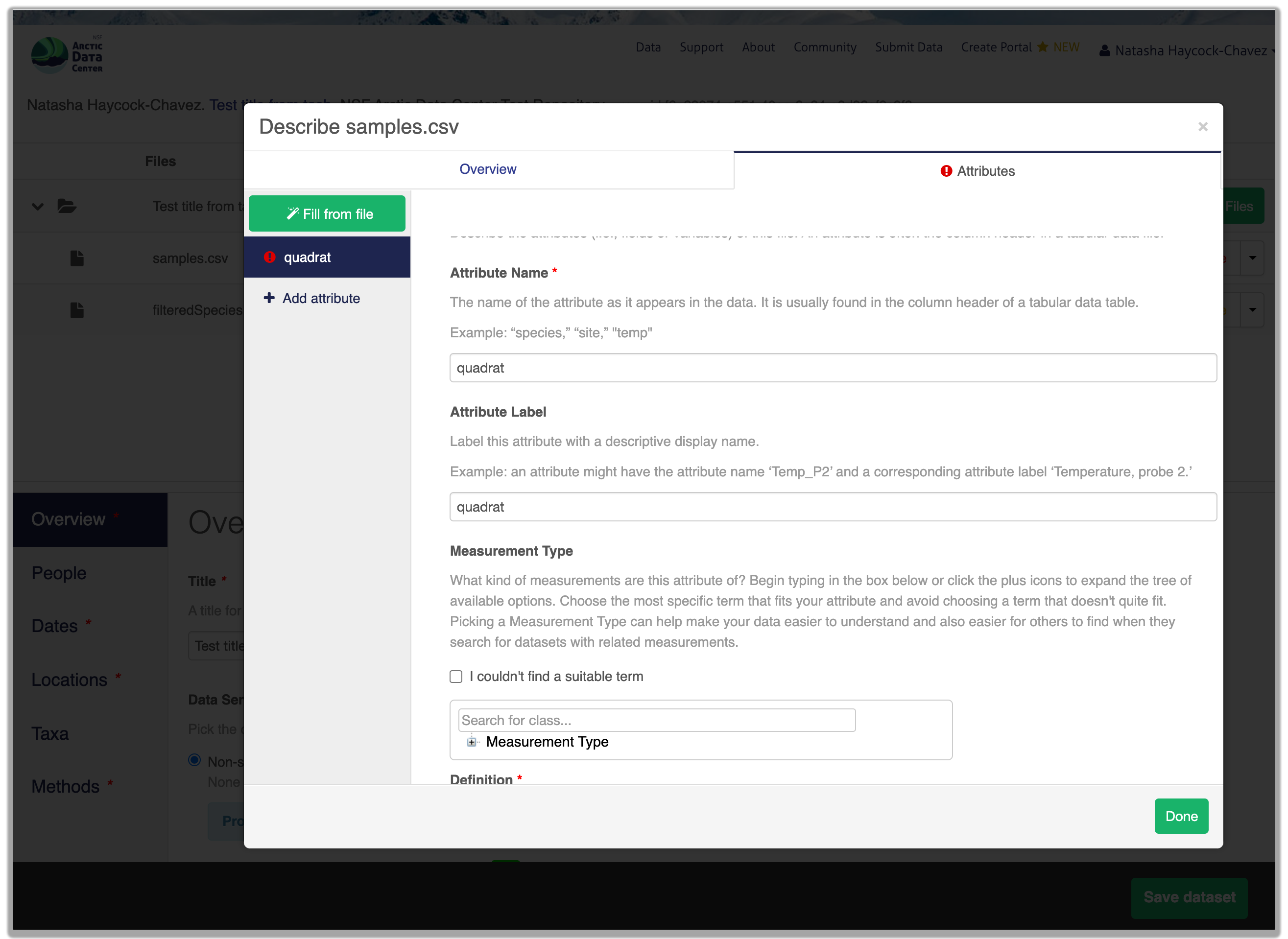

File and Variable Level Metadata

In addition to providing information about (or a description of) your dataset, you can also provide information about each file and the variables within the file. By clicking the “Describe” button, you can add comprehensive information about each of your measurements, such as the name, measurement type, and standard units.

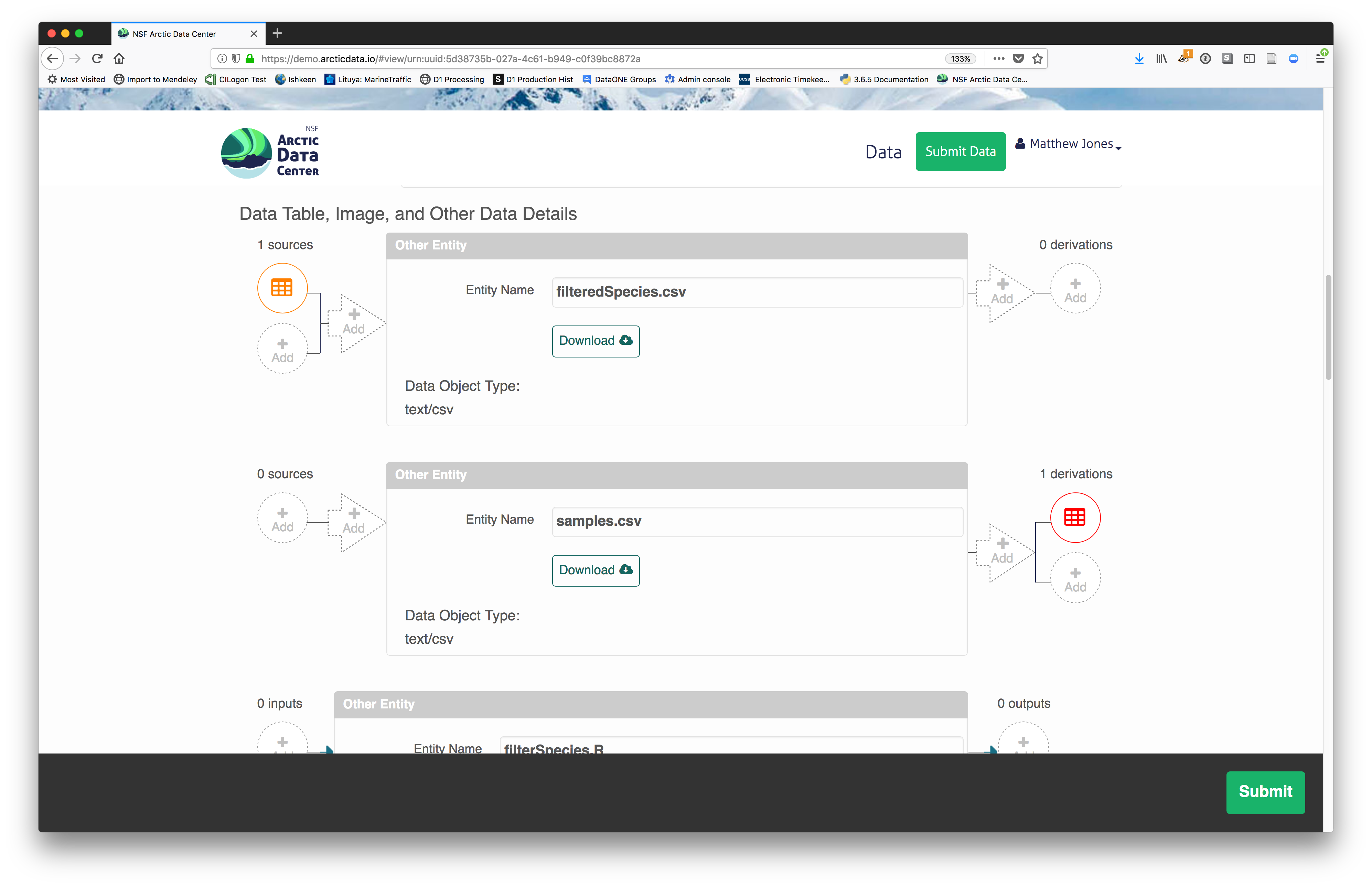

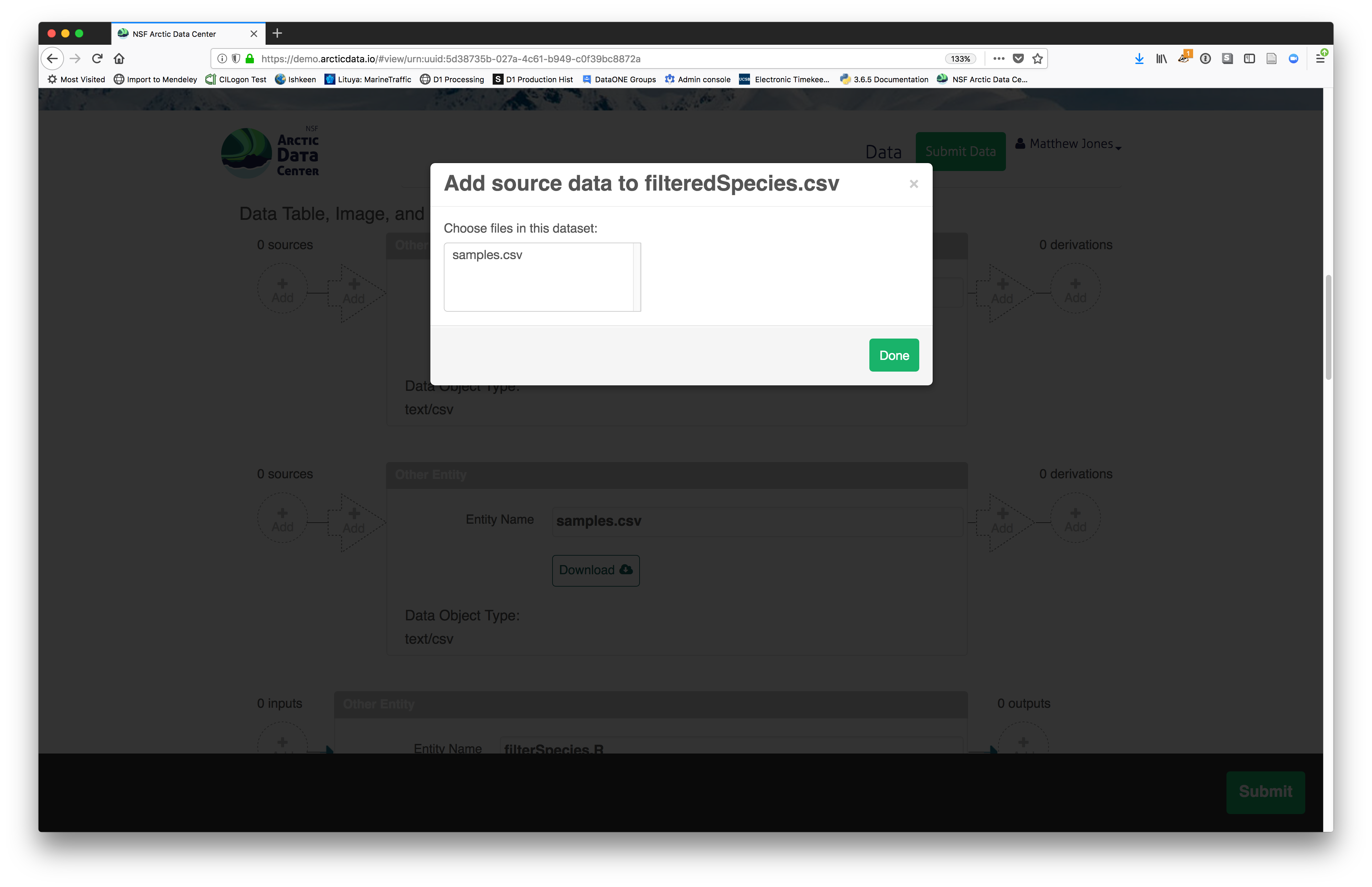

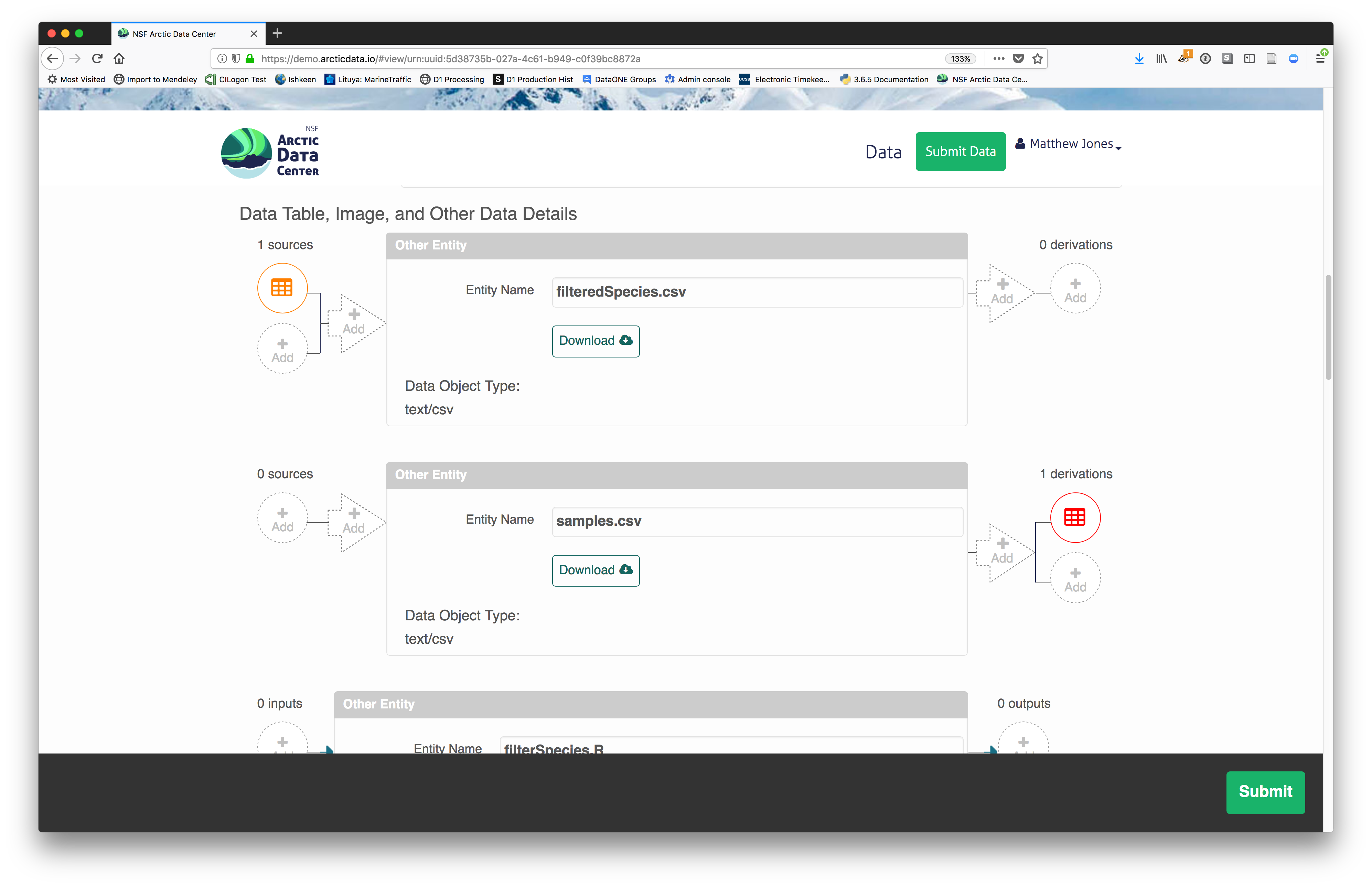

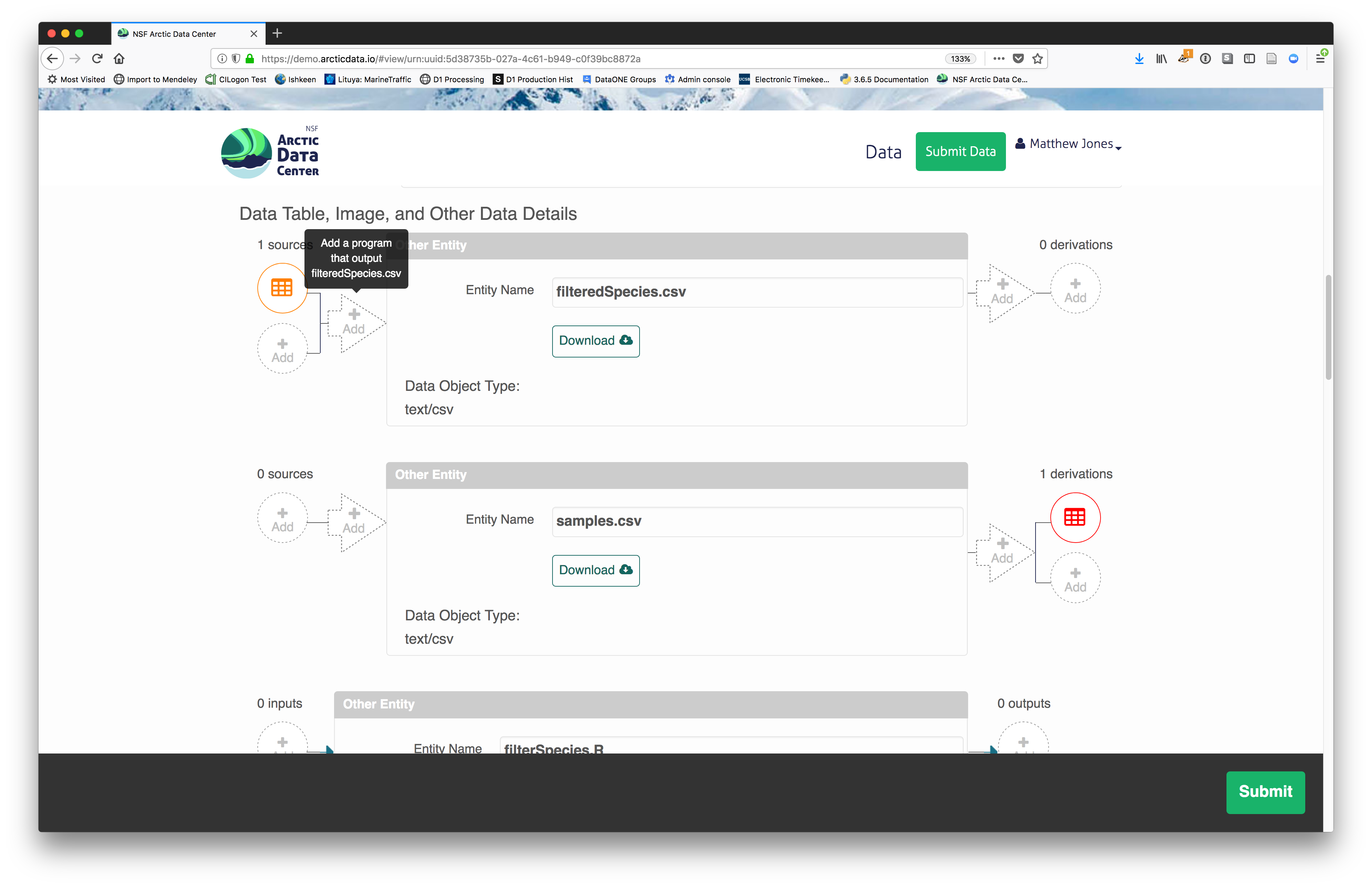

Provenance

The data submission system also lets you provide provenance information, which describes the relationships between package elements. After completing your data description and submitting your dataset, you will see the option to add source data and code, and derived data and code, when viewing your dataset.

These are just some of the features and functionality of the Arctic Data Center submission system and we will go through them in more detail below as part of a hands-on activity.

3.5.1 Download the data to be used for the tutorial

I’ve already uploaded the test data package, and so you can access the data here:

- https://demo.arcticdata.io/#view/urn:uuid:0702cc63-4483-4af4-a218-531ccc59069f

Grab both CSV files, and the R script, and store them in a convenient folder.

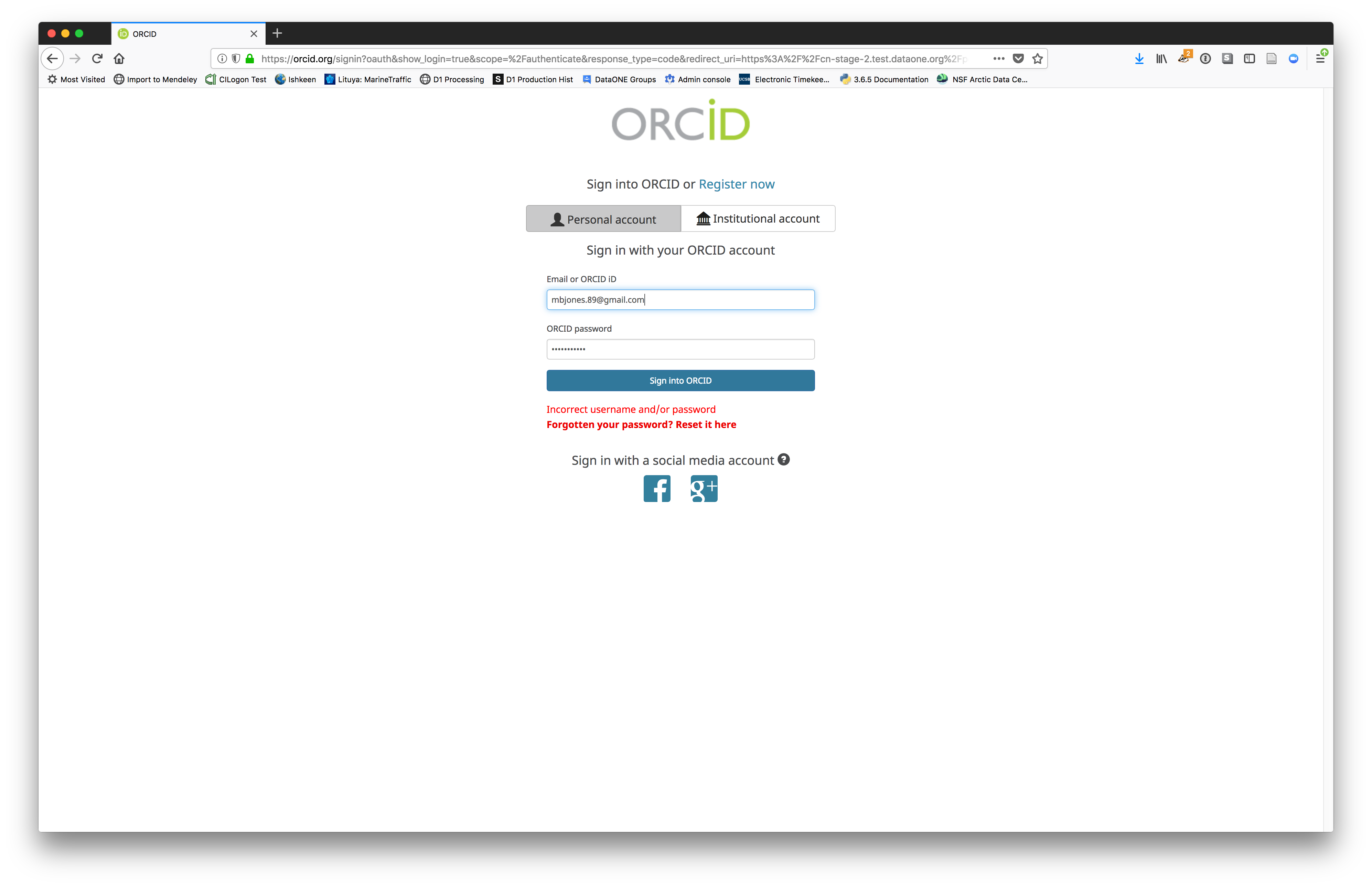

3.5.2 Login via ORCID

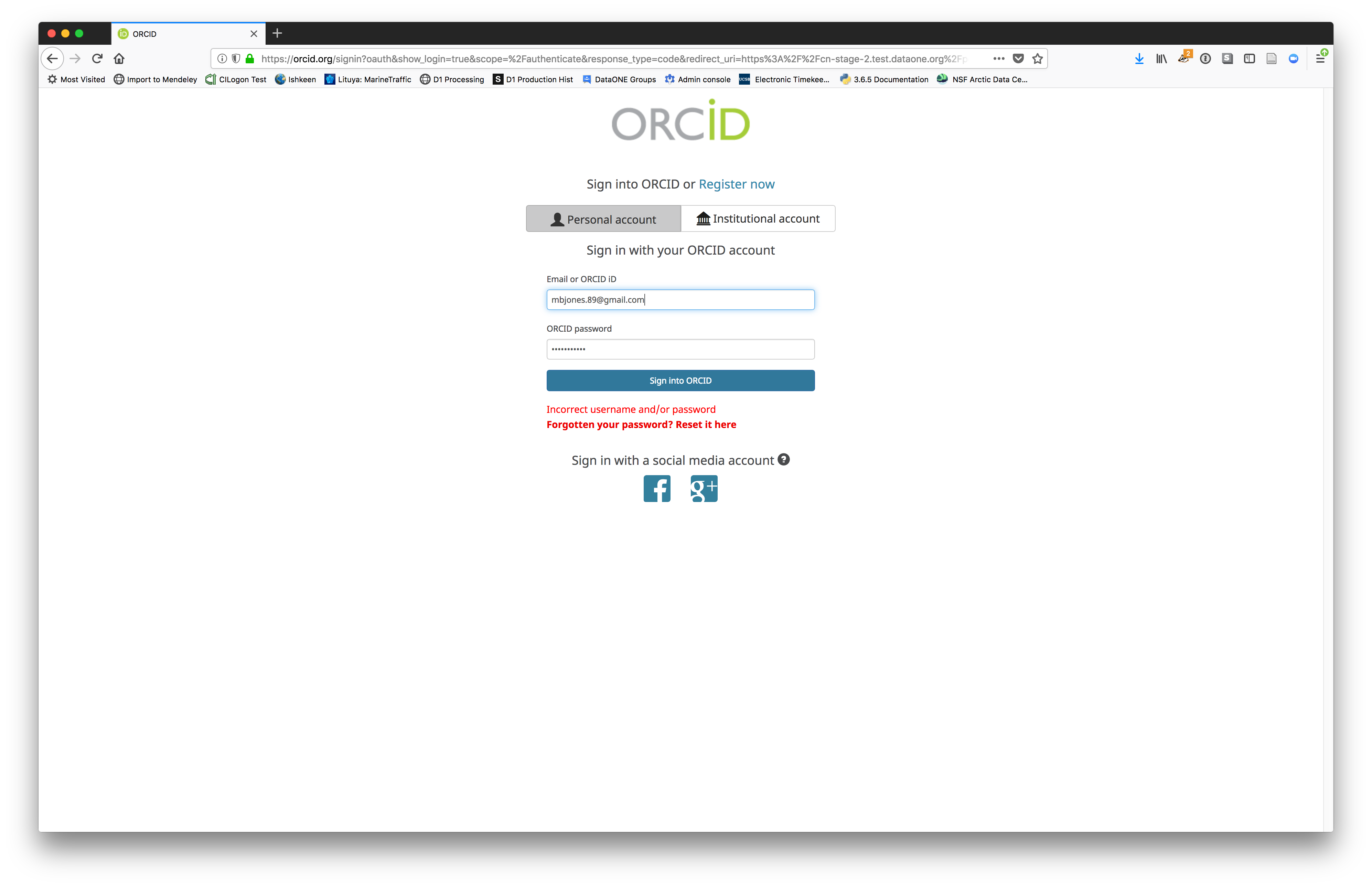

We will walk through web submission on https://demo.arcticdata.io, and start by logging in with an ORCID account. ORCID provides a common account for sharing scholarly data, so if you don’t have one, you can create one when you are redirected to ORCID from the Sign In button.

When you sign in, you will be redirected to orcid.org, where you can either provide your existing ORCID credentials or create a new account. ORCID provides multiple ways to login, including using your email address, an institutional login from many universities, and/or a login from social media account providers. Choose the one that is best suited to your use as a scholarly record, such as your university or agency login.

3.5.3 Create and submit the dataset

After signing in, you can access the data submission form using the Submit button. Once on the form, upload your data files and follow the prompts to provide the required metadata.

3.5.3.1 Click Add Files to choose the data files for your package

You can select multiple files at a time to efficiently upload many files.

The files will upload showing a progress indicator. You can continue editing metadata while they upload.

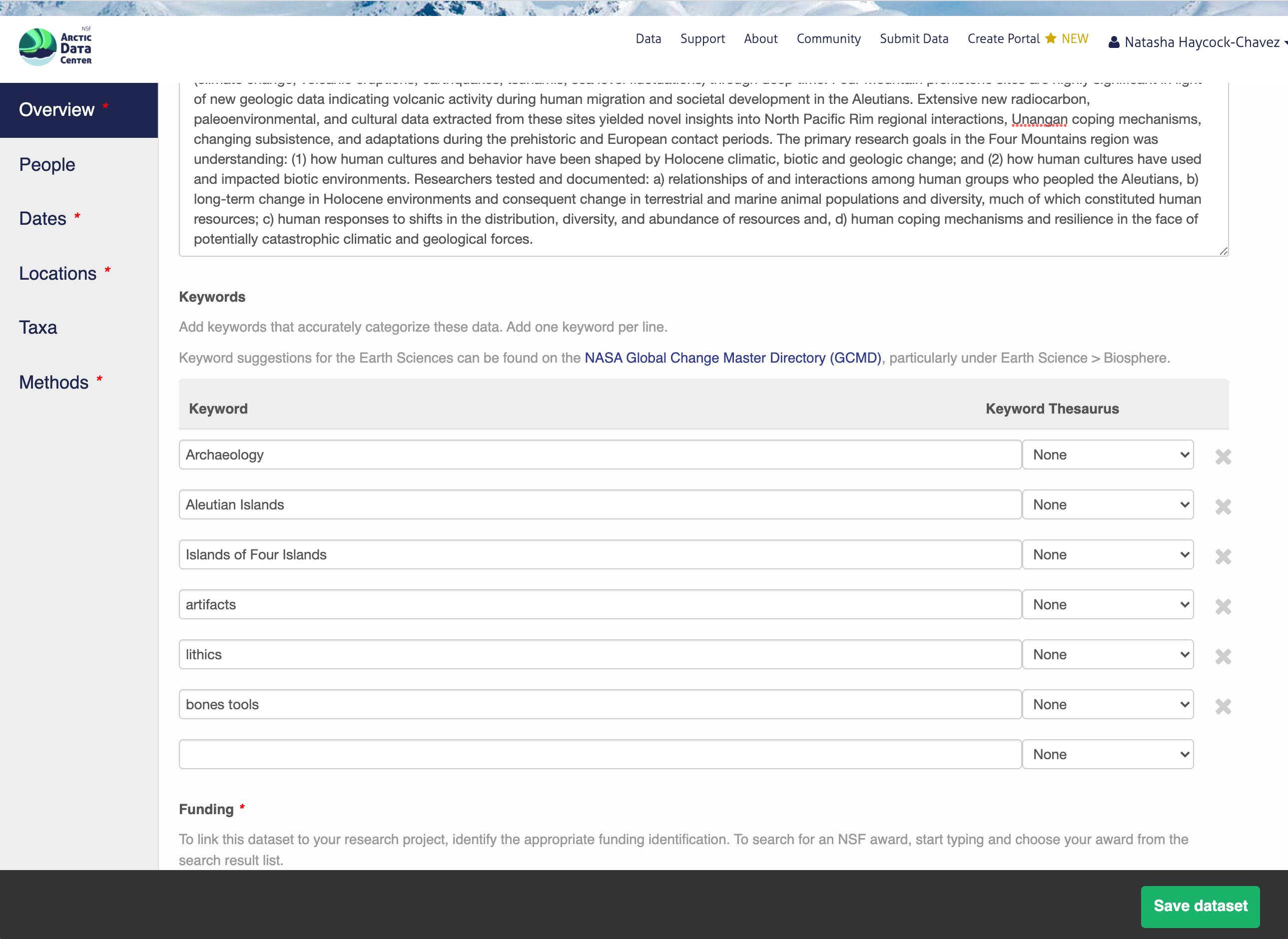

3.5.3.2 Enter Overview information

This includes a descriptive title, abstract, and keywords.

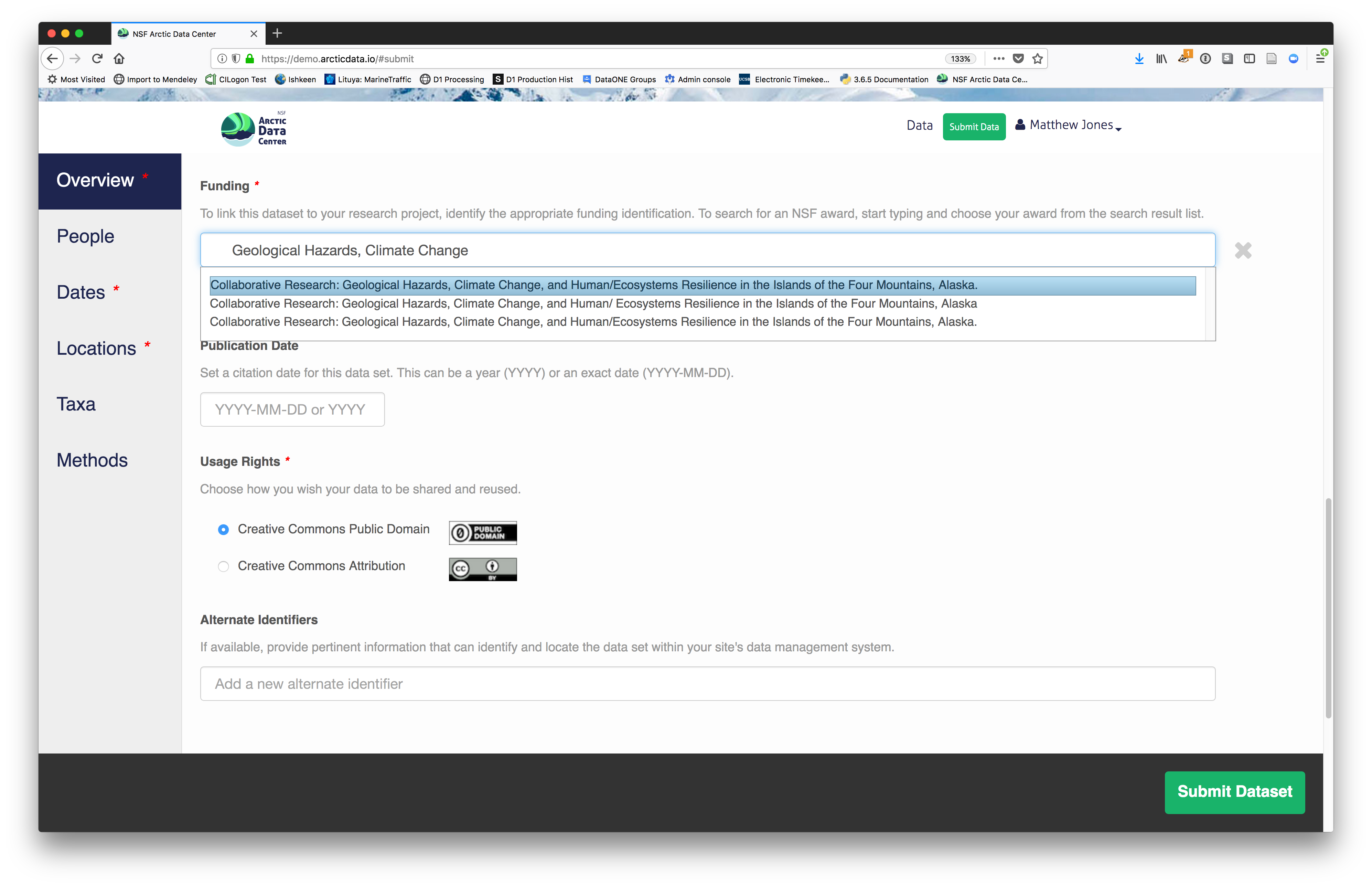

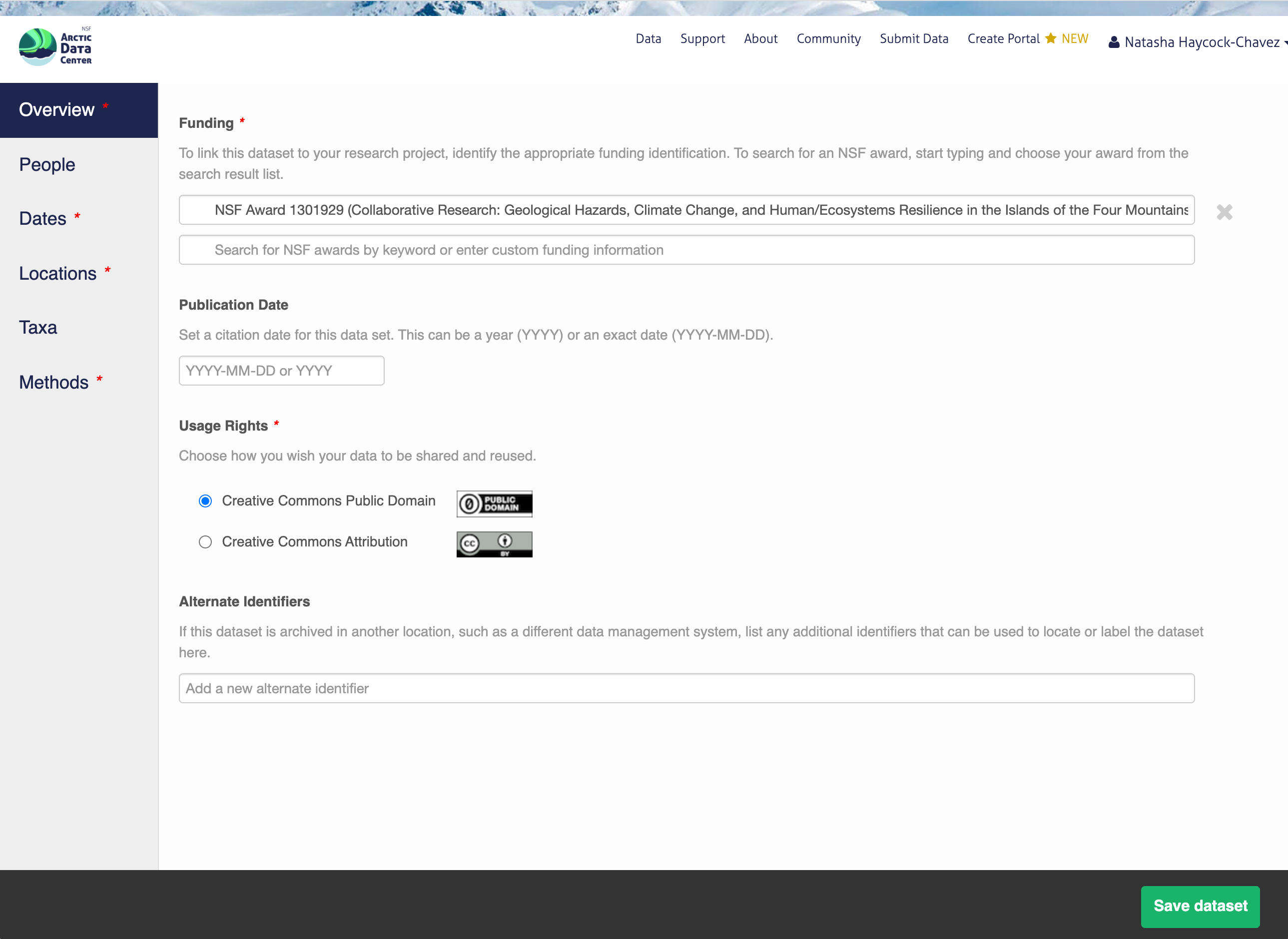

You also must enter a funding award number and choose a license. The funding field will search for an NSF award identifier based on words in its title or the number itself. The licensing options are CC-0 and CC-BY, which both allow your data to be downloaded and re-used by other researchers.

- CC-0 Public Domain Dedication: “…can copy, modify, distribute and perform the work, even for commercial purposes, all without asking permission.”

- CC-BY: Attribution 4.0 International License: “…free to…copy,…redistribute,…remix, transform, and build upon the material for any purpose, even commercially,…[but] must give appropriate credit, provide a link to the license, and indicate if changes were made.”

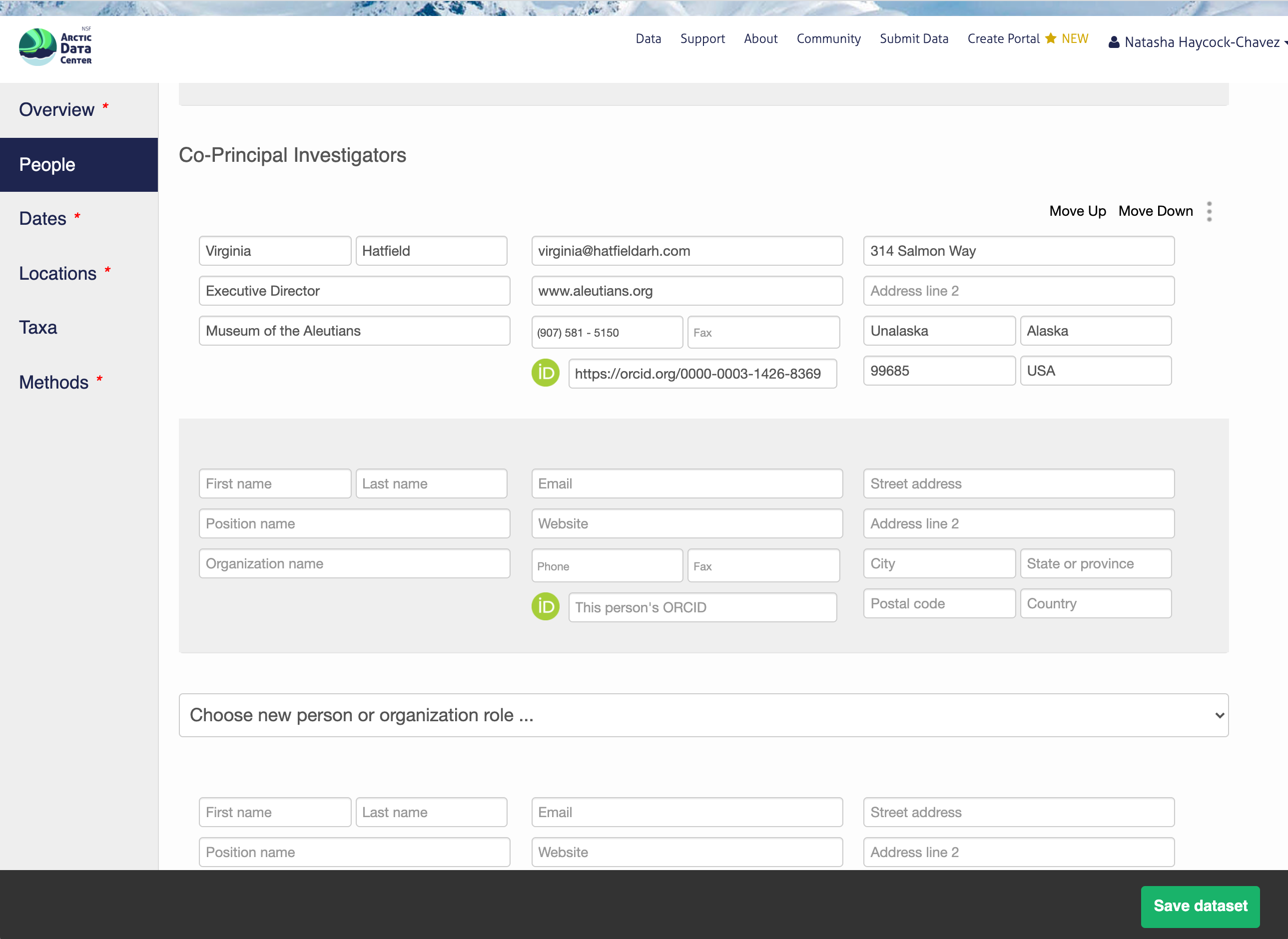

3.5.3.3 People Information

Information about the people associated with the dataset is essential to provide credit through citation and to help people understand who made contributions to the product. Enter information for the following people:

- Creators - all the people who should be in the citation for the dataset

- Contacts - one is required, but defaults to the first Creator if omitted

- Principal Investigators

- Any others that are relevant

For each, please provide their ORCID identifier, which helps link this dataset to their other scholarly works.

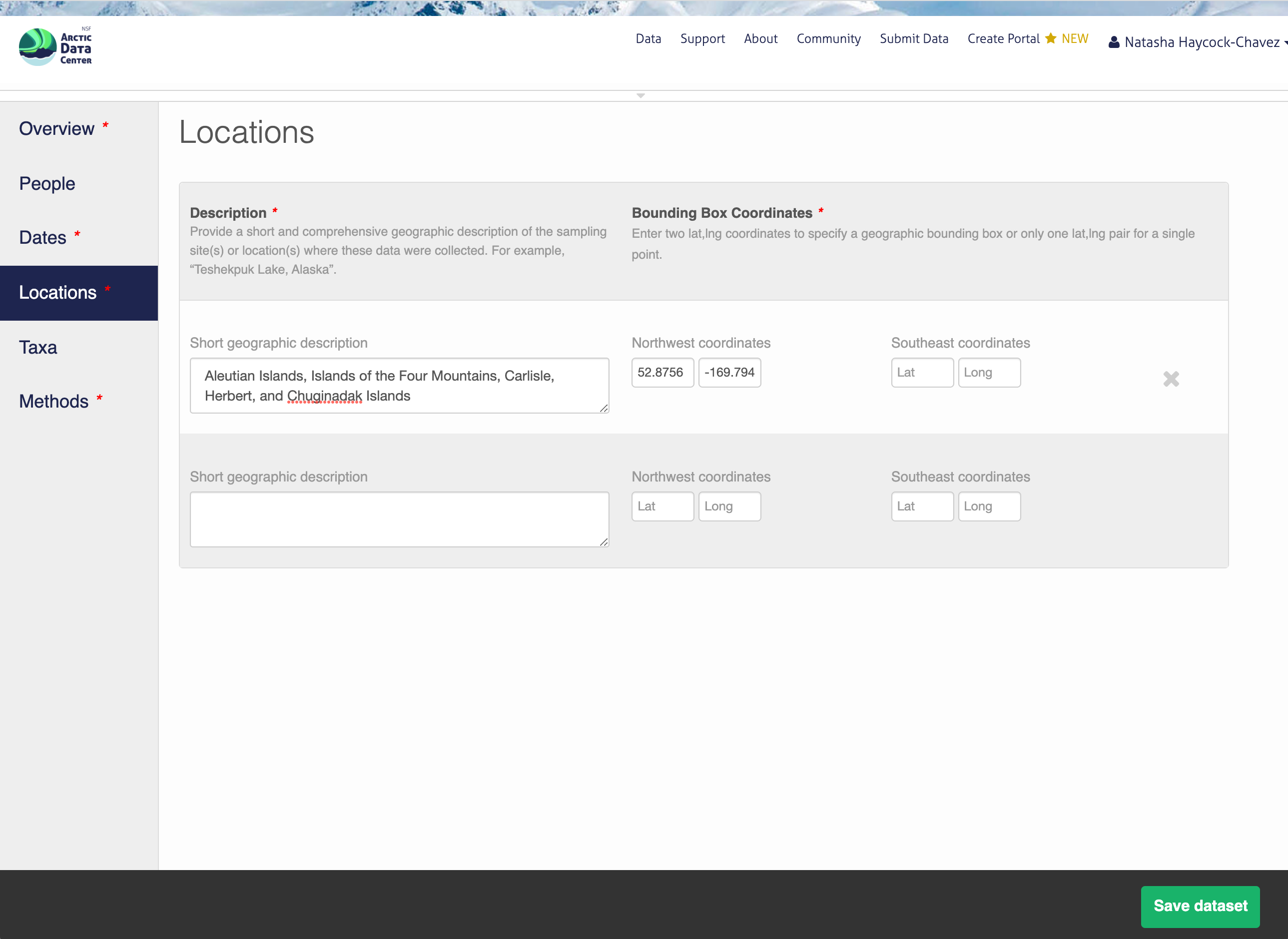

3.5.3.4 Location Information

The geospatial location where the data were collected is critical for discovery and interpretation of the data. Enter coordinates in decimal degrees, and be sure to use negative values for West longitudes. The editor allows you to enter multiple locations, which you should do if you had noncontiguous sampling locations. This is particularly important if your sites are separated by large distances, so that spatial search will be more precise.

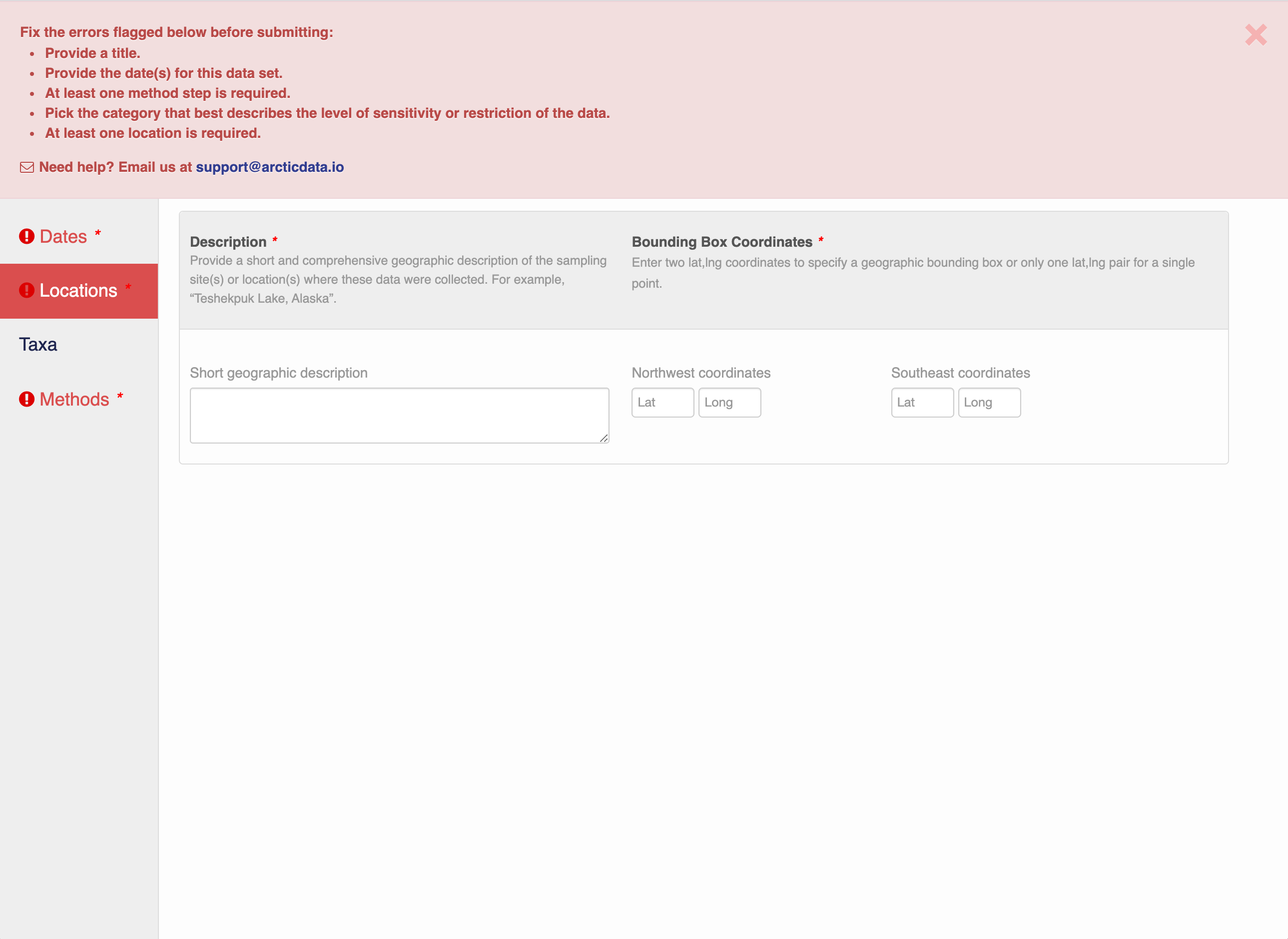

Note that, if you miss fields that are required, they will be highlighted in red to draw your attention. In this case, for the description, provide a comma-separated place name, ordered from the local to global:

- Mission Canyon, Santa Barbara, California, USA
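If your field notes record positions in degrees, minutes, and seconds, they need converting before entry. The following is a minimal sketch of that conversion, including the sign convention for West longitudes; the function name is hypothetical and the coordinates are illustrative only, not a surveyed position.

```python
def to_decimal_degrees(degrees, minutes=0.0, seconds=0.0, hemisphere="N"):
    """Convert degrees/minutes/seconds plus a hemisphere letter
    to signed decimal degrees."""
    hemisphere = hemisphere.upper()
    if hemisphere not in {"N", "S", "E", "W"}:
        raise ValueError(f"unknown hemisphere: {hemisphere!r}")
    value = abs(degrees) + minutes / 60 + seconds / 3600
    # South latitudes and West longitudes are negative.
    return -value if hemisphere in {"S", "W"} else value


# Illustrative coordinates only.
lat = to_decimal_degrees(34, 30, 0, "N")
lon = to_decimal_degrees(119, 45, 0, "W")
print(lat, lon)
```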

3.5.3.5 Temporal Information

Add the temporal coverage of the data, which represents the time period to which data apply. Again, use multiple date ranges if your sampling was discontinuous.
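When sampling was discontinuous, the date ranges can be derived from the list of sampling dates rather than worked out by hand. This is a minimal sketch under the assumption that a gap of more than one day starts a new range; the function name, the gap threshold, and the dates are hypothetical.

```python
from datetime import date, timedelta


def to_date_ranges(days, max_gap=timedelta(days=1)):
    """Group sampling dates into (start, end) ranges, starting a new
    range wherever the gap between dates exceeds max_gap."""
    ranges = []
    for day in sorted(set(days)):
        if ranges and day - ranges[-1][1] <= max_gap:
            ranges[-1] = (ranges[-1][0], day)  # extend the current range
        else:
            ranges.append((day, day))          # start a new range
    return ranges


# Hypothetical sampling dates with a break between two field campaigns.
sampled = [date(2005, 6, 1), date(2005, 6, 2), date(2005, 6, 3),
           date(2006, 6, 10), date(2006, 6, 11)]
ranges = to_date_ranges(sampled)
for start, end in ranges:
    print(start.isoformat(), "to", end.isoformat())
```

Each resulting pair would be entered as one date range in the form.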

3.5.3.6 Methods

Methods are critical to accurate interpretation and reuse of your data. The editor allows you to add multiple different methods sections, so that you can include details of sampling methods, experimental design, quality assurance procedures, and/or computational techniques and software. Please be complete with your methods sections, as they are fundamentally important to reuse of the data.

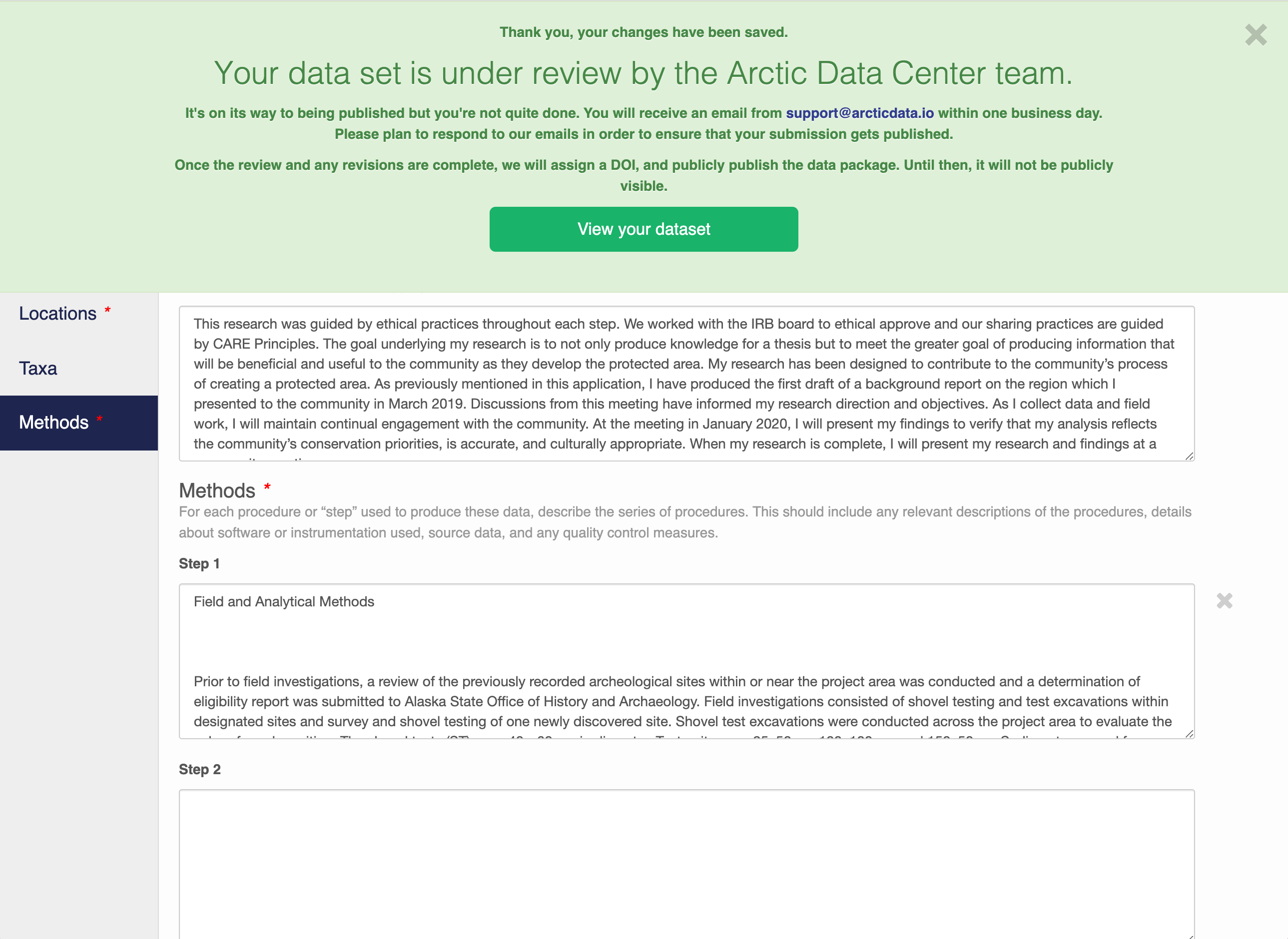

3.5.3.7 Save a first version with Submit

When finished, click the Submit Dataset button at the bottom.

If there are errors or missing fields, they will be highlighted.

Correct those, and then try submitting again. When you are successful, you should see a large green banner with a link to the current dataset view. Click the X to close that banner if you want to continue editing metadata.

Success!

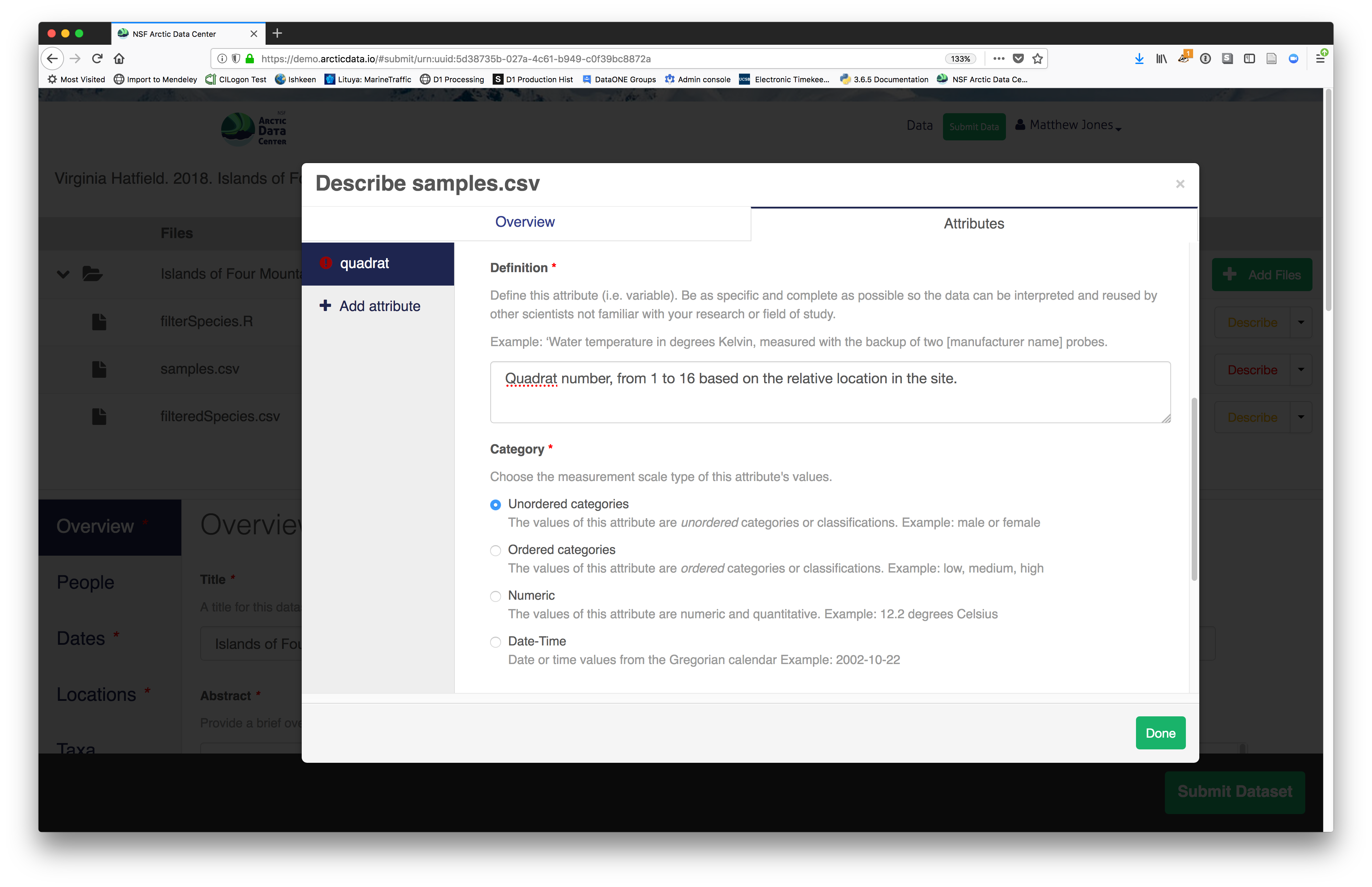

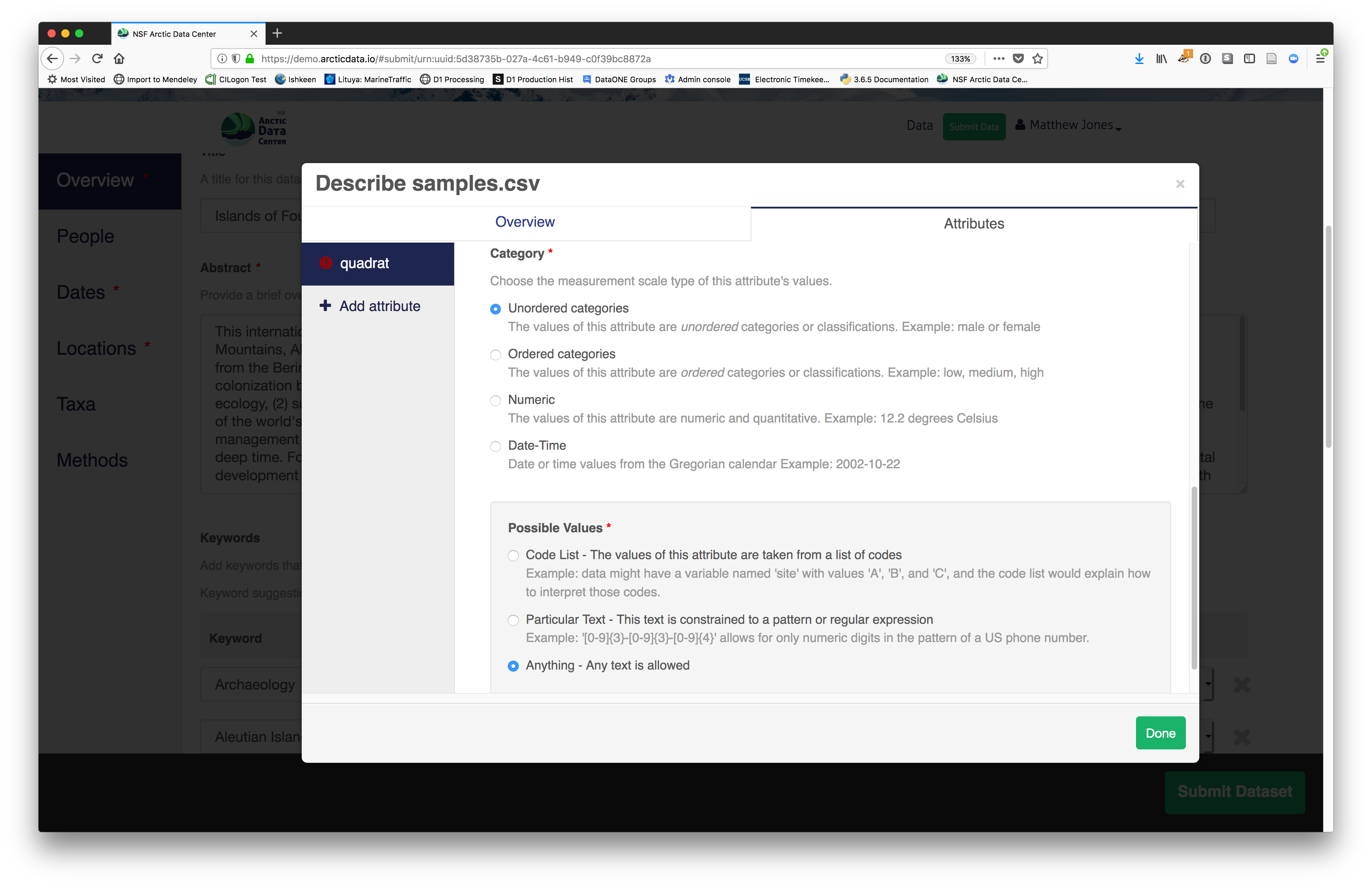

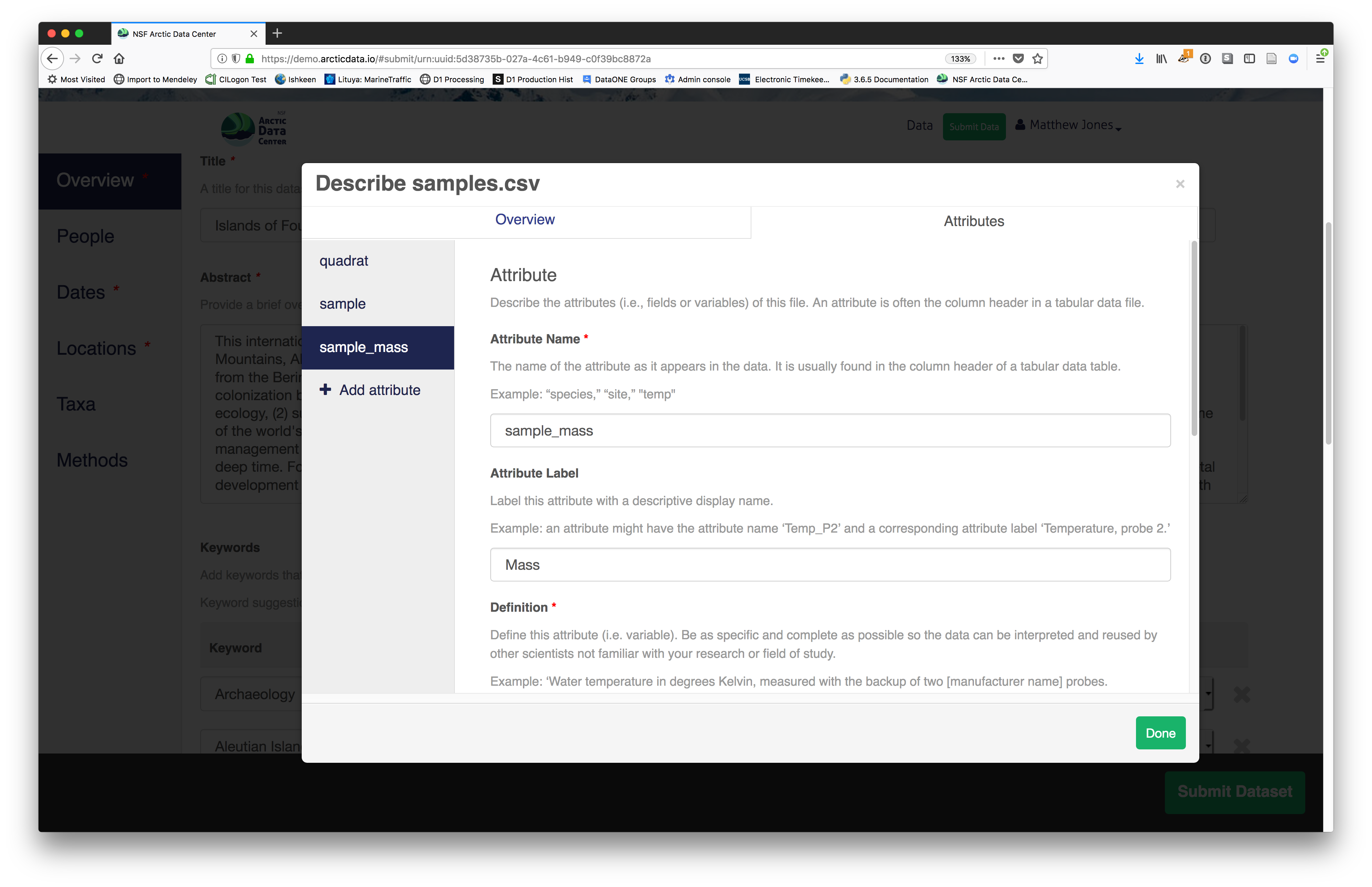

3.5.4 File and variable level metadata

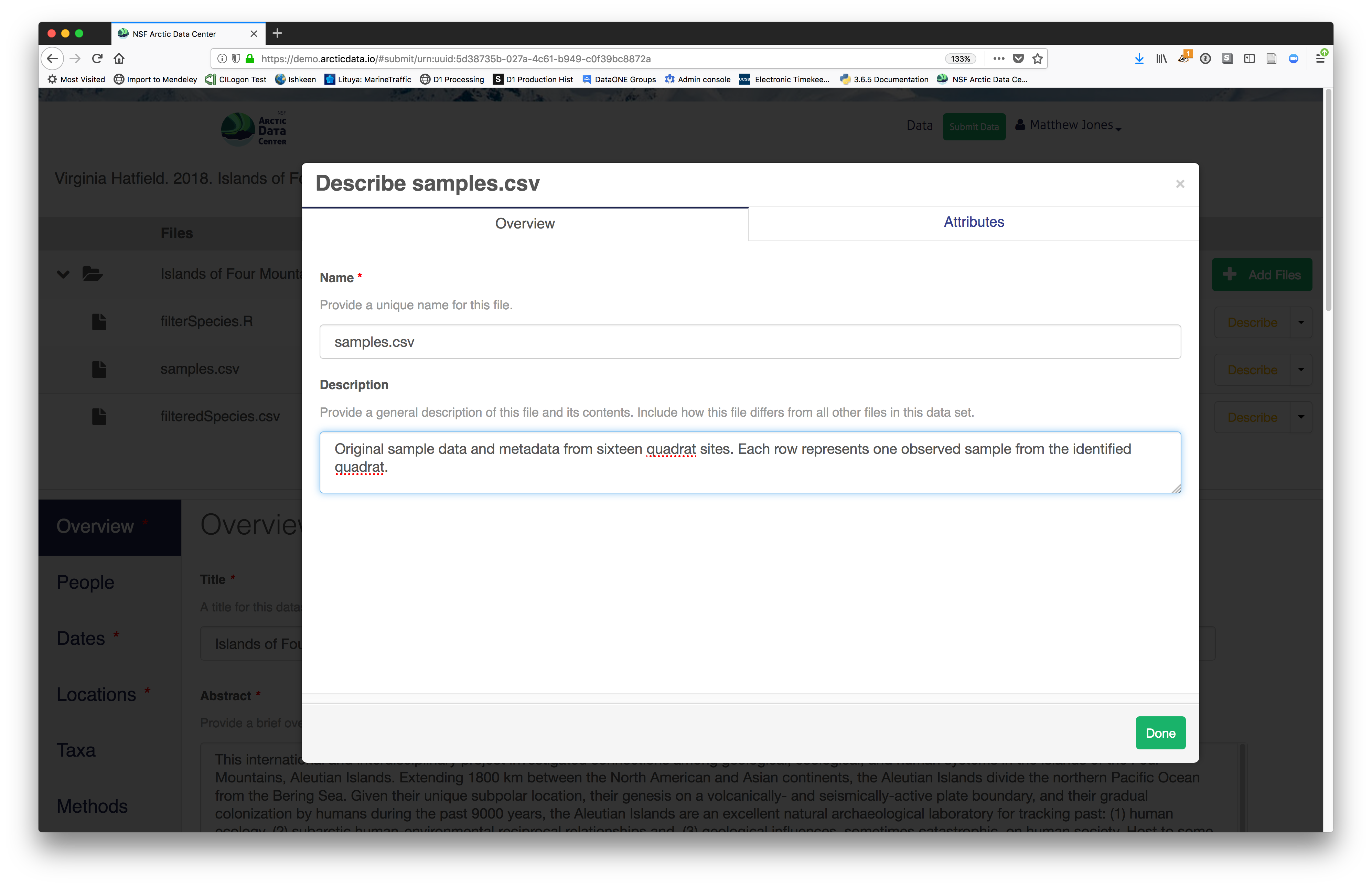

The final major section of metadata concerns the structure and content of your data files. In this case, provide the names and descriptions of the data contained in each file, as well as details of their internal structure.

For example, for data tables, you’ll need the name, label, and definition of each variable in your file. Click the Describe button to access a dialog to enter this information.

The Attributes tab is where you enter variable (aka attribute) information, including:

- variable name (for programs)

- variable label (for display)

- variable definition (be specific)

- type of measurement

- units & code definitions

You’ll need to add these definitions for every variable (column) in the file. When done, click Done.

Now, the list of data files will show a green checkbox indicating that you have fully described that file’s internal structure. Proceed with the other CSV files, and then click Submit Dataset to save all of these changes.

After you get the big green success message, you can visit your dataset and review all of the information that you provided. If you find any errors, simply click Edit again to make changes.
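Before filling in the Attributes tab, it can help to check locally that you have a definition ready for every column. The sketch below compares a CSV header against a set of prepared descriptions; the CSV content, column names, and descriptions are hypothetical.

```python
import csv
import io

# Hypothetical CSV content and prepared attribute descriptions.
csv_text = "site,species,count\nA,sockeye,12\n"
attributes = {
    "site": "Sampling site code",
    "species": "Common name of the species observed",
}


def undescribed_columns(csv_file, described):
    """Return header columns that have no attribute description yet."""
    header = next(csv.reader(csv_file))
    return [col for col in header if col not in described]


missing = undescribed_columns(io.StringIO(csv_text), attributes)
print(missing)
```

With a real file, the `io.StringIO` object would be replaced by an open file handle.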

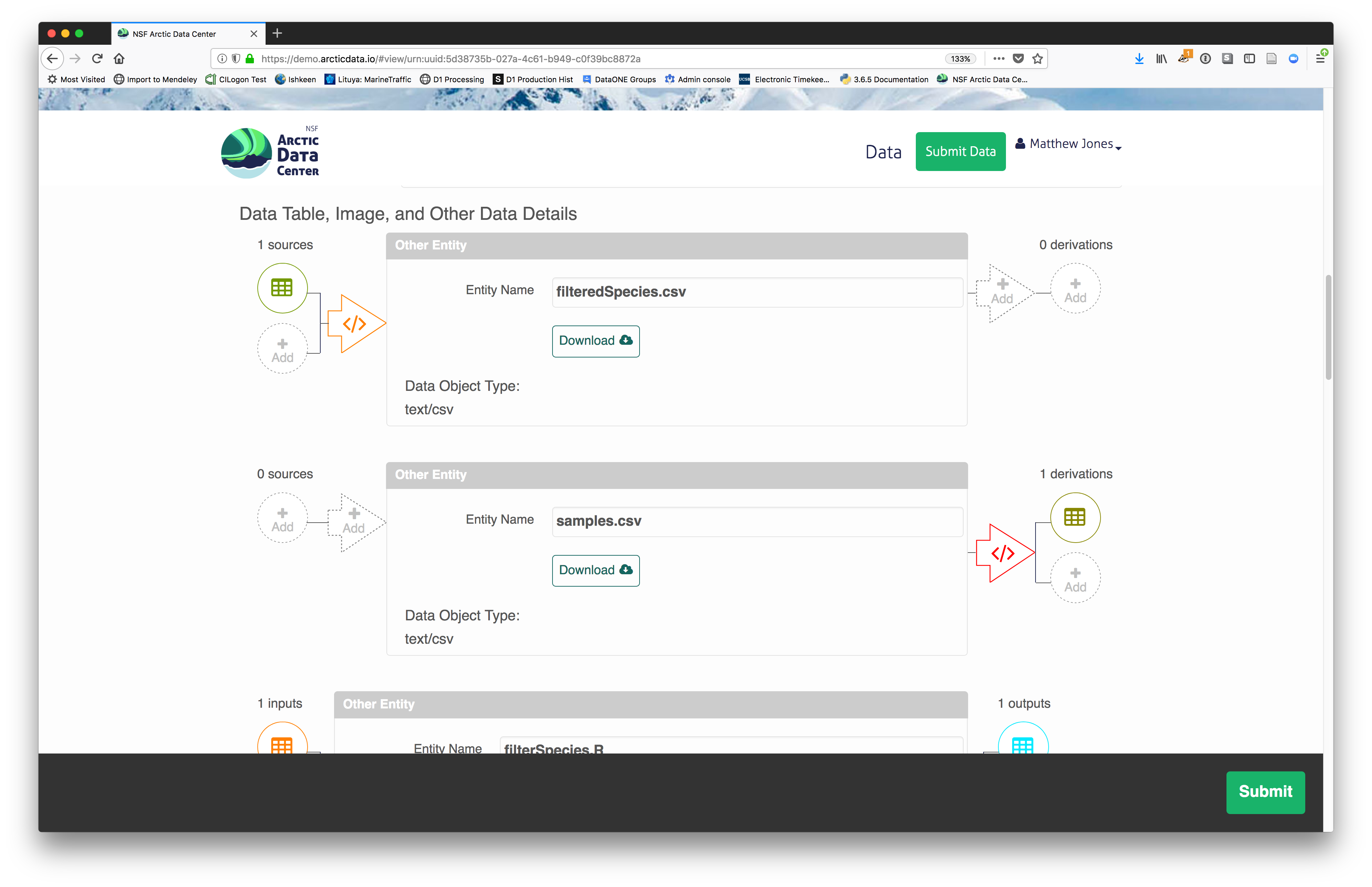

3.5.5 Add workflow provenance

Understanding the relationships between files (aka provenance) in a package is critically important, especially as the number of files grows. Raw data are transformed and integrated to produce derived data, which are often then used in analysis and visualization code to produce final outputs. In the DataONE network, we support structured descriptions of these relationships, so researchers can see the flow of data from raw data to derived to outputs.
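These relationships form a small graph, which can be sketched as follows. This is an illustration of the idea only, not the format DataONE uses to store provenance; the file names and the dictionary keys are hypothetical.

```python
# Hypothetical file names; each entry records what a file was derived
# from and which script produced it.
provenance = {
    "summary.csv": {
        "derived_from": ["cleaned.csv"],
        "generated_by": "summarize.R",
    },
    "cleaned.csv": {
        "derived_from": ["raw.csv"],
        "generated_by": "clean.R",
    },
    "raw.csv": {"derived_from": [], "generated_by": None},
}


def lineage(name, graph):
    """Return every upstream data source of `name`, nearest first."""
    found = []
    queue = list(graph.get(name, {}).get("derived_from", []))
    while queue:
        source = queue.pop(0)
        if source not in found:
            found.append(source)
            queue.extend(graph.get(source, {}).get("derived_from", []))
    return found


print(lineage("summary.csv", provenance))
```

Walking the graph this way answers the question a reuser most often has: which raw data ultimately stand behind a derived product.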

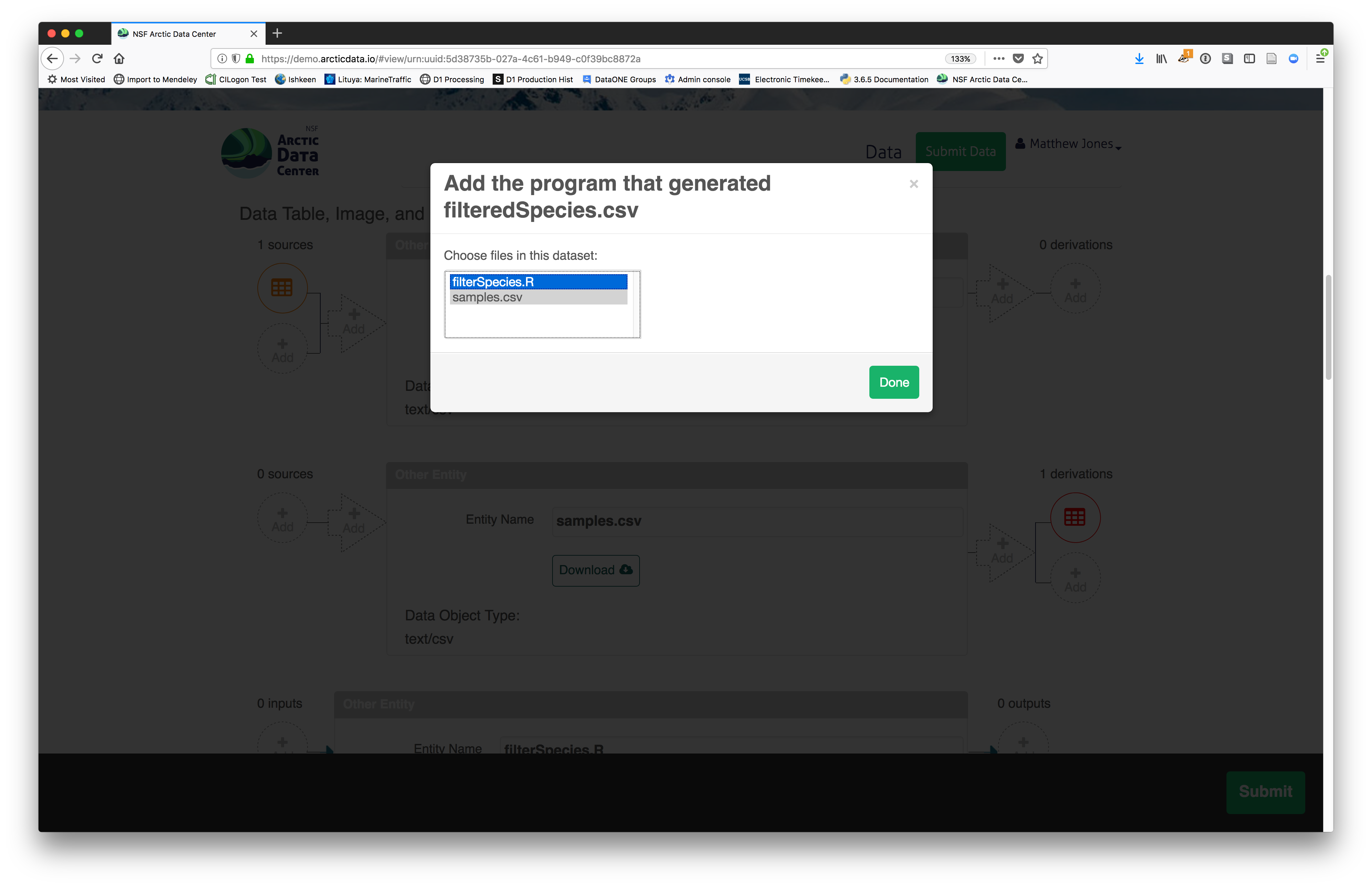

You add provenance by navigating to the data table descriptions and selecting the Add buttons to link the data and scripts that were used in your computational workflow. On the left side, select the Add circle to add an input data source to the filteredSpecies.csv file. This starts building the provenance graph to explain the origin and history of each data object.

The linkage to the source dataset should appear.

Then you can add the link to the source code that handled the conversion between the data files by clicking on Add arrow and selecting the R script:

The diagram now shows the relationships among the data files and the R script, so click Submit to save another version of the package.

Et voilà! A beautifully preserved data package!

3.6 The FAIR and CARE Principles

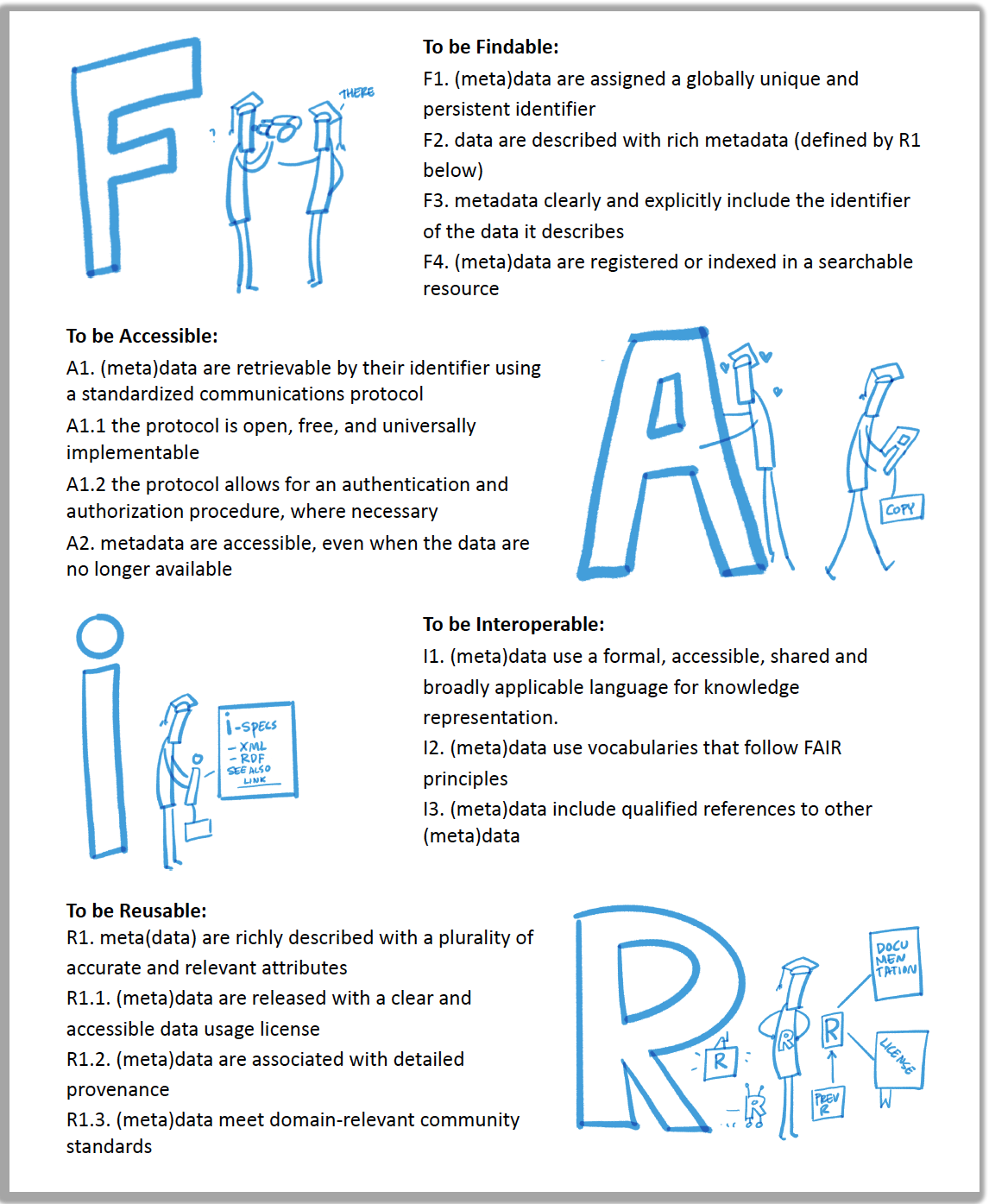

The idea behind these principles is to increase access and usage of complex and large datasets for innovation, discovery, and decision-making. This means making data available to machines, researchers, Indigenous communities, policy makers, and more.

With the need to improve the infrastructure supporting the reuse of data, a group of diverse stakeholders from academia, funding agencies, publishers and industry came together to jointly endorse measurable guidelines that enhance the reusability of data (Wilkinson et al. (2016)). These guidelines became what we now know as the FAIR Data Principles.

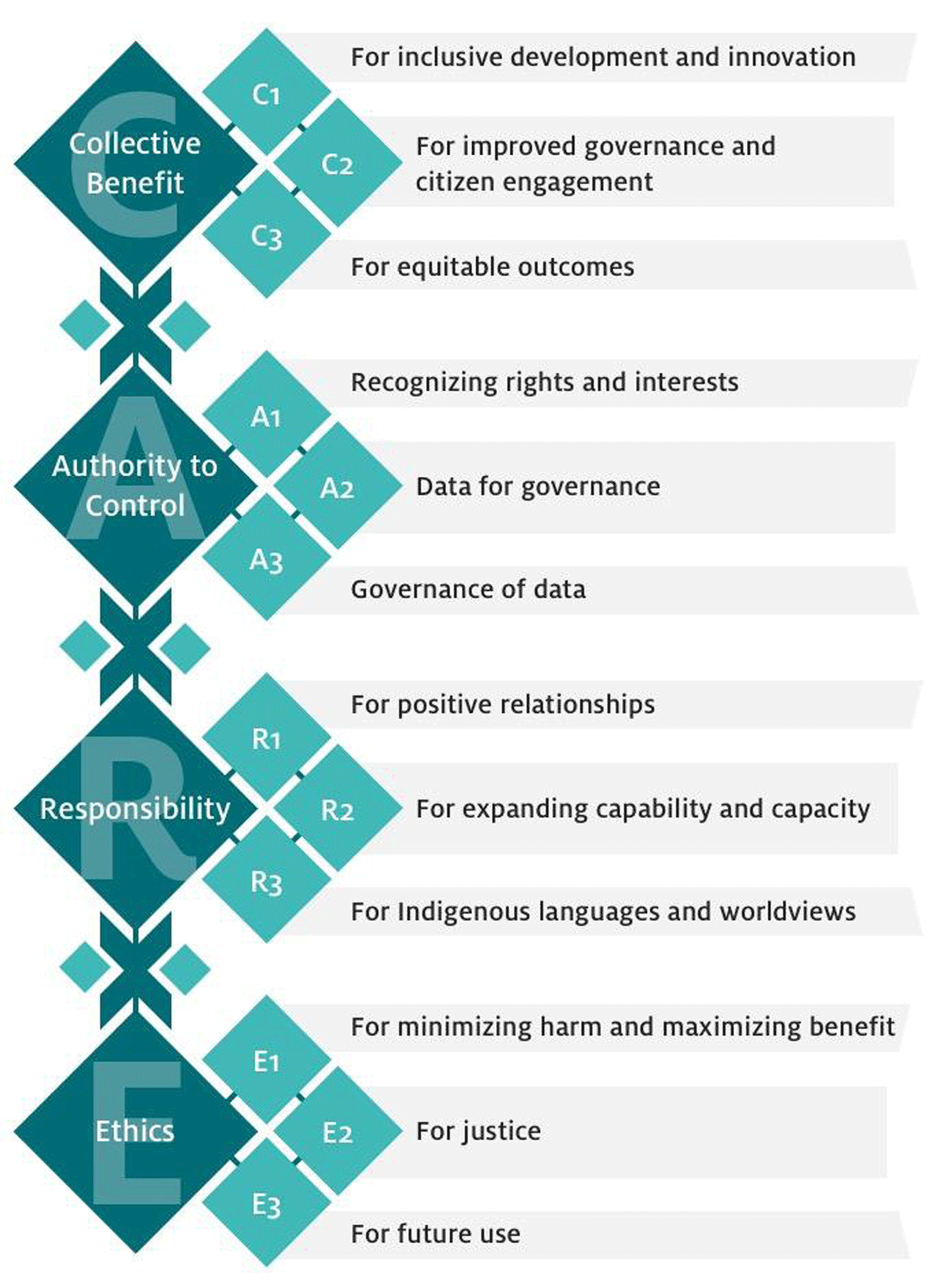

Following the discussion about FAIR and incorporating activities and feedback from the Indigenous Data Sovereignty network, the Global Indigenous Data Alliance developed the CARE principles (Carroll et al. (2021)). The CARE principles for Indigenous Data Governance complement the more data-centric approach of the FAIR principles, introducing social responsibility to open data management practices.

Together, these two sets of principles encourage us to push open and other data movements to consider both people and purpose in their advocacy and pursuits. The goal is that researchers, stewards, and any users of data will be FAIR and CARE (Carroll et al. (2020)).

3.6.1 What is FAIR?

With the rise of open science and more accessible data, it is becoming increasingly important to address accessibility and openness in multiple ways. The FAIR principles focus on how to prepare your data so that it can be reused by others (versus just open access of research outputs). In 2016, the data stewardship community published principles surrounding best practices for open data management, including FAIR. FAIR stands for Findable, Accessible, Interoperable, and Reusable. It is best to think about FAIR as a set of comprehensive standards for you to use while curating your data. Each principle of FAIR can be translated into a set of actions you can take during the entire lifecycle of research data management.

| FAIR | Definition |

|---|---|

| (F) Findable | Metadata and data should be easy to find for both humans and computers. |

| (A) Accessible | Once someone finds the required data, they need to know how the data can be accessed. |

| (I) Interoperable | The data needs to be easily integrated with other data for analysis, storage, and processing. |

| (R) Reusable | Data should be well-described so they can be reused and replicated in different settings. |

3.6.2 FAIR Principles in Practice

This is not an exhaustive list of actions for applying FAIR Principles to your research, but these are important big picture concepts you should always keep in mind. We’ll be going through the resources linked below so that you know how to use them in your own work.

- It’s all about the metadata. To make your data and research as findable and as accessible as possible, it’s crucial that you provide rich metadata. This includes using a field-specific metadata standard (e.g., EML, the Ecological Metadata Language, for earth and environmental sciences), adding a globally unique identifier (e.g., a Digital Object Identifier) to your datasets, and more. As discussed earlier, quality metadata goes a long way in making your data FAIR. One tool to help apply FAIR principles to non-FAIR data is the FAIRification process. This workflow was developed by GO FAIR, a self-governed initiative that aims to help implement the FAIR data principles.

- Assess the FAIRness of your research. The FAIR Principles are a lens to apply to your work. It’s important to ask yourself questions about finding and accessing your data, about how machine-readable your datasets and metadata are, and about how reusable they are throughout the entirety of your project. This means you should be re-evaluating the FAIRness of your work over and over again. One way to check the FAIRness of your work is to use tools like FAIR-Aware and the FAIR Data Maturity Model. These tools are self-assessments that can be thought of as checklists for FAIR, and they will provide guidance if you’re missing anything.

- Make FAIR decisions during the planning process. You can ensure FAIR Principles are going to be implemented in your work by thinking about them and making FAIR decisions early on and throughout the data life cycle. As you document your data, always keep the FAIR lens in mind.

3.6.3 What is CARE?

The CARE Principles for Indigenous Data Governance were developed by the International Indigenous Data Sovereignty Interest Group in consultation with Indigenous Peoples, scholars, non-profit organizations, and governments (Carroll et al. (2020)). They address concerns related to the people and purpose of data, and they advocate for greater Indigenous control and oversight in order to share data on Indigenous Peoples’ terms. These principles are people- and purpose-oriented, reflecting the crucial role data have in advancing Indigenous innovation and self-determination. CARE stands for Collective benefit, Authority to control, Responsibility, and Ethics. The principles detail that the use of Indigenous data should result in tangible benefits for Indigenous collectives through inclusive development and innovation, improved governance and citizen engagement, and equitable outcomes.

| CARE | Definition |

|---|---|

| (C) Collective Benefit | Data ecosystems shall be designed and function in ways that enable Indigenous Peoples to derive benefit from the data. |

| (A) Authority to Control | Indigenous Peoples’ rights and interests in Indigenous data must be recognized and their authority to control such data be empowered. Indigenous data governance enables Indigenous Peoples and governing bodies to determine how Indigenous Peoples, as well as Indigenous lands, territories, resources, knowledge and geographical indicators, are represented and identified within data. |

| (R) Responsibility | Those working with Indigenous data have a responsibility to share how those data are used to support Indigenous Peoples’ self-determination and collective benefit. Accountability requires meaningful and openly available evidence of these efforts and the benefits accruing to Indigenous Peoples. |

| (E) Ethics | Indigenous Peoples’ rights and well being should be the primary concern at all stages of the data life cycle and across the data ecosystem. |

3.6.4 CARE Principles in Practice

Make your data accessible to Indigenous groups. Much of the CARE Principles is about sharing and making data accessible to Indigenous Peoples. To do so, consider publishing your data in Indigenous-founded data repositories such as:

Use Traditional Knowledge (TK) and Biocultural (BC) Labels. How do we think of intellectual property for Traditional and Biocultural Knowledge, knowledge that predates any intellectual property system? In many cases, institutions, organizations, and outsiders hold the copyrights to this knowledge and to data that come from Indigenous lands, territories, waters, and traditions. Traditional Knowledge and Biocultural Labels are digital tags that establish Indigenous cultural authority and governance over Indigenous data and collections by adding provenance information and contextual metadata (including community names), protocols, and permissions for access, use, and circulation. The labels record cultural authority in a way that recognizes the inherent sovereignty that Indigenous communities have over knowledge. This gives Indigenous groups more control over their cultural material and shows users what appropriate behavior looks like. Local Contexts is a global initiative that supports Indigenous communities with tools for attributing their cultural heritage.

Assess the CAREness of your research. Like FAIR, the CARE Principles are a lens to apply to your work. With CARE, it’s important to center human well-being in addition to open science and data sharing. To do this, reflect on how you’re giving access to Indigenous groups, on whom your data impact and the relationships you have with them, and on the ethical concerns in your work. The Arctic Data Center, a data repository for Arctic research, now requires an Ethical Research Practices Statement with data submissions, and it provides several guidelines on how to write such a statement and what to include in it.

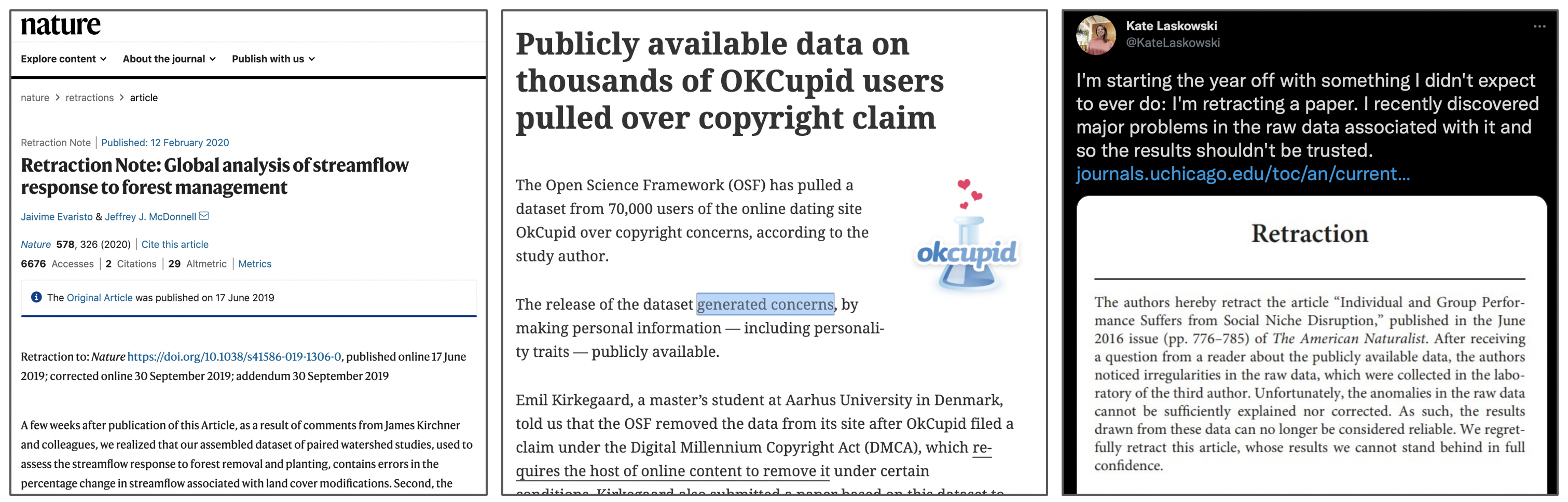

3.7 Research Data Publishing Ethics

For over 20 years, the Committee on Publication Ethics (COPE) has provided trusted guidance on ethical practices for scholarly publishing. The COPE guidelines have been broadly adopted by academic publishers across disciplines, and represent a common approach to identify, classify, and adjudicate potential breaches of ethics in publication such as authorship conflicts, peer review manipulation, and falsified findings, among many other areas. Despite these guidelines, there has been a lack of ethics standards, guidelines, or recommendations for data publications, even while some groups have begun to evaluate and act upon reported issues in data publication ethics.

To address this gap, the FORCE11 Working Group on Research Data Publishing Ethics was formed as a collaboration among research data professionals and the Committee on Publication Ethics (COPE) “to develop industry-leading guidance and recommended best practices to support repositories, journal publishers, and institutions in handling the ethical responsibilities associated with publishing research data.” The group released the “Joint FORCE11 & COPE Research Data Publishing Ethics Working Group Recommendations” (Puebla, Lowenberg, and WG 2021), which outlines recommendations for four categories of potential data ethics issues:

- Authorship and Contribution Conflicts

    - Authorship omissions

    - Authorship ordering changes / conflicts

    - Institutional investigation of author finds misconduct

- Legal/regulatory restrictions

    - Copyright violation

    - Insufficient rights for deposit

    - Breaches of national privacy laws (GDPR, CCPA)

    - Breaches of biosafety and biosecurity protocols

    - Breaches of contract law governing data redistribution

- Risks of publication or release

    - Risks to human subjects

        - Lack of consent

        - Breaches of human rights

        - Release of personally identifiable information (PII)

    - Risks to species, ecosystems, historical sites

        - Locations of endangered species or historical sites

    - Risks to communities or societies

        - Data harvested for profit or surveillance

        - Breaches of data sovereignty

- Rigor of published data

    - Unintentional errors in collection, calculation, display

    - Un-interpretable data due to lack of adequate documentation

    - Errors of study design and inference

    - Data manipulation or fabrication

Guidelines cover what actions need to be taken, depending on whether the data are already published or not, as well as who should be involved in decisions, who should be notified of actions, and when the public should be notified. The group has also published templates for use by publishers and repositories to announce the extent to which they plan to conform to the data ethics guidelines.

3.8 Exercise: Evaluate a Data Package on the ADC Repository

Explore data packages published on the ADC and assess the quality of their metadata. Imagine you’re a data curator!

- Break into groups and use the following data packages:

    - Group A: ADC Data Portal White spruce (Picea glauca) densities at Brooks Range treelines, Alaska (2019-2022)

    - Group B: ADC Data Portal A 2022 household survey about impacts and human response to climate-related multi-hazards in Anchorage, Fairbanks, and Whitehorse

    - Group C: ADC Data Portal Summertime water quality measurements at beaver ponds and associated locations in Arctic Alaska, 2022-2026

You and your group will evaluate a data package for its: (1) metadata quality, (2) data documentation quality for reproducibility, and (3) FAIRness and CAREness.

- View our Data Package Assessment Rubric and make a copy of it to:

    - Investigate the metadata in the provided data

        - Do the metadata meet the standards we talked about? How so?

        - If not, how would you improve the metadata based on the standards we talked about?

    - Investigate the overall data documentation in the data package

        - Is the documentation sufficient for reproducibility? Why or why not?

        - If not, how would you improve the data documentation? What’s missing?

    - Identify elements of FAIR and CARE

        - Is it clear that the data package used a FAIR and CARE lens?

        - If not, what documentation or considerations would you add?

- Elect someone to share back to the group the following:

    - How easy or challenging was it to find the metadata and other data documentation you were evaluating? Why?

    - What documentation stood out to you? What did you like or not like about it?

    - How well did these data packages uphold FAIR and CARE Principles?

    - Do you feel like you understand the research project well enough to use the data yourself (i.e., reproducibility)?